- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Earn a 50% discount on the DP-600 certification exam by completing the Fabric 30 Days to Learn It challenge.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Calculate time between time-stamp entries base...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Calculate time between time-stamp entries based on row conditions

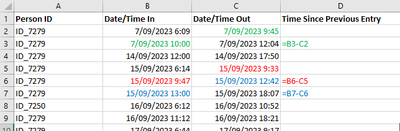

I have a table of swipe entries to a site. On occasions, people can swipe in and out multiple times a day.

I want to understand the the time off-site by calculating the difference between the swipe-out events and the following swipe-in events for the same day and same user ID.

Table ishown below. I am trying to make a calculated columnD. 3 examples provided of the calc I am trying to do in different colours.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@bocajpedersen

Create a blank Query, go to the Advanced Editor, clear the existing code, and paste the codes give below and follow the steps.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("fZXLbhsxDEV/xfA6RiVK1INrb7xKgXZnBEVReNFF06J1/j+XMylGpJIBZmEf32tx+NL1evx8+/vv9/Phcj4+HM/f77dPX3/+uh0uz+br48v9+PRwPV7O3yrVjt9CPX25/TlRoHQoErowO9ols4Sy44tBAh5vjCQhSwrOGbOVBOHkcRUOkqN38hhrzBKbo11SEppONIpc8TiKODJJoh1jTMtLRo+bhCqheWcxoZLE5CiSxjg17xhjVCdFjxsYErQ58RkSU8mcJTdHuyCxVP2JRkFNFZYiCI6atI+NyA5iCuRxV2fq3tk2CatxDXWgTRJC3fN1bS6kz1KtUhGeQn1P4jBeHNFOheybBHVOb6F2U0hCfcmXw0oyHvY4a7rH7MxOjB5qnT1GDxdJfkAojC3AVcfWUhzX5gEZFWiBIDE6qvXFUE4FSafHH/dtC2Dci8e0dGvac2L0eF08BqOL0RqTM28SdcHr6Fspi88rb5KieYjsKAYukUnrZISEF4nDTUsZm3eW8cj/S3Kk+D/kO03DbCUIlidn1WDjVJK6SVDtorvJUl1Z/M44tzFW9Oa6lgfa1Ue840PzFKHsqG7qoBvwYyMkKa7tajDSiimfstPHFkCtQ58ochPSrm9VWKqbOpgduRipjO/I6J7sqDZrmpcyVdcA3B3VJY/qTqNsJbjWll1ncNWtQv5mpuaOzMXR9f8o+1ibb/Pl0jJYl/JyTz69Ag==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"(blank)" = _t, #"(blank).1" = _t, #"(blank).2" = _t]),

#"Promoted Headers" = Table.PromoteHeaders(Source, [PromoteAllScalars=true]),

#"Changed Type" = Table.TransformColumnTypes(#"Promoted Headers",{{"Person ID", type text}, {"Date/Time In", type datetime}, {"Date/Time Out", type datetime}}),

#"Sorted Rows" = Table.Sort(#"Changed Type",{{"Person ID", Order.Descending}, {"Date/Time In", Order.Ascending}, {"Date/Time Out", Order.Ascending}}),

#"Added Index" = Table.AddIndexColumn(#"Sorted Rows", "Index", -1, 1, Int64.Type),

#"Added Custom" =

Table.AddColumn(

#"Added Index", "Custom", each

if [Index] >= 0 then

let

__id = #"Added Index"{[Index]}[Person ID],

__pDate = #"Added Index"{[Index]}[#"Date/Time Out"],

__cdate = [#"Date/Time In"]

in

if __id = [Person ID] and

Date.From(__pDate) = Date.From(__cdate)

then

Duration.From(__cdate-__pDate)

else

null else null, type duration

)

in

#"Added Custom"

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

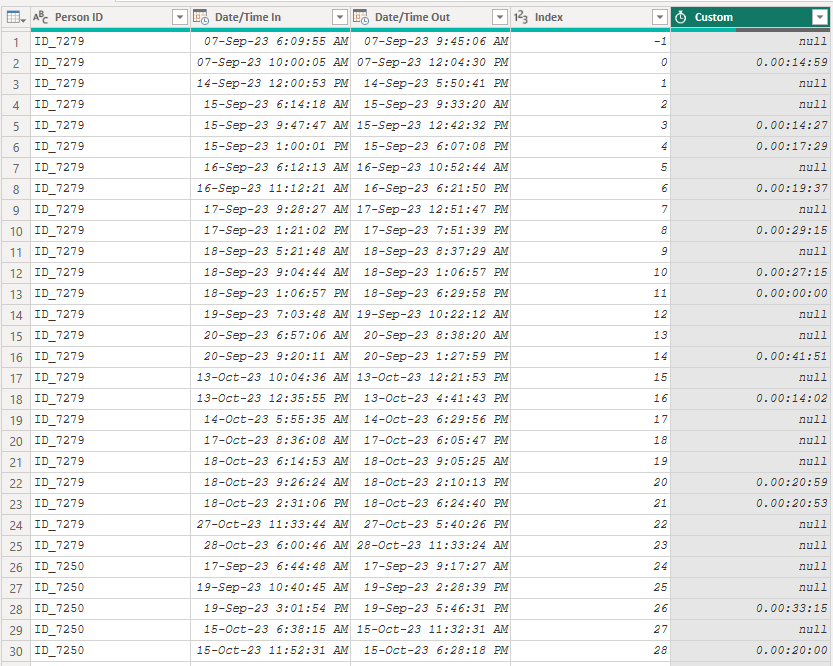

Hi @bocajpedersen, I know that this topic is few months old, but if you have big dataset - consider using this query (this one is much faster)

Result

let

fnShift = (tbl as table, col as text, shift as nullable number, optional newColName as text, optional _type as type) as table =>

//v 3. parametri zadaj zaporne cislo ak chces posunut riadky hore, kladne ak dole, 4. je nepovinny (novy nazov stlpca), 5. je nepovinny typ

let

a = Table.Column(tbl, col),

b = if shift = 0 or shift = null then a else if shift > 0

then List.Repeat({null}, shift) & List.RemoveLastN(a, shift)

else List.RemoveFirstN(a, shift * -1) & List.Repeat({null}, shift * -1),

c = Table.FromColumns(Table.ToColumns(tbl) & {b}, Table.ColumnNames(tbl) &

( if newColName <> null then {newColName} else

if shift = 0 then {col & "_Duplicate"} else

if shift > 0 then {col & "_PrevValue"}

else {col & "_NextValue"} )),

d = Table.TransformColumnTypes(c, {List.Last(Table.ColumnNames(c)), if _type <> null then _type else type any})

in

d,

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("fZW7jt0wDER/Jbj1XoCiSD1Yp0mVIuVikSJInyL/jwztDSxSuwZcGAczFs2XXl8f377+7Nzn4+VB/fnj958nE9cvzWiaaqLTRI3a4+3lM18hIzzZWNhIrFJyFokSMq0Zd1MyKdmpa6xFrIxEp9VqvJ0YFNLxJIo4hK3yjbHU4ydLxsOoG43sbCFUtlITRdIUp8qNsRR3csl4gCFBlxPvkIRKipiMRKchsdzziUHBwxWRIggtnrTPjcgOYiLOeLqzzuwcl0TdeIa60GEVod75pjcX0hepV6mZbqF+JEkYP45ot0LOS4I61/dQZygko76cyxElgkczFk/3mp3didFDrSVj9HCzmgeEaW0B7T62keK4sQ/IqkALkJWSqNcXQ7kVpD6///p7bQGMe8uYj26td06Mnp6LJ2B0MVpjc8olcRe8ib6XsuW86iVpnoeiiWLgKoe0bkZI9JAkPLyUZWRnW4/8vyRXiu8h33Ub5ihBsLo5uwdbtpL0S4JqN99NkfrK0g/GeayxojfPtbzQ6T7WGx+apxlLor6pyTfg50ZIajnbNWCkFVO+ZWeuLYBa09wockP11ncqIvVNTWFHHkZu6z8qukcS9Wat+1LmnhpAZ6K+5FHdbZSjBNfasesC7r5VON/MPNKR0hI9v8eSYx25zY9LK2Bfysc9+fYP", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Person ID" = _t, #"Date/Time In" = _t, #"Date/Time Out" = _t]),

Custom1 = if UseRepeatValue = "Yes" then Table.Repeat(Source, RepeatValue) else Source,

ChangedType = Table.TransformColumnTypes(Custom1,{{"Person ID", type text}, {"Date/Time In", type datetime}, {"Date/Time Out", type datetime}}),

Ad_DateIn = Table.AddColumn(ChangedType, "Date In", each DateTime.Date([#"Date/Time In"]), type date),

Ad_DateOut = Table.AddColumn(Ad_DateIn, "Date Out", each DateTime.Date([#"Date/Time Out"]), type date),

// Added [Date/Time Out_PrevValue] into inner [All] table

GroupedRows = Table.Group(Ad_DateOut, {"Person ID", "Date In", "Date Out"}, {{"All", each fnShift(_, "Date/Time Out", 1, null, type datetime), type table}}),

CombinedAll = Table.Combine(GroupedRows[All]),

Ad_TimeSincePreviousEntray = Table.AddColumn(CombinedAll, "Time Since Previous Entry", each [#"Date/Time In"] - [#"Date/Time Out_PrevValue"], type duration),

RemovedColumns = Table.RemoveColumns(Ad_TimeSincePreviousEntray,{"Date/Time Out_PrevValue", "Date In", "Date Out"})

in

RemovedColumns- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@bocajpedersen

Create a blank Query, go to the Advanced Editor, clear the existing code, and paste the codes give below and follow the steps.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("fZXLbhsxDEV/xfA6RiVK1INrb7xKgXZnBEVReNFF06J1/j+XMylGpJIBZmEf32tx+NL1evx8+/vv9/Phcj4+HM/f77dPX3/+uh0uz+br48v9+PRwPV7O3yrVjt9CPX25/TlRoHQoErowO9ols4Sy44tBAh5vjCQhSwrOGbOVBOHkcRUOkqN38hhrzBKbo11SEppONIpc8TiKODJJoh1jTMtLRo+bhCqheWcxoZLE5CiSxjg17xhjVCdFjxsYErQ58RkSU8mcJTdHuyCxVP2JRkFNFZYiCI6atI+NyA5iCuRxV2fq3tk2CatxDXWgTRJC3fN1bS6kz1KtUhGeQn1P4jBeHNFOheybBHVOb6F2U0hCfcmXw0oyHvY4a7rH7MxOjB5qnT1GDxdJfkAojC3AVcfWUhzX5gEZFWiBIDE6qvXFUE4FSafHH/dtC2Dci8e0dGvac2L0eF08BqOL0RqTM28SdcHr6Fspi88rb5KieYjsKAYukUnrZISEF4nDTUsZm3eW8cj/S3Kk+D/kO03DbCUIlidn1WDjVJK6SVDtorvJUl1Z/M44tzFW9Oa6lgfa1Ue840PzFKHsqG7qoBvwYyMkKa7tajDSiimfstPHFkCtQ58ochPSrm9VWKqbOpgduRipjO/I6J7sqDZrmpcyVdcA3B3VJY/qTqNsJbjWll1ncNWtQv5mpuaOzMXR9f8o+1ibb/Pl0jJYl/JyTz69Ag==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"(blank)" = _t, #"(blank).1" = _t, #"(blank).2" = _t]),

#"Promoted Headers" = Table.PromoteHeaders(Source, [PromoteAllScalars=true]),

#"Changed Type" = Table.TransformColumnTypes(#"Promoted Headers",{{"Person ID", type text}, {"Date/Time In", type datetime}, {"Date/Time Out", type datetime}}),

#"Sorted Rows" = Table.Sort(#"Changed Type",{{"Person ID", Order.Descending}, {"Date/Time In", Order.Ascending}, {"Date/Time Out", Order.Ascending}}),

#"Added Index" = Table.AddIndexColumn(#"Sorted Rows", "Index", -1, 1, Int64.Type),

#"Added Custom" =

Table.AddColumn(

#"Added Index", "Custom", each

if [Index] >= 0 then

let

__id = #"Added Index"{[Index]}[Person ID],

__pDate = #"Added Index"{[Index]}[#"Date/Time Out"],

__cdate = [#"Date/Time In"]

in

if __id = [Person ID] and

Date.From(__pDate) = Date.From(__cdate)

then

Duration.From(__cdate-__pDate)

else

null else null, type duration

)

in

#"Added Custom"

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

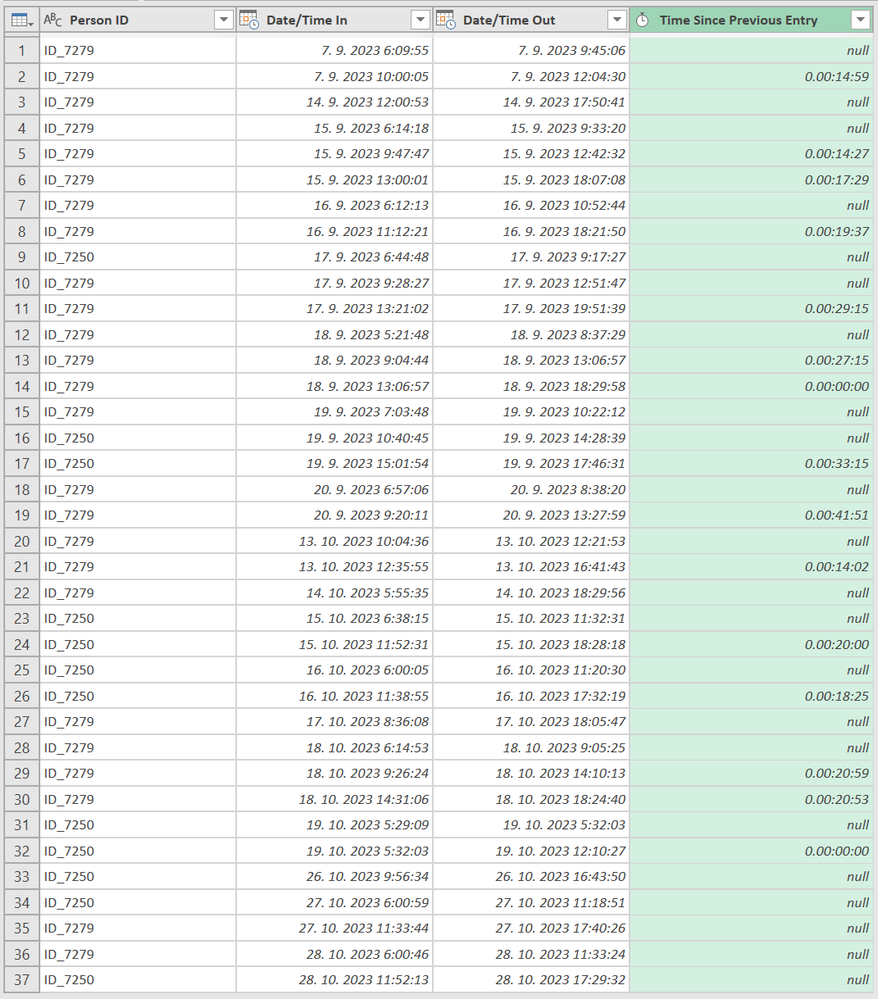

Thanks for the quick response.

I am trying to calculate the time from swiping off, and then returning to site to swipe back on - not just 24-onsite_time.

| Person ID | Date/Time In | Date/Time Out |

| ID_7279 | 07-Sep-2023 6:09:55 | 07-Sep-2023 9:45:06 |

| ID_7279 | 07-Sep-2023 10:00:05 | 07-Sep-2023 12:04:30 |

| ID_7279 | 14-Sep-2023 12:00:53 | 14-Sep-2023 17:50:41 |

| ID_7279 | 15-Sep-2023 6:14:18 | 15-Sep-2023 9:33:20 |

| ID_7279 | 15-Sep-2023 9:47:47 | 15-Sep-2023 12:42:32 |

| ID_7279 | 15-Sep-2023 13:00:01 | 15-Sep-2023 18:07:08 |

| ID_7279 | 16-Sep-2023 6:12:13 | 16-Sep-2023 10:52:44 |

| ID_7279 | 16-Sep-2023 11:12:21 | 16-Sep-2023 18:21:50 |

| ID_7250 | 17-Sep-2023 6:44:48 | 17-Sep-2023 9:17:27 |

| ID_7279 | 17-Sep-2023 9:28:27 | 17-Sep-2023 12:51:47 |

| ID_7279 | 17-Sep-2023 13:21:02 | 17-Sep-2023 19:51:39 |

| ID_7279 | 18-Sep-2023 5:21:48 | 18-Sep-2023 8:37:29 |

| ID_7279 | 18-Sep-2023 9:04:44 | 18-Sep-2023 13:06:57 |

| ID_7279 | 18-Sep-2023 13:06:57 | 18-Sep-2023 18:29:58 |

| ID_7279 | 19-Sep-2023 7:03:48 | 19-Sep-2023 10:22:12 |

| ID_7250 | 19-Sep-2023 10:40:45 | 19-Sep-2023 14:28:39 |

| ID_7250 | 19-Sep-2023 15:01:54 | 19-Sep-2023 17:46:31 |

| ID_7279 | 20-Sep-2023 6:57:06 | 20-Sep-2023 8:38:20 |

| ID_7279 | 20-Sep-2023 9:20:11 | 20-Sep-2023 13:27:59 |

| ID_7279 | 13-Oct-2023 10:04:36 | 13-Oct-2023 12:21:53 |

| ID_7279 | 13-Oct-2023 12:35:55 | 13-Oct-2023 16:41:43 |

| ID_7279 | 14-Oct-2023 5:55:35 | 14-Oct-2023 18:29:56 |

| ID_7250 | 15-Oct-2023 6:38:15 | 15-Oct-2023 11:32:31 |

| ID_7250 | 15-Oct-2023 11:52:31 | 15-Oct-2023 18:28:18 |

| ID_7250 | 16-Oct-2023 6:00:05 | 16-Oct-2023 11:20:30 |

| ID_7250 | 16-Oct-2023 11:38:55 | 16-Oct-2023 17:32:19 |

| ID_7279 | 17-Oct-2023 8:36:08 | 17-Oct-2023 18:05:47 |

| ID_7279 | 18-Oct-2023 6:14:53 | 18-Oct-2023 9:05:25 |

| ID_7279 | 18-Oct-2023 9:26:24 | 18-Oct-2023 14:10:13 |

| ID_7279 | 18-Oct-2023 14:31:06 | 18-Oct-2023 18:24:40 |

| ID_7250 | 19-Oct-2023 5:29:09 | 19-Oct-2023 5:32:03 |

| ID_7250 | 19-Oct-2023 5:32:03 | 19-Oct-2023 12:10:27 |

| ID_7250 | 26-Oct-2023 9:56:34 | 26-Oct-2023 16:43:50 |

| ID_7250 | 27-Oct-2023 6:00:59 | 27-Oct-2023 11:18:51 |

| ID_7279 | 27-Oct-2023 11:33:44 | 27-Oct-2023 17:40:26 |

| ID_7279 | 28-Oct-2023 6:00:46 | 28-Oct-2023 11:33:24 |

| ID_7250 | 28-Oct-2023 11:52:13 | 28-Oct-2023 17:29:32 |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @bocajpedersen ,

Here's my approach

1. Add a column subtracting Out - In , as duration.

2. Add a column extracting either date ( assuming both are always the same)

3. Group by person Id and date, aggregation is sum of the duration. ( This should be on site time)

4. Could negate 24 hours from this to get offsite time

If this doesn't resolve the issue , Kindly post the raw data in a table/ readable query format with any other considerations while masking sensitive data.

If my response(s) assisted you in any way, don't forget to drop me a Kudos 🙂

Kind Regards,

Avinash