transpose row to column: uneven data source

03-25-2019

11:28 AM

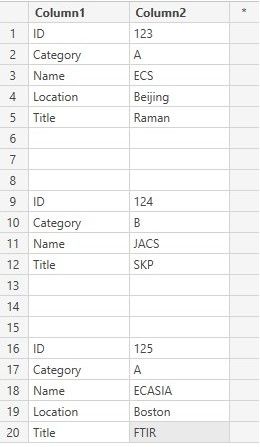

My source database looks as follows after import. Can I transpose it in Power Query Editor in a way that the new column names become ID, Category, Name, Location, Title?

If Category is A, I have an additional line (Location).

1 ACCEPTED SOLUTION

Anonymous

03-25-2019

02:04 PM

Here is code that you can copy and paste into a blank query for reference. I am sure there is a more efficient way of doing it:

let
    // Sample data embedded as compressed JSON; replace this step with your own source
    Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45W8nRR0lEyNDJWitWJVnJOLElNzy+qBAo5ggX8EnNTgRxX52Aw1yc/ObEkMz8PKOSUmpmVmZcOFg7JLCnJAakLSsxNzAMLATlYaah1JujWOSFb5+UItQ9ucLB3ADHGmhLwhWOwpyOGR/KLS/LzUK1zC/EMUoqNBQA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t]),
    #"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", type text}, {"Column2", type text}}),
    // Drop rows whose value is null or empty
    #"Filtered Rows" = Table.SelectRows(#"Changed Type", each ([Column2] <> null)),
    #"Filtered Rows1" = Table.SelectRows(#"Filtered Rows", each ([Column2] <> "")),
    // A row starts a new record when Column2 parses as a positive number (the ID)
    #"Added Custom" = Table.AddColumn(#"Filtered Rows1", "ID", each try Number.FromText([Column2]) > 0),
    #"Expanded ID" = Table.ExpandRecordColumn(#"Added Custom", "ID", {"Value"}, {"ID.Value"}),
    #"Changed Type1" = Table.TransformColumnTypes(#"Expanded ID",{{"ID.Value", type text}}),
    #"Added Custom1" = Table.AddColumn(#"Changed Type1", "Temp ID", each if [ID.Value] = "true" then [Column2] else null),
    #"Removed Columns" = Table.RemoveColumns(#"Added Custom1",{"ID.Value"}),
    #"Changed Type2" = Table.TransformColumnTypes(#"Removed Columns",{{"Temp ID", Int64.Type}}),
    // Copy each ID down to the rows that belong to it
    #"Filled Down" = Table.FillDown(#"Changed Type2",{"Temp ID"}),
    // Turn the labels in Column1 into column headers, one row per Temp ID
    #"Pivoted Column" = Table.Pivot(#"Filled Down", List.Distinct(#"Filled Down"[Column1]), "Column1", "Column2"),
    #"Removed Columns1" = Table.RemoveColumns(#"Pivoted Column",{"Temp ID"})
in
    #"Removed Columns1"
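A shorter variant of the same fill-down-and-pivot idea might look like the sketch below. It is not the accepted answer's code. It assumes that each record starts with a row whose Column1 is the literal label "ID", which is inferred from the requested column names and not confirmed by the screenshot. The inline `#table` sample, its values, and the step names `Tagged`, `Filled`, `Pivoted`, `Result` and the helper column `GroupID` are all hypothetical.

```powerquery
let
    // Hypothetical sample standing in for the imported two-column table
    Source = #table(
        {"Column1", "Column2"},
        {
            {"ID", "123"}, {"Category", "A"}, {"Name", "Alice"}, {"Location", "NY"}, {"Title", "Manager"},
            {"ID", "456"}, {"Category", "B"}, {"Name", "Bob"}, {"Title", "Engineer"}
        }
    ),
    // Assumption: a row labelled "ID" marks the start of a new record
    Tagged = Table.AddColumn(Source, "GroupID", each if [Column1] = "ID" then [Column2] else null, type nullable text),
    // Copy the record key down to the rows that follow it
    Filled = Table.FillDown(Tagged, {"GroupID"}),
    // Labels in Column1 become headers; records without a Location row get null there
    Pivoted = Table.Pivot(Filled, List.Distinct(Filled[Column1]), "Column1", "Column2"),
    Result = Table.RemoveColumns(Pivoted, {"GroupID"})
in
    Result
```

Keying on the label rather than on whether the value parses as a number avoids the try/expand/retype steps, but it only works if the "ID" label is present and spelled consistently in the source.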
