- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Earn a 50% discount on the DP-600 certification exam by completing the Fabric 30 Days to Learn It challenge.

- Power BI forums

- Forums

- Get Help with Power BI

- DAX Commands and Tips

- Re: get Volume measure Correct - Variance analysis...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

get Volume measure Correct - Variance analysis with parents hierarchies

Hello all,

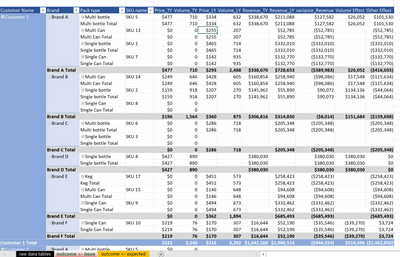

I am building a variance analysis (Price-Volume-Mix-otherA-otherB Effects breakdown) with referencing 3 dimension tables:

- dDate: Calendar table (Month from the picture below)

- dCustomer: Customer table (Customer name from the picture below)

- dSKU: Product table (Product Brand, Product Packaging, Product Name from the picture below)

and the fact table fSales

The objective is to build dynamic measures for each of the P-V-M effects, and it will add up according to what dimensions are applied. I am having issue with the Volume Effect.

The requirement for the Volume Effect is as follow:

1a. If product is new (i.e. Volume_LY = 0) -> Volume Effect = Volume_TY * Price_TY

1b. otherwise (i.e. existing products or delisted products) -> Volume Effect = (Volume_TY - Volume_LY) * Price LY

2. The effects sums up at sub-total or grand total level of each hierarchy

Here is the DAX I used to create the calcluated measure for the Volume Effect:

Volume_Effect :=

SUMX (

SUMMARIZE ( fSales, fSales[Customer Name], fSales[Product Name] ),

IF (

[Volume_LY] = 0,

[Volume_TY] * [Price_TY],

[Volume_TY - Volume_LY] * [Price_LY]

)

)However the problem is, the DAX doesn't work for delisted products (i.e. Volume_TY = 0).

The issue seems to be for delisted items have blank records under "TY", hence the DAX cannot capture the corresponding row. How do I fix the DAX to "look into" sales data for both years?

The calculated measure has to satisfy all of the 3 below:

1. Summation of the Volume Effect at parent total

(i.e. sum of product 1, 2..., n Volume effect = pack A's Volume effect; sum of pack A, B..., n Volume effect = Brand A's effect; and so on)

2. The Volume Effect is dynamic on all dDate, dCustomer, dProduct tables.

3. It cannot be a balancing effect (Revenue difference - sum of the rest Effects), since there is another effect treated as balancing effect already.

Attached the sample file for your reference.

Thanks in advance once again!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello,

Did you try to use the ISBLANK() function to replace blank values in your calculation with zero's?

for example;

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks Adrian. I've tried but still not working.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Do you mind sharing the pbix file?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Adrian,

I'm building all my DAX measures on Powerpivot, and then live connect to PowerBI.

Please find the sample file here: Example file

You will find the DAX measures under Data ->> Data Model / Powerpivot.

Thanks once again!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Something like this?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

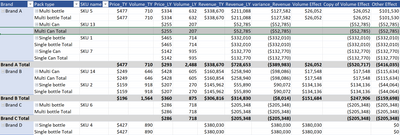

Hi, it's showing all the products now.

However the Volume Effect (Volume_TY - Volume_LY) * Price_LY is still not showing properly.

For instance, Customer 1's SKU 13 shall be showing Volume effect = -$52,785.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

What about this?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @avanderschilden , yes! Column Copy of Volume Effect looks exactly what I needed. Appreciate if you may share your approach on the solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

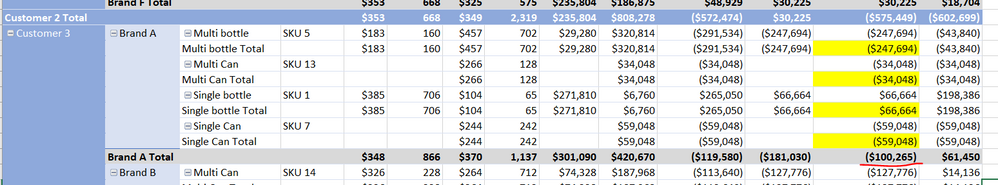

Hello Adrian,

Thanks again for your help. Sorry but just realized the sub-totals are not summing up the Volume Effect properly.

For instance from picture below: Under Customer 3, Brand A Total's Volume Effect shall be -$274,126 (Multi bottle Total + Multi Can Total + Single bottle Total + Single Can Total), but not -$100,265. Hence I tried to implied SUMX at the beginning of the formula.

Thanks once again!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

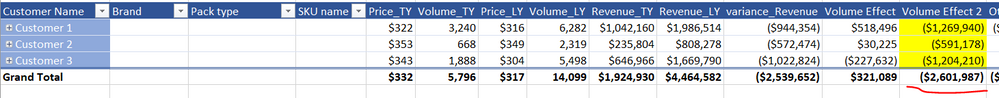

Hi @avanderschilden ,

Thanks once again. However still two issues remained unsovled:

i) The sub-total on Pack Types are missing (e.g. row Multi bottle Total)

ii) the sub-total on the Customer level wasn't adding up properly. The Volume Effect shall be dynamic on all dDate, dCustomer, dProduct tables. E.g. For picture below, the Grand Total shall be adding up all Customer 1, 2, 3's sub-totals and showing -$3,065, 328

I've revised your formula as follow using a nested SUMX

Volume Effect := SUMX ( dCustomer, SUMX ( dSKU, ( [Volume_TY] - [Volume_LY] ) * [Price_TY] ) )

This solves issue (ii) the dynamic summation of sub-totals. However issue (i) is yet not addressed.

Another downside of using nested SUMX can also lead to poor performance issue since this might not be the most efficient DAX measure (the actual dataset I'm using can easily goes beyond million rows of data.

Any thoughts? Thanks once again!!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello, any thoughts?

Helpful resources

| User | Count |

|---|---|

| 50 | |

| 23 | |

| 18 | |

| 18 | |

| 14 |

| User | Count |

|---|---|

| 91 | |

| 85 | |

| 46 | |

| 28 | |

| 21 |