Web Scraping Project

Hi all,

I've built a simple scraper that pulls pricing data so I don't have to copy and paste it manually. Currently each query points to one product URL, which I set by changing the "Source" step of the query. Is there a way to create a table of URLs and have a single query go through each of them and extract the source code data, instead of keeping a separate query for each product page and appending them?

It would save me considerable copy/paste time if I can achieve this.

Thanks

Hi @Anonymous

I assume you have something like this.

let

Source = Web.BrowserContents("https://abc.com/?page=12"),

// Your additional transformation steps go below

.

...

....

#"LastStep" = ....

in

#"LastStep"

You then have other URLs that need the same steps applied. In that case, follow the steps below.

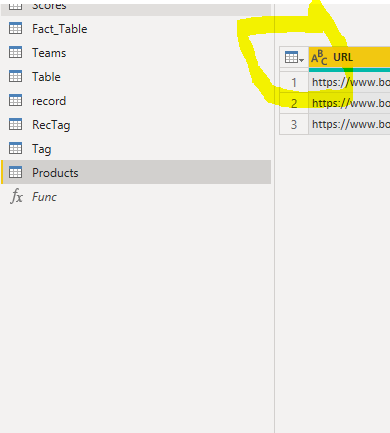

- Create a new table with a single column (URL) containing all the URLs you want. Let's call this table Products.

- Now change the query above as shown below. This turns it into a custom function.

(url as text) =>

let

Source = Web.BrowserContents(url), // Replace the hardcoded URL with the url parameter

// Your additional transformation steps go below

.

...

....

#"LastStep" = ....

in

#"LastStep"

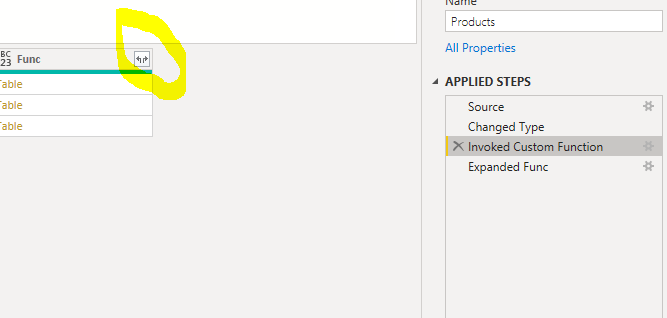

- Go to the Products table created in step 1. Click the table icon in the top-left corner of the table.

- Then choose Invoke Custom Function.

- Under Function query, choose the function created in step 2.

- For url, select the column name URL and click OK.

- Then click the expand icon in the header of the new column.

That's all you need, I hope.
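For reference, the steps above can also be written out directly in M. This is only a minimal sketch under assumptions: the second URL, the function name `GetProductData`, and the placeholder `PageLength` column are all hypothetical, and the placeholder transformation stands in for your own scraping steps.

```powerquery
let
    // Hypothetical Products table with a single URL column; the URLs are placeholders.
    Products = #table(
        type table [URL = text],
        {
            {"https://abc.com/?page=12"},
            {"https://abc.com/?page=13"}
        }
    ),

    // Stand-in for the custom function from step 2 (the name is an assumption).
    GetProductData = (url as text) as table =>
        let
            Source = Web.BrowserContents(url),
            // Placeholder transformation: replace with your own scraping steps.
            Result = #table(type table [PageLength = number], {{Text.Length(Source)}})
        in
            Result,

    // What Invoke Custom Function generates: call the function once per row.
    Invoked = Table.AddColumn(Products, "Data", each GetProductData([URL]), type table),

    // What the expand icon generates: flatten the nested tables into columns.
    Expanded = Table.ExpandTableColumn(Invoked, "Data", {"PageLength"})
in
    Expanded
```

In a real model the function would normally live in its own query, as described in step 2. It is inlined here only so the sketch is self-contained.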

Appreciate with kudos by clicking the like button on bottom right.

Please mark as a solution if this solves your problem.

Thanks

Hi @Anonymous ,

as @Anonymous said.

But if you want to regularly refresh the results in the Power BI service, you have to move the dynamic part of the URLs into the query parameters instead. Otherwise you'll get an error complaining about dynamic data sources: https://www.thebiccountant.com/2018/03/22/web-scraping-2-scrape-multiple-pages-power-bi-power-query/
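One commonly used pattern for this is sketched below. It is not necessarily the exact approach from the linked post. It assumes you can switch from `Web.BrowserContents` to `Web.Contents`, and that only the `page` query parameter differs between product pages. The base URL, the page values, and the name `GetPage` are hypothetical.

```powerquery
let
    // Hypothetical page identifiers; only this part varies between requests.
    Pages = {"12", "13"},

    // The base URL stays a static literal, and the varying part is passed
    // through the Query option of Web.Contents.
    GetPage = (pageNumber as text) as text =>
        Text.FromBinary(
            Web.Contents("https://abc.com", [Query = [page = pageNumber]])
        ),

    // One row per page, holding the raw HTML for further transformation.
    Result = Table.FromRecords(
        List.Transform(Pages, (p) => [Page = p, Html = GetPage(p)])
    )
in
    Result
```

Because the first argument to `Web.Contents` is a fixed string, the service can identify the data source without evaluating the per-row values. Note that `Web.Contents` returns the raw response rather than browser-rendered HTML, so pages that rely on JavaScript may return different content.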

Imke Feldmann (The BIccountant)

If you liked my solution, please give it a thumbs up. And if I did answer your question, please mark this post as a solution. Thanks!

Hi @Anonymous, you can create a Power Query function and a table of URLs, then iterate over those URLs to get the data.

Thanks.