Jumpstart your career with the Fabric Career Hub

Find everything you need to get certified on Fabric—skills challenges, live sessions, exam prep, role guidance, and a 50 percent discount on exams.

Get started- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Earn a 50% discount on the DP-600 certification exam by completing the Fabric 30 Days to Learn It challenge.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Performance Issues with Group By Clause

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Performance Issues with Group By Clause

Hello Everyone,

I am currently experiencing a performance issue on using a GROUP BY clause while transforming data in Power Query Editor in Power BI.

Scenario:

I have a very large table worth 50 million rows. (can't share data due to sensitivity)

I am working on creating a Dimension table for my data model by merging the source table say Project Table with a 2nd table say Team table; to extract team members information.

Now a single Project Id can result into multiple team members information - therefore I end up with multiple rows for a Project Id in my source table after the merge operation.

Now what I really need is - combine these multiple rows into a single row and get team members information in a single row that are separated by a delimiter; against a project Id.

I know I can do this by using Group BY with a combination of Text.Combine() function.

Challenge:

Now the challenge here is - Project table has nearly 50 million rows. After merge with Teams table, I end up with somewhere around 60 million rows. Applying a Group BY transformation on top of this is really creating a performance challenge for me and I am looking for an alternative way to do this transformation.

Questions:

- Is there an alternative way to carry out this GROUP BY transformation in an optimised way?

- Is creating this summarisation/grouping using DAX more optimised? (In my view it is not but looking to hear others's views)

- I am using a Dataflow to consume my data In Power BI and I am trying to do most of the transformations on the dataflow side in online Power Query Editor. But have limitations as the client doesn't have Premium licensing at their end. What is the best approach here then as I end up creating few computed entities which are only supported in power BI Premium?

I am looking forward to some suggestion on best approaches here and to get some tips around the solution implementation.

@parry2k @edhans @Greg_Deckler @MFelix @mwegener @nickyvv @amitchandak

Thanks In advance! Let me know if more details are needed here.

Best Regards,

Pragati Jain

LinkedIn | Twitter | Blog | YouTube

Did I answer your question? Mark my post as a solution! This will help others on the forum!

Appreciate your Kudos!!

Proud to be a Super User!!

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 groupby will be slow, I believe DAX will be much faster, Go ahead and try it.

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 groupby will be slow, I believe DAX will be much faster, Go ahead and try it.

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 What type is your original data source?

Can you already do the grouping on the team table before the join?

What are the cases in which you cannot avoid the computed entites?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @mwegener ,

Yes I do need computed entities here as I have just included 2 tables in my question, but I do have other tables as well.

I have actually cracked the solution to avoid computed entities in the Dataflow. This can be easily done by making sure which ever tables you are using to get this computed entity, they need to be disabled on load. This has actually solved this issue for me.

Thanks so much for your response 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 I would still lean towards keeping this in Power Query. You can do the concatenation (TEXT.COMBINE) in the Group By function. I just did this yesterday for a client.

But - performance wasn't great. Added 30min to the transformation. So I moved this to a Dataflow, and let it to the heavy lifting. Still takes about 30min, but it is offloaded from Power BI and doesn't impact your development. If you have other heavy transformations, if you move them to dataflows as well, they can run in parallel, and when you load Power BI data, it is just a straight load of materalized tables.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WSlTSUQpSitWJVkoCsgLALJCYF1zMAy6GkPWHsxzh6lzhrFA4KwSuLhwu5oxmXiqQ5QSX9QazkuEmpwBZfnB1wXBZT7gs0H2xAA==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Group = _t, Code = _t]),

#"Grouped Rows" =

Table.Group(

Source,

{"Group"},

{

{

"All Rows",

each Text.Combine(_[Code], ", "),

type table [Group=nullable text, Code=nullable text]

}

}

)

in

#"Grouped Rows"

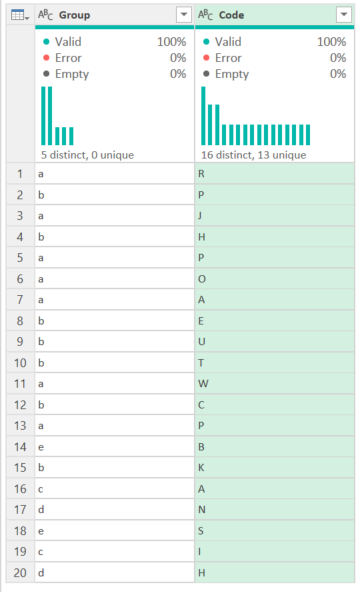

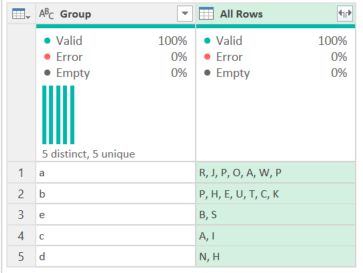

That code converts this:

to this in one step.

Given the amount of data you are talking about, pushing more back to the source can make the model perform better since the vertipaq engine can optimize the compression if the data needs to be in a column. If it just needs to be in a measure, then yeah, CONCATENATEX() will work - but you will have a lot more records as you didn't group before loading.

So.... it depends. 😁

How to use M code provided in a blank query:

1) In Power Query, select New Source, then Blank Query

2) On the Home ribbon, select "Advanced Editor" button

3) Remove everything you see, then paste the M code I've given you in that box.

4) Press Done

5) See this article if you need help using this M code in your model.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @edhans ,

thank you so much for getting back with this detailed approach.

I did actually try Text.Combine() m-code function but it was in a different way. Let me try using the m-code that you have shared above. I will let you know whether it works for me or not.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 If that is the case just use CONCATENATEX DAX function instead of doing group by in PQ

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

HI @parry2k ,

Does using DAX is good from performance point of view when compared to creating summarisation in Power Query editor?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Pragati11 Thanks for tagging. Just to clarify, you raw data contains two tables:

1 - Project Table with unique projects

2 - Team table with multiple team members for each projects

and above two tables can be joined on ProjectId which will be 1 to many relationship, correct?

And you want the output to show the Team members of each project separated by a comma, correct?

Please confirm when you get a chance.

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content