- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Filtering Duplicate Data

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Filtering Duplicate Data

I am importing data from SQL Server and noticed that my data is getting bloated due to duplicate data that is being loaded. Is there a way to filter out duplicates and keep only the most recent data? Is this suppose to be done in Power Query at the time of ETL? Below are data: Table 1 = the current data including duplicates / Table 2 = expected output

(Table 1 = the current data including duplicates)

| transaction_id | description | total | transaction_datetime |

| 1 | tacos | 10.00 | 2021-01-26 10:00:26.000 |

| 1 | tacos | 10.00 | 2021-01-26 10:20:52.000 |

| 2 | pizza | 6.00 | 2021-02-03 12:30:43.000 |

(Table 2 = expected output)

| transaction_id | description | total | transaction_datetime |

| 1 | tacos | 10.00 | 2021-01-26 10:20:52.000 |

| 2 | pizza | 6.00 | 2021-02-03 12:30:43.000 |

Any advice will be greatly appreciated.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

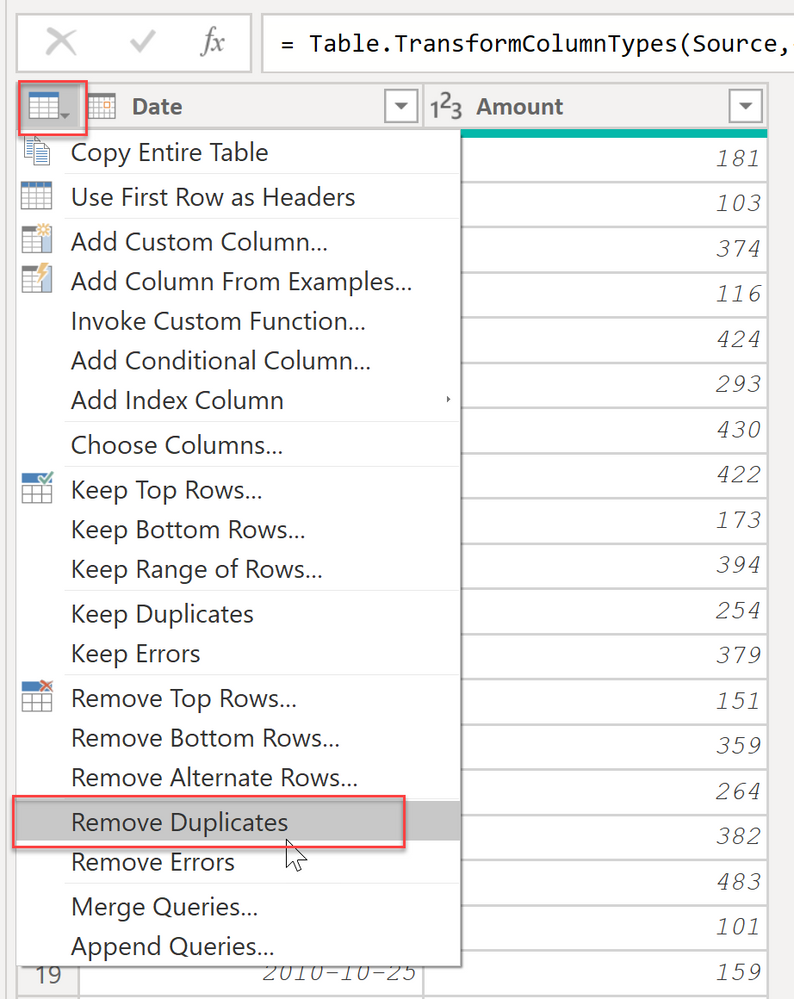

@win_anthony in power query, select all columns and then select remove duplicates.

Check my latest blog post Compare Budgeted Scenarios vs. Actuals I would ❤ Kudos if my solution helped. 👉 If you can spend time posting the question, you can also make efforts to give Kudos to whoever helped to solve your problem. It is a token of appreciation!

⚡Visit us at https://perytus.com, your one-stop-shop for Power BI-related projects/training/consultancy.⚡

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@win_anthony in power query, select all columns and then select remove duplicates.

Check my latest blog post Compare Budgeted Scenarios vs. Actuals I would ❤ Kudos if my solution helped. 👉 If you can spend time posting the question, you can also make efforts to give Kudos to whoever helped to solve your problem. It is a token of appreciation!

⚡Visit us at https://perytus.com, your one-stop-shop for Power BI-related projects/training/consultancy.⚡

Subscribe to the @PowerBIHowTo YT channel for an upcoming video on List and Record functions in Power Query!!

Learn Power BI and Fabric - subscribe to our YT channel - Click here: @PowerBIHowTo

If my solution proved useful, I'd be delighted to receive Kudos. When you put effort into asking a question, it's equally thoughtful to acknowledge and give Kudos to the individual who helped you solve the problem. It's a small gesture that shows appreciation and encouragement! ❤

Did I answer your question? Mark my post as a solution. Proud to be a Super User! Appreciate your Kudos 🙂

Feel free to email me with any of your BI needs.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@parry2k After reworking your suggestion, it ultimately worked out. The only difference with your solution and mine is that I selected the individual column to remove the duplicates. My initial concern was that it was going to remove stores or other legitimate duplicates.

Thank you so much for your support!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@parry2k thank you for your support. Unfortunately, I am still getting the same results. My thoughts are that power query is still keeping the duplicate values because the timestamp on the duplicates are unique (please look at my sample table 1 / transaction_id = 1).

Any other thoughts on how you would approach removing duplicates that have unique datetime stamps?

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 105 | |

| 94 | |

| 75 | |

| 63 | |

| 62 |

| User | Count |

|---|---|

| 137 | |

| 105 | |

| 104 | |

| 80 | |

| 63 |