Jumpstart your career with the Fabric Career Hub

Find everything you need to get certified on Fabric—skills challenges, live sessions, exam prep, role guidance, and more.

Get started- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Grow your Fabric skills and prepare for the DP-600 certification exam by completing the latest Microsoft Fabric challenge.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Identify Overlapping entries for the same empl...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Identify Overlapping entries for the same employee

Hi guys,

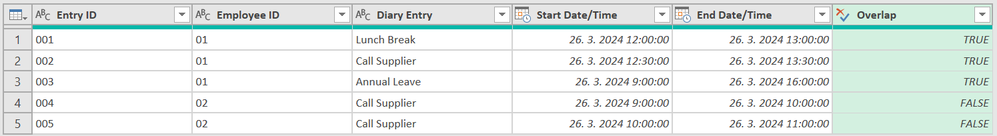

I have the below table (Table A) as my source data. I would like to use Power Query to flag whether or not the entry overlaps only if it is the same employee ID, using Start Date/Time and End Date/Time. For example Entry ID 001,002,003 (from Table A) all overlap for the same Employee ID 01.

I would like the results table to look like Table B (Below)

Thank you so much for all your help as usual!

Table A (Source)

| Entry ID | Employee ID | Diary Entry | Start Date/Time | End Date/Time |

| 001 | 01 | Lunch Break | 26/03/2024 12:00 | 26/03/2024 13:00 |

| 002 | 01 | Call Supplier | 26/03/2024 12:30 | 26/03/2024 13:30 |

| 003 | 01 | Annual Leave | 26/03/2024 09:00 | 26/03/2024 16:00 |

| 004 | 02 | Call Supplier | 26/03/2024 09:00 | 26/03/2024 10:00 |

| 005 | 02 | Call Supplier | 26/03/2024 10:00 | 26/03/2024 11:00 |

Table B (results)

| Entry ID | Employee ID | Diary Entry | Start Date/Time | End Date/Time | Overlap |

| 001 | 01 | Lunch Break | 26/03/2024 12:00 | 26/03/2024 13:00 | TRUE |

| 002 | 01 | Call Supplier | 26/03/2024 12:30 | 26/03/2024 13:30 | TRUE |

| 003 | 01 | Annual Leave | 26/03/2024 09:00 | 26/03/2024 16:00 | TRUE |

| 004 | 02 | Call Supplier | 26/03/2024 09:00 | 26/03/2024 10:00 | FALSE |

| 005 | 02 | Call Supplier | 26/03/2024 10:00 | 26/03/2024 11:00 | FALSE |

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

HI @threw001 . Here's the M Code to get your desired results.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMjAwVNJRAhM+pXnJGQpORamJ2UCekZm+gbG+kYGRiYKhkZWBAZqQMUgoVgdkgBHMAOfEnByF4NKCgpzM1CIMI4wxjTCGGWEMM8IxL680MUfBJzWxLBVVuYElpiPMEI4wAZlghN8R2IwwQBhhSoQREPWoQoZgI2IB", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Entry ID" = _t, #"Employee ID" = _t, #"Diary Entry" = _t, #"Start Date/Time" = _t, #"End Date/Time" = _t]),

#"Changed Type with Locale" = Table.TransformColumnTypes(Source, {{"Start Date/Time", type datetime}}, "en-GB"),

#"Changed Type with Locale1" = Table.TransformColumnTypes(#"Changed Type with Locale", {{"End Date/Time", type datetime}}, "en-GB"),

#"Changed Type" = Table.TransformColumnTypes(#"Changed Type with Locale1",{{"Entry ID", type text}, {"Employee ID", type text}, {"Diary Entry", type text}, {"Start Date/Time", type datetime}, {"End Date/Time", type datetime}}),

#"Added Custom" = Table.AddColumn(#"Changed Type", "Overlap", each let

currentEntryID = [#"Entry ID"],

currentEmployeeID = [#"Employee ID"],

currentStart = [#"Start Date/Time"],

currentEnd = [#"End Date/Time"],

otherEntries = Table.SelectRows(#"Changed Type", each [#"Employee ID"] = currentEmployeeID and [#"Entry ID"] <> currentEntryID),

overlapExists = List.AnyTrue(Table.TransformRows(otherEntries, each (_[#"Start Date/Time"] < currentEnd and _[#"End Date/Time"] > currentStart) or (_[#"End Date/Time"] > currentStart and _[#"Start Date/Time"] < currentEnd)))

in

overlapExists, type logical)

in

#"Added Custom"Did I answer your question? Mark my post as a solution!

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @threw001, different approach here.

Result

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMjAwVNJRAhM+pXnJGQpORamJ2UCekZm+gbG+kYGRiYKhkZWBAZqQMUgoVgdkgBHMAOfEnByF4NKCgpzM1CIMI4wxjTCGGWEMM8IxL680MUfBJzWxLBVVuYElpiPMEI4wAZlghN8R2IwwQBhhSoQREPWoQoZgI2IB", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Entry ID" = _t, #"Employee ID" = _t, #"Diary Entry" = _t, #"Start Date/Time" = _t, #"End Date/Time" = _t]),

ChangedType = Table.TransformColumnTypes(Source,{{"Start Date/Time", type datetime}, {"End Date/Time", type datetime}}, "sk-SK"),

Ad_DateTimes = Table.AddColumn(ChangedType, "DateTimes", each List.DateTimes([#"Start Date/Time"], Duration.TotalMinutes([#"End Date/Time"] - [#"Start Date/Time"]) +1, #duration(0,0,1,0)), type list),

GroupedRows = Table.Group(Ad_DateTimes, {"Employee ID"}, {{"All", each _, type table}}),

Ad_Helper = Table.AddColumn(GroupedRows, "Helper", each Table.AddColumn(Table.RemoveColumns([All], {"DateTimes"}), "All", (x)=> [All], type table), type table),

CombinedHelper = Table.Combine(Ad_Helper[Helper]),

// Removed First and Last time (because we would like to compare only matched times greater than 1 minute)

Ad_DateTimesCurrentEntry = Table.AddColumn(CombinedHelper, "DateTimes Current Entry", each List.RemoveLastN(List.Skip(List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] = [Entry ID])[DateTimes])), 1), type list),

// Same Employee

Ad_DateTimesOtherEntries = Table.AddColumn(Ad_DateTimesCurrentEntry, "DateTimes Other Entries", each List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] <> [Entry ID])[DateTimes]), type list),

Ad_Overlap = Table.AddColumn(Ad_DateTimesOtherEntries, "Overlap", each List.ContainsAny([DateTimes Current Entry], [DateTimes Other Entries]), type logical),

#"Removed Columns" = Table.RemoveColumns(Ad_Overlap,{"All", "DateTimes Current Entry", "DateTimes Other Entries"})

in

#"Removed Columns"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @threw001, different approach here.

Result

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMjAwVNJRAhM+pXnJGQpORamJ2UCekZm+gbG+kYGRiYKhkZWBAZqQMUgoVgdkgBHMAOfEnByF4NKCgpzM1CIMI4wxjTCGGWEMM8IxL680MUfBJzWxLBVVuYElpiPMEI4wAZlghN8R2IwwQBhhSoQREPWoQoZgI2IB", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Entry ID" = _t, #"Employee ID" = _t, #"Diary Entry" = _t, #"Start Date/Time" = _t, #"End Date/Time" = _t]),

ChangedType = Table.TransformColumnTypes(Source,{{"Start Date/Time", type datetime}, {"End Date/Time", type datetime}}, "sk-SK"),

Ad_DateTimes = Table.AddColumn(ChangedType, "DateTimes", each List.DateTimes([#"Start Date/Time"], Duration.TotalMinutes([#"End Date/Time"] - [#"Start Date/Time"]) +1, #duration(0,0,1,0)), type list),

GroupedRows = Table.Group(Ad_DateTimes, {"Employee ID"}, {{"All", each _, type table}}),

Ad_Helper = Table.AddColumn(GroupedRows, "Helper", each Table.AddColumn(Table.RemoveColumns([All], {"DateTimes"}), "All", (x)=> [All], type table), type table),

CombinedHelper = Table.Combine(Ad_Helper[Helper]),

// Removed First and Last time (because we would like to compare only matched times greater than 1 minute)

Ad_DateTimesCurrentEntry = Table.AddColumn(CombinedHelper, "DateTimes Current Entry", each List.RemoveLastN(List.Skip(List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] = [Entry ID])[DateTimes])), 1), type list),

// Same Employee

Ad_DateTimesOtherEntries = Table.AddColumn(Ad_DateTimesCurrentEntry, "DateTimes Other Entries", each List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] <> [Entry ID])[DateTimes]), type list),

Ad_Overlap = Table.AddColumn(Ad_DateTimesOtherEntries, "Overlap", each List.ContainsAny([DateTimes Current Entry], [DateTimes Other Entries]), type logical),

#"Removed Columns" = Table.RemoveColumns(Ad_Overlap,{"All", "DateTimes Current Entry", "DateTimes Other Entries"})

in

#"Removed Columns"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you so much! This worked great and load times are much better!

I will verify results but looks good so far! Really appreciate your help 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, you're welcome. To be honest I spent some time with this query yesterday. I tried few different versions of code, but this one was the fastest. There should be a way to make it even faster, but someone else should help.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi - thanks again for all your efforts

The load time is actually pretty good for the data set I have! Works perfectly - I have tested the results 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

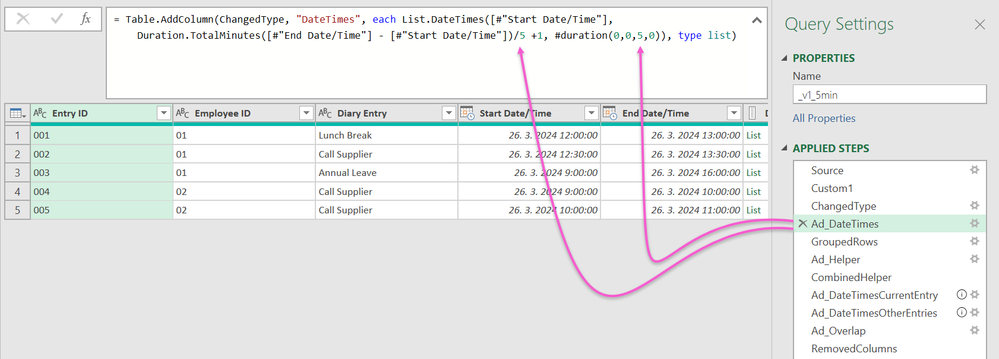

Hi again, query will be significantly faster if you use 5 minutes overlaping interval. Or maybe you can use 3 or 2 or even 10 or 15. It's up to you.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMjAwVNJRAhM+pXnJGQpORamJ2UCekZm+gbG+kYGRiYKhkZWBAZqQMUgoVgdkgBHMAOfEnByF4NKCgpzM1CIMI4wxjTCGGWEMM8IxL680MUfBJzWxLBVVuYElpiPMEI4wAZlghN8R2IwwQBhhSoQREPWoQoZgI2IB", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Entry ID" = _t, #"Employee ID" = _t, #"Diary Entry" = _t, #"Start Date/Time" = _t, #"End Date/Time" = _t]),

Custom1 = if UseRepeatValue = "Yes" then Table.Repeat(Source, RepeatValue) else Source,

ChangedType = Table.TransformColumnTypes(Custom1,{{"Start Date/Time", type datetime}, {"End Date/Time", type datetime}}, "sk-SK"),

Ad_DateTimes = Table.AddColumn(ChangedType, "DateTimes", each List.DateTimes([#"Start Date/Time"], Duration.TotalMinutes([#"End Date/Time"] - [#"Start Date/Time"])/5 +1, #duration(0,0,5,0)), type list),

GroupedRows = Table.Group(Ad_DateTimes, {"Employee ID"}, {{"All", each _, type table}}),

Ad_Helper = Table.AddColumn(GroupedRows, "Helper", each Table.AddColumn(Table.RemoveColumns([All], {"DateTimes"}), "All", (x)=> [All], type table), type table),

CombinedHelper = Table.Combine(Ad_Helper[Helper]),

// Removed First and Last time (because we would like to compare only matched times greater than 1 minute)

Ad_DateTimesCurrentEntry = Table.AddColumn(CombinedHelper, "DateTimes Current Entry", each List.RemoveLastN(List.Skip(List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] = [Entry ID])[DateTimes])), 1), type list),

// Same Employee

Ad_DateTimesOtherEntries = Table.AddColumn(Ad_DateTimesCurrentEntry, "DateTimes Other Entries", each List.Combine(Table.SelectRows([All], (x)=> x[Entry ID] <> [Entry ID])[DateTimes]), type list),

Ad_Overlap = Table.AddColumn(Ad_DateTimesOtherEntries, "Overlap", each List.ContainsAny([DateTimes Current Entry], [DateTimes Other Entries]), type logical),

RemovedColumns = Table.RemoveColumns(Ad_Overlap,{"All", "DateTimes Current Entry", "DateTimes Other Entries"})

in

RemovedColumns

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

HI @threw001 . Here's the M Code to get your desired results.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMjAwVNJRAhM+pXnJGQpORamJ2UCekZm+gbG+kYGRiYKhkZWBAZqQMUgoVgdkgBHMAOfEnByF4NKCgpzM1CIMI4wxjTCGGWEMM8IxL680MUfBJzWxLBVVuYElpiPMEI4wAZlghN8R2IwwQBhhSoQREPWoQoZgI2IB", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Entry ID" = _t, #"Employee ID" = _t, #"Diary Entry" = _t, #"Start Date/Time" = _t, #"End Date/Time" = _t]),

#"Changed Type with Locale" = Table.TransformColumnTypes(Source, {{"Start Date/Time", type datetime}}, "en-GB"),

#"Changed Type with Locale1" = Table.TransformColumnTypes(#"Changed Type with Locale", {{"End Date/Time", type datetime}}, "en-GB"),

#"Changed Type" = Table.TransformColumnTypes(#"Changed Type with Locale1",{{"Entry ID", type text}, {"Employee ID", type text}, {"Diary Entry", type text}, {"Start Date/Time", type datetime}, {"End Date/Time", type datetime}}),

#"Added Custom" = Table.AddColumn(#"Changed Type", "Overlap", each let

currentEntryID = [#"Entry ID"],

currentEmployeeID = [#"Employee ID"],

currentStart = [#"Start Date/Time"],

currentEnd = [#"End Date/Time"],

otherEntries = Table.SelectRows(#"Changed Type", each [#"Employee ID"] = currentEmployeeID and [#"Entry ID"] <> currentEntryID),

overlapExists = List.AnyTrue(Table.TransformRows(otherEntries, each (_[#"Start Date/Time"] < currentEnd and _[#"End Date/Time"] > currentStart) or (_[#"End Date/Time"] > currentStart and _[#"Start Date/Time"] < currentEnd)))

in

overlapExists, type logical)

in

#"Added Custom"Did I answer your question? Mark my post as a solution!

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks - This looks to be working but unable to verify all the results due to long loading time for query.

The query loads over 2gb of data although the excel file source I am using is below 10mb

Apologies, I should have provided more info - I am looking to run this query for a data set with around 10k lines of data. There are multiple entries for multiple employees - is there a way to speed up the query?

Thanks!