DAX filter performance issue

Hi

This is related to https://community.powerbi.com/t5/Desktop/Filter-table-based-on-less-than-and-greater-than-date-value...

This has been implemented, but I'm experiencing performance issues when dragging a field into my tablix visualisation.

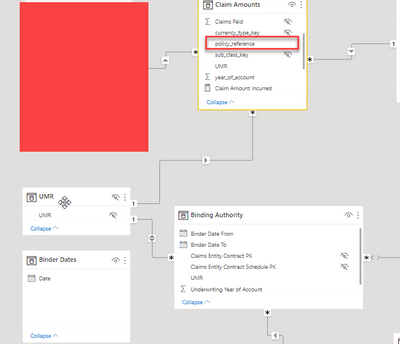

My data model:

The table 'Claim Amounts' has approx. 1.5m rows

I have a measure that sets a value based on the value selected in the Binder Dates[Date] slicer:

The problem I'm experiencing is that when I try to include Policy Reference in the below visual and apply a filter, the query just times out. I guess it's because I'm trying to apply a filter on 1.5m rows.

Is there another solution to this? This works just fine except for the performance issue.

@brian0782 can you rewrite this IF statement so that the calculations are stored in variables? Using those variables might improve the performance.

Hi, I tried this but it didn't make much difference to performance:

You could try removing the bridge table and replacing it with a direct many-to-many relationship, with Binding Authority set to filter Claim Amounts.

My understanding is the engine should be more optimised for that.

Failing that, you need to change the grain of the Binding Authority table so each row represents a day. That makes the filter much simpler. I can share some code later. It sounds odd that adding more rows will speed it up, but it massively will.

Hi, I did have this as a many-to-many but had to change it to get a calculation working. I think when I had this as a direct many-to-many I was still experiencing performance issues.

I'd consider expanding the table with the dates so you have a row per day for each item:

Have a look at:

It takes a basic table with From and To dates and then, in Power Query:

1) Adds a Number of Days custom column:

Number.From( [Date To] - [Date From] ) + 1

2) Sets its data type to whole number.

3) Adds a custom column to contain a list of dates:

List.Dates([Date From], [Number of Days], #duration(1, 0, 0, 0))

4) Expands the list to new rows.

5) Removes the other columns.

6) Sets the date column's data type to date.
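The six steps above can be sketched as one Power Query (M) query. This is only a minimal sketch under assumptions, not the file referred to in this post: the inline sample table, the `UMR` key column and the step names are hypothetical, and only the `[Date From]`, `[Date To]` and `[Number of Days]` column names come from the steps themselves.

```powerquery
let
    // Hypothetical sample data standing in for the real From/To table
    Source = #table(
        {"UMR", "Date From", "Date To"},
        {
            {"A", #date(2020, 1, 1), #date(2020, 1, 3)},
            {"B", #date(2020, 2, 1), #date(2020, 2, 1)}
        }
    ),
    // Steps 1 and 2: inclusive day count per row, typed as a whole number
    AddedDays = Table.AddColumn(
        Source,
        "Number of Days",
        each Number.From([Date To] - [Date From]) + 1,
        Int64.Type
    ),
    // Step 3: one list of consecutive dates per row
    AddedDates = Table.AddColumn(
        AddedDays,
        "Date",
        each List.Dates([Date From], [Number of Days], #duration(1, 0, 0, 0))
    ),
    // Step 4: expand each list so every date becomes its own row
    Expanded = Table.ExpandListColumn(AddedDates, "Date"),
    // Step 5: keep only the key (assumed to be UMR) and the new date column
    Selected = Table.SelectColumns(Expanded, {"UMR", "Date"}),
    // Step 6: set the date column's data type to date
    Typed = Table.TransformColumnTypes(Selected, {{"Date", type date}})
in
    Typed
```

With the two sample rows, the result has four rows: three dates for "A" and one for "B". Each date in a range then matches by simple equality instead of a less-than/greater-than comparison.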

I think you can use this to massively increase the speed of the filter on that table.

If you're then still having performance issues, the only other thing I can think of is to move the filter directly in DAX by getting VALUES ( 'Binding Authority'[UMR] ) and using TREATAS within CALCULATE to move it directly over to your big table.

So I have expanded out the dates and this has massively helped! Performance is hugely improved and the query no longer times out.

I would never have thought adding extra rows would improve this.

The only drawback I would say is that this would only work with a single select. I'm trying to think of a situation where a user might want to select multiple dates, but at the moment this is working.

Many thanks
