visual has exceeded available resources

Hi All,

I've been using Power BI for the better part of a year now. For the last month and a half or so, 6 of our 14 tile visualizations have intermittently failed to refresh. The failures are inconsistent: on some days certain tiles of the 6 refresh, and on others they don't. The dashboard in question contains nothing more than 14 pinned single-data-point cards. They are simple queries against our Azure SQL Database, and on the database all of the queries return immediately. All of my visualizations are set to connect live to our Azure database. I'm at a loss for what to do. It seems like an issue with resources on Microsoft's servers. Here's the error I get:

Please try again later or contact support. If you contact support, please provide these details.

Hi @jgarciabu,

Yes, the issue occurs when a visual has attempted to query too much data for the server to complete the result with the available resources.

As the error message suggests, try filtering the visual to reduce the amount of data in the result.

Regards

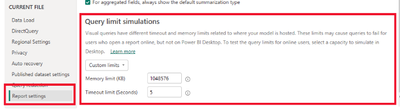

To be clear: you can indeed stop this problem happening in Power BI Desktop by changing the "Query limit simulations" settings (see this blog post for more details), but that only stops the problem happening in Power BI Desktop. You also need to care about what happens after you publish your report to the Power BI Service and it's not so easy to change settings there to avoid the error (see this older post of mine, a companion to the previous post I mentioned, too).

Looking at the other posts on this thread I have some general suggestions for people running into this problem:

- If you're using DirectQuery mode you probably shouldn't be. About 90% of the people I see who are using DirectQuery have made the wrong choice and should be using Import mode instead - it's almost always faster and easier to tune. If you do need to use DirectQuery this recording of a user group presentation I gave on DirectQuery best practices might be useful.

- If you're using Import mode with a relatively small amount of data - I see people here with only a few million rows of data - then it's almost certain that the problem is either the way you have modelled your data or something you are doing in the DAX for a measure. It's hard to give more specific advice because there are so many things that can go wrong, but for example the DAX antipattern of filtering on a whole table in CALCULATE can cause huge memory spikes which lead to errors even on fairly small models. There are a lot (too many?) resources out there on how to tune your reports but this is probably a good place to start.
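To illustrate the CALCULATE antipattern mentioned above, here is a minimal sketch. The table and column names (`Sales`, `Sales[Amount]`, `Sales[Color]`) are hypothetical and do not come from anyone's model in this thread. The two definitions are separate measures, each entered on its own.

```dax
-- Hypothetical model: a Sales table with Amount and Color columns.

-- Antipattern: FILTER iterates the whole Sales table, so every column
-- of Sales becomes part of the filter passed to CALCULATE.
Red Sales Table Filter =
CALCULATE (
    SUM ( Sales[Amount] ),
    FILTER ( Sales, Sales[Color] = "Red" )
)

-- Usually cheaper: filter only the column that matters.
-- KEEPFILTERS keeps any existing filter on Sales[Color] in place,
-- which matches the behaviour of the FILTER version above.
Red Sales Column Filter =
CALCULATE (
    SUM ( Sales[Amount] ),
    KEEPFILTERS ( Sales[Color] = "Red" )
)
```

Whether this is what causes the error in a given report depends on the model, so treat it as one thing to check rather than a guaranteed fix.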

Chris Webb

I got this error message today with my SWITCH. Power BI doesn't allow over 50 conditions in the function. Update: I found Chris Webb's solution. I doubled the query limit in the report settings and it works.

**This may help**

Hi all,

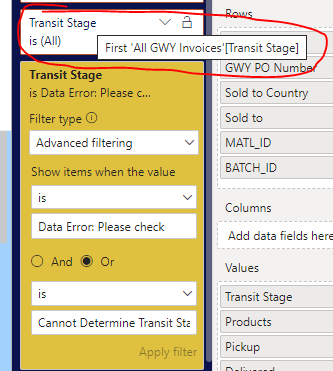

Had this error today in a matrix visual. I was pulling a column (Transit Stage) into the "Values" part of the matrix. I tried a simple filter on "Transit Stage" and got the "Resources exceeded" error.

I then realised I was filtering on the First [Transit Stage] value (see the circled filter in the screenshot), which was causing the issue, instead of filtering on the actual column itself.

Solution: I pulled the actual Transit Stage column itself into the visual filter, filtered on that, and it worked like a charm.

I had this issue, and in my case the problem was that the desktop machine running the gateway had run out of hard drive space. I cleaned it up and restarted the service, and then everything worked.

Hi All,

Is there a way to show an icon on the page that is visible only when the report shows the "visual has exceeded available resources" error, so that clicking the icon jumps to a default view where the icon is hidden?

I'm having the same error for one of the table visuals but the same visual works fine when I publish it to "My workspace". Is there a difference in how personal workspace capacity and Azure capacities are configured?

Can someone please provide the solution to the "exceeded the available resources" issue?

I can't work out what is wrong. I don't have large DAX calculations; I have only added 4-6 more columns to one or two tables. The other visuals on the tabs in Desktop have larger queries and measures, and they all run and refresh just fine. I am having the issue with just one visual, and it doesn't make sense why it would affect only that one.

I was able to get around this issue by identifying which table caused the slowdown. I turned off "Enable load" for that table, then referenced its result in a new table, which I loaded into the report. By keeping the most complex calculations in the background query and loading only its results, my report went from taking 20 minutes to return an error to returning accurate results within 5 seconds.

Hi,

Any updates on this topic yet?

I'm seeing something strange: when I use Publish to web for my report, I get the screenshot below as a result.

Only 1 of the 2 visuals is showing, and the other one gives the exceeded memory error. Strangely, both visuals (a table and a graph) use exactly the same data and filters (page-level filters).

I had 2 years of log files and have already filtered out 1 year with a report-level filter, but I still keep having this issue.

The biggest DAX that I have is my own calendar table. Beyond that, I have quite a few Power Query steps.

Any suggestions on what to do?

@Anonymous

I managed to get around my issue without really intending to. I was looking to improve the performance of my report and rewrote all of my DAX measures using variables. Even a measure as simple as just summing some column was written using a variable and returning the output. The performance of the dashboard was drastically improved and it resolved my issue with the exceeded memory error. SQLBI has a Date table publicly available that you can borrow if you have trouble rewriting your DAX for the table. It's actually a great date table. It has pretty much everything you could possibly want in a date table. Hope this helps!
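As a rough sketch of the variables approach described above: a variable is evaluated once where it is defined and can then be reused, so an expensive measure is not computed again for each comparison. `[TurnTime]` is a measure name that appears elsewhere in this thread; the measure name `Turn Time Band`, the thresholds and the labels are made up for illustration.

```dax
-- Hypothetical measure: thresholds and labels are illustrative only.
Turn Time Band =
VAR TT = [TurnTime]    -- evaluated once, then reused in every branch
RETURN
    SWITCH (
        TRUE (),
        ISBLANK ( TT ), BLANK (),
        TT < 10, "Fast",
        TT < 30, "Normal",
        "Slow"
    )
```

How much this helps will vary: the gain tends to come from measures that were previously referenced several times inside one expression, not from wrapping a single SUM in a variable.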

@cittakaro Sure. None of my DAX is too crazy and is probably pretty basic to someone who is an expert. There might be better ways to do most of what I did but it works and loads quickly so I got what I needed.

This is probably one of the more complicated measures I had in my report. I used to have everything here that you see as variables written into what is now the return statement. Sorry if it seems a bit like a jumbled mess. It's essentially just a series of nested if statements. After I started using variables, the performance of the report loading visuals was almost instantaneous rather than taking upwards of 10-15 seconds just to load a matrix/table visualization, if it loaded those visuals at all.

    EarnedTitleLevel# =
    VAR SurveylessthanTitle = [SurveyScoreGoal]<=values(TMD[Title Sort])
    VAR SClessthanTitle = [StatusCountGoal]<=values(TMD[Title Sort])
    VAR TTlessthanTitle = [TurnTimeGoal]<=values(TMD[Title Sort])
    VAR AnswerlessthanTitle = [AnswerRateGoal]<=values(TMD[Title Sort])
    VAR SurveylessthanSC = [SurveyScoreGoal]<=[StatusCountGoal]
    VAR SClessthanSurvey = [StatusCountGoal]<=[SurveyScoreGoal]
    VAR SurveylessthanTT = [SurveyScoreGoal]<=[TurnTimeGoal]
    VAR TTlessthanSurvey = [TurnTimeGoal]<=[SurveyScoreGoal]
    VAR SurveylessthanAnswer = [SurveyScoreGoal]<=[AnswerRateGoal]
    VAR AnswerlessthanSurvey = [AnswerRateGoal]<=[SurveyScoreGoal]
    VAR TTGoal = [TurnTimeGoal]
    VAR SurveyScore = [SurveyScoreGoal]
    VAR MinSurveys = [SurveyReceivedMin]
    VAR MinCR = [CRCountMin]
    VAR AnswerGoal = [AnswerRateGoal]
    VAR statusgoal = [StatusCountGoal]
    VAR CurrentTitle = values(TMD[Title Sort])
    RETURN
    if(values(TMD[Specialty])="Mainstream PS" || values(TMD[Specialty])="NY PS",
        if(isblank(TTGoal) || isblank(SurveyScore), CurrentTitle,
            if(MinCR = -1 || MinSurveys = -1,
                if(SurveylessthanTitle && SurveylessthanTT,SurveyScore,
                    if(TTlessthanTitle && TTlessthanSurvey,TTGoal,CurrentTitle)),
                if(SurveylessthanTT,SurveyScore,TTGoal))),
        if(values(TMD[Specialty])="Hunt PS",
            if(isblank(statusgoal) || isblank(SurveyScore), CurrentTitle,
                if(MinSurveys = -1 || [RONAgoal]=-1,
                    if(SurveylessthanTitle && SurveylessthanSC,SurveyScore,
                        if(SClessthanTitle && SClessthanSurvey,statusgoal,CurrentTitle)),
                    if(SurveylessthanSC,SurveyScore,statusgoal))),
            if(values(TMD[Specialty])="CCS" || values(TMD[Specialty])="Escalation CCS",
                if(isblank(statusgoal) || isblank(SurveyScore), CurrentTitle,
                    if(MinSurveys = -1,
                        if(SurveylessthanTitle && SurveylessthanSC,SurveyScore,
                            if(SClessthanTitle && SClessthanSurvey,statusgoal,CurrentTitle)),
                        if(SurveylessthanSC,SurveyScore,statusgoal))),
                if(isblank(AnswerGoal) || isblank(SurveyScore), CurrentTitle,
                    if(MinSurveys = -1,
                        if(SurveylessthanTitle && SurveylessthanAnswer,SurveyScore,
                            if(AnswerlessthanTitle && AnswerlessthanSurvey,AnswerGoal,CurrentTitle)),
                        if(SurveylessthanAnswer,SurveyScore,AnswerGoal))))))

A slightly simpler example is below here. Just for a bit of context that would make the code make a bit of sense without seeing the report, on each page of this report I've added in drop down slicers that the end user can use as selectors to adjust their goals for different tiers. Each of those slicers is reading off of a simple table that just has values that range from 0-100.

    TurnTimeGoal =
    VAR CRCount = [CR Count]
    VAR TT = [TurnTime]
    VAR TCGoal = selectedvalue(TurnTimeTC[Column1])
    VAR PCGoal = selectedvalue(TurnTimePC[Column1])
    VAR ExecGoal = selectedvalue(TurnTimeExec[Column1])
    VAR SrGoal = selectedvalue(TurnTimeSenior[Column1])
    RETURN
    if(isblank(CRCount),blank(),
        if(values(TMD[Specialty])="Mainstream PS" || values(TMD[Specialty])="NY PS",
            if(TT<TCGoal,4,
                if(TT<PCGoal,3,
                    if(TT<ExecGoal,2,
                        if(TT<SrGoal,1,0)))),blank()))

Follow up:

Is there any update? I am under pressure at a client site and have no explanation for the "visual has exceeded available resources" error in the Power BI Service reports.

Can anybody help me with this?

Dear all

We were dealing with this issue a year and a half ago and it was an absolute pain. We had numerous conversations with official Microsoft support at the time and, in all honesty, they couldn't help much beyond advising us that the issue was our SQL Server (queries taking more than 5 seconds) and that we should limit the number of visuals in a single report. In a nutshell: limit the amount of data to be fetched, and make sure solid indexing is in place.

So:

1. Check the SQL query performance. The end result in Power BI depends on the performance of your backend.

2. Further tune it with indexing.

3. Consider building an SSAS solution on top of your data instead of tapping the schema tables directly.

Good luck.

I am having the same issue!!

"visual has exceeded the available resources"

Can anybody help me out? I am on-site on a project and have prepared 100+ reports.

One report hits the visual limit and shows no data, even though the report is not that big and only a few measures are being calculated.

Please, can anybody help me out?

I just ran into this problem today. I modified a report that I had previously published. The original report worked completely fine; the longest load time was maybe 5 seconds. In this 2nd iteration of the report, I modified one of the measures to use some more complicated logic. I also removed some measures from the model. The dataset (which is a set of Excel files) is exactly the same; I've not added anything. At first it worked just fine. I came back to it today and made 0 changes, but somehow it is now saying "Visual has exceeded available resources". It makes no sense.

I also had this issue today.

This is what I found. I had a dataset with 5 years of data and a visual displaying the last 5 days of data by default.

As soon as I went to 6 days, the resources were exceeded.

I reduced the data in my Desktop PBI file to 2 years, and then I could view more than the last 6 days of data.

Most likely the available resources are consumed by (dataset size + visual memory usage + calculation/query memory usage).

Of course the moderators will only repeat the documentation as the solution since they are not the software developers.

"The documentation is the final answer and correct"

Follow up:

I changed my date ranges once again to try to reproduce the error, and the resources are no longer exceeded.

So my test outcome was not valid. This must be an issue within the servers, since my original dataset did not reproduce the error.

It must be that, as a Pro user, you get a varying amount of capacity, and sometimes your organization is under the threshold and sometimes over. Then you get errors. Go figure.

Hi

I have the same problem. It says in the details "The query exceeded the maximum memory allowed for queries executed in the current workload group."

So it refers to the Power BI Service. It works fine in Desktop, but not when I publish it to my organization.
