- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Service

- Still getting intermittent data refresh failures

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

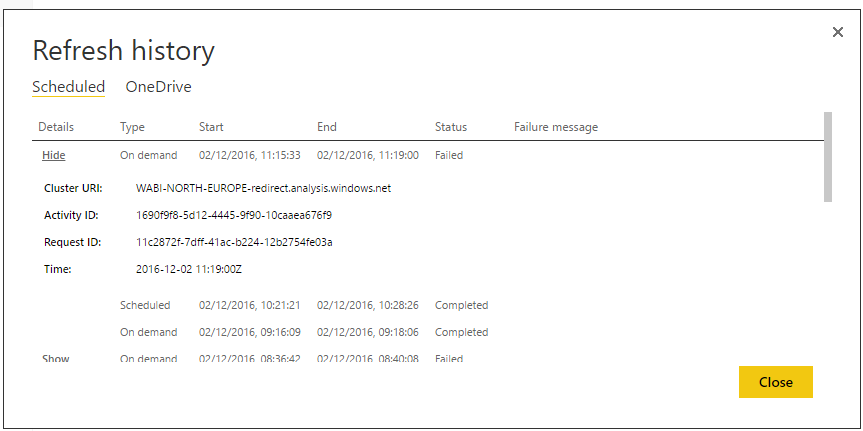

Still getting intermittent data refresh failures

We are still experiencing intermittent data refresh failures for our on-premises data sources.

We have updated our enterprise gateway installation. Everything worked for a day, and then scheduled refreshes started failing again. Sometimes a manual refresh fails as well. Sometimes the scheduled refresh works and sometimes it doesn't.

Anyone else experiencing this?

What's your data source? I suggest you monitor on the data source side (for example, using SQL Server Profiler for SQL Server). If you still can't get useful information, please create a support ticket.

Regards,

Simon Hou

Hi Simon,

I have raised a support ticket.

The .pbix files that fail most often are the ones that combine SSAS and SQL Server data sources. Reports that do a lot of data merging in the Query Editor, for example with Merge Queries, are the most affected. Sometimes they refresh and sometimes they don't. Below is an excerpt from our gateway logs.

This particular report refreshes without problems from Power BI Desktop, although it takes about 4 to 5 minutes. I never get a timeout when refreshing locally. The failures seem to happen in the mornings, when most of our refreshes are scheduled. Could something on the gateway server be struggling when it processes several refreshes at once?
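One way to test the morning-load theory is to count how many scheduled refreshes overlap. Below is a minimal Python sketch, assuming you can list each refresh's start time and a typical duration (here the 4 to 5 minutes mentioned above). The schedule in the example is hypothetical, and the script only measures overlap; it knows nothing about how many concurrent refreshes the gateway can actually handle.

```python
from datetime import datetime, timedelta

def peak_concurrency(refreshes):
    """Return the largest number of refreshes running at the same moment.

    refreshes: list of (start, duration) pairs, where start is a datetime
    and duration is a timedelta.
    """
    events = []
    for start, duration in refreshes:
        events.append((start, 1))               # a refresh starts
        events.append((start + duration, -1))   # the same refresh finishes
    # Sort by time; at equal times -1 sorts before +1, so a refresh that
    # ends exactly when another starts is not counted as overlapping.
    events.sort()
    running = peak = 0
    for _, delta in events:
        running += delta
        peak = max(peak, running)
    return peak

# Hypothetical morning schedule: five reports at 08:00 and one at 08:30,
# each assumed to take about 5 minutes.
day = datetime(2016, 12, 7)
morning = [(day.replace(hour=8), timedelta(minutes=5))] * 5
morning.append((day.replace(hour=8, minute=30), timedelta(minutes=5)))
print(peak_concurrency(morning))  # 5
```

If the peak is high in the window where refreshes fail and low when they succeed, staggering the schedules is a cheap experiment, though a correlation like this would not by itself prove the gateway is the cause.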

DM.EnterpriseGateway Error: 0 : 2016-12-07T10:54:21.9607887Z DM.EnterpriseGateway f1a0e749-489d-480b-ac24-ec3ce3dfff76 80550339-995d-4f24-9f4e-7a05f18d68f7 MGTT 2976f9fa-734c-474e-8398-c5be0a4ea120 6CC65CC9 [DM.Pipeline.Common] Non-gateway exception encountered in activity scope: System.ServiceModel.CommunicationException: The socket connection was aborted. This could be caused by an error processing your message or a receive timeout being exceeded by the remote host, or an underlying network resource issue. Local socket timeout was '00:01:00'.
 ---> System.IO.IOException: The write operation failed, see inner exception.
 ---> System.ServiceModel.CommunicationException: The socket connection was aborted. This could be caused by an error processing your message or a receive timeout being exceeded by the remote host, or an underlying network resource issue. Local socket timeout was '00:01:00'.
 ---> System.Net.Sockets.SocketException: An established connection was aborted by the software in your host machine
   at System.Net.Sockets.Socket.BeginSend(Byte[] buffer, Int32 offset, Int32 size, SocketFlags socketFlags, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.SocketConnection.BeginWrite(Byte[] buffer, Int32 offset, Int32 size, Boolean immediate, TimeSpan timeout, AsyncCallback callback, Object state)
   --- End of inner exception stack trace ---
   at Microsoft.ServiceBus.Channels.SocketConnection.BeginWrite(Byte[] buffer, Int32 offset, Int32 size, Boolean immediate, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.ConnectionStream.BeginWrite(Byte[] buffer, Int32 offset, Int32 count, AsyncCallback callback, Object state)
   at System.Net.Security._SslStream.StartWriting(Byte[] buffer, Int32 offset, Int32 count, AsyncProtocolRequest asyncRequest)
   at System.Net.Security._SslStream.ProcessWrite(Byte[] buffer, Int32 offset, Int32 count, AsyncProtocolRequest asyncRequest)
   --- End of inner exception stack trace ---
   at System.Net.Security._SslStream.ProcessWrite(Byte[] buffer, Int32 offset, Int32 count, AsyncProtocolRequest asyncRequest)
   at System.Net.Security._SslStream.BeginWrite(Byte[] buffer, Int32 offset, Int32 count, AsyncCallback asyncCallback, Object asyncState)
   at Microsoft.ServiceBus.Channels.StreamConnection.BeginWrite(Byte[] buffer, Int32 offset, Int32 size, Boolean immediate, TimeSpan timeout, AsyncCallback callback, Object state)
   --- End of inner exception stack trace ---
Server stack trace:
   at Microsoft.ServiceBus.Channels.StreamConnection.BeginWrite(Byte[] buffer, Int32 offset, Int32 size, Boolean immediate, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.BufferedConnection.BeginWrite(Byte[] buffer, Int32 offset, Int32 size, Boolean immediate, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.FramingDuplexSessionChannel.SendAsyncResult.WriteCore()
   at Microsoft.ServiceBus.Channels.FramingDuplexSessionChannel.SendAsyncResult..ctor(FramingDuplexSessionChannel channel, Message message, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.FramingDuplexSessionChannel.OnBeginSend(Message message, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.Channels.OutputChannel.BeginSend(Message message, TimeSpan timeout, AsyncCallback callback, Object state)
   at Microsoft.ServiceBus.SocketConnectionChannelListener`2.DuplexSessionChannel.BeginSend(Message message, TimeSpan timeout, AsyncCallback callback, Object state)
   at System.ServiceModel.Dispatcher.DuplexChannelBinder.BeginSend(Message message, TimeSpan timeout, AsyncCallback callback, Object state)
   at System.ServiceModel.Channels.ServiceChannel.SendAsyncResult.StartSend(Boolean completedSynchronously)
   at System.ServiceModel.Channels.ServiceChannel.BeginCall(String action, Boolean oneway, ProxyOperationRuntime operation, Object[] ins, TimeSpan timeout, AsyncCallback callback, Object asyncState)
   at System.ServiceModel.Channels.ServiceChannel.BeginCall(ServiceChannel channel, ProxyOperationRuntime operation, Object[] ins, AsyncCallback callback, Object asyncState)
   at System.Threading.Tasks.TaskFactory`1.FromAsyncImpl[TArg1,TArg2,TArg3](Func`6 beginMethod, Func`2 endFunction, Action`1 endAction, TArg1 arg1, TArg2 arg2, TArg3 arg3, Object state, TaskCreationOptions creationOptions)
   at System.ServiceModel.Channels.ServiceChannelProxy.TaskCreator.CreateTask(ServiceChannel channel, ProxyOperationRuntime operation, Object[] inputParameters)
   at System.ServiceModel.Channels.ServiceChannelProxy.InvokeTaskService(IMethodCallMessage methodCall, ProxyOperationRuntime operation)
   at System.ServiceModel.Channels.ServiceChannelProxy.Invoke(IMessage message)
Exception rethrown at [0]:
   at System.Runtime.Remoting.Proxies.RealProxy.HandleReturnMessage(IMessage reqMsg, IMessage retMsg)
   at System.Runtime.Remoting.Proxies.RealProxy.PrivateInvoke(MessageData& msgData, Int32 type)
   at Microsoft.PowerBI.DataMovement.Pipeline.InternalContracts.IGatewayTransferCallback.TransferCallbackAsync(Byte[] packet)
   at Microsoft.PowerBI.DataMovement.Pipeline.GatewayCore.ServiceModel.GatewayTransferService.<ProcessTransferTelemetryAsync>d__25.MoveNext()
--- End of stack trace from previous location where exception was thrown ---
   at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)
   at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)
   at Microsoft.PowerBI.DataMovement.Pipeline.GatewayCore.ServiceModel.GatewayTransferService.<>c__DisplayClass5.<<TransferAsync>b__3>d__a.MoveNext()
--- End of stack trace from previous location where exception was thrown ---
   at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)
   at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)
   at Microsoft.PowerBI.DataMovement.Pipeline.Common.Diagnostics.PipelineTelemetryService.<ExecuteInActivity>d__3.MoveNext()

DM.EnterpriseGateway Error: 0 : 2016-12-07T10:54:21.9607887Z DM.EnterpriseGateway f1a0e749-489d-480b-ac24-ec3ce3dfff76 80550339-995d-4f24-9f4e-7a05f18d68f7 MGTT 2976f9fa-734c-474e-8398-c5be0a4ea120 D9A36E69 [DM.Pipeline.Common.TracingTelemetryService] Event: FireActivityCompletedWithFailureEvent (duration=3, err=CommunicationException, rootcauseErrorEventId=0)
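To check whether failures really cluster in the morning, the failure summary entries can be counted per hour. This is a minimal Python sketch; it assumes each trace entry occupies a single line in the gateway log file and that the header and `FireActivityCompletedWithFailureEvent` formats match the excerpt above, which may not hold for other gateway versions. The timestamps end in `Z` (UTC), so shift the hours to local time before comparing them with the refresh schedule.

```python
import re
from collections import Counter

# Assumption: each entry starts with a header like the one in the excerpt:
# "DM.EnterpriseGateway Error: 0 : 2016-12-07T10:54:21.9607887Z ..."
ENTRY_HEADER = re.compile(
    r"DM\.EnterpriseGateway Error: \d+ : (\d{4}-\d{2}-\d{2})T(\d{2}):"
)
# Summary event logged when an activity fails, e.g.
# "FireActivityCompletedWithFailureEvent (duration=3, err=CommunicationException, ..."
FAILURE_EVENT = re.compile(r"FireActivityCompletedWithFailureEvent \([^)]*?err=(\w+)")

def failures_by_hour(log_text):
    """Count failure events per (date, UTC hour, error type)."""
    counts = Counter()
    for line in log_text.splitlines():
        header = ENTRY_HEADER.search(line)
        failure = FAILURE_EVENT.search(line)
        if header and failure:
            date, hour = header.groups()
            counts[(date, int(hour), failure.group(1))] += 1
    return counts

# The failure summary line from the excerpt above.
sample = (
    "DM.EnterpriseGateway Error: 0 : 2016-12-07T10:54:21.9607887Z "
    "DM.EnterpriseGateway f1a0e749-489d-480b-ac24-ec3ce3dfff76 "
    "80550339-995d-4f24-9f4e-7a05f18d68f7 MGTT 2976f9fa-734c-474e-8398-c5be0a4ea120 "
    "D9A36E69 [DM.Pipeline.Common.TracingTelemetryService] "
    "Event: FireActivityCompletedWithFailureEvent "
    "(duration=3, err=CommunicationException, rootcauseErrorEventId=0)"
)
print(failures_by_hour(sample))
# Counter({('2016-12-07', 10, 'CommunicationException'): 1})
```

Running this over a few days of gateway logs (read the file's contents into a string first) gives a per-hour failure count that can be lined up against the refresh schedule.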