How can we delete rows in a Power BI push dataset using the REST API?

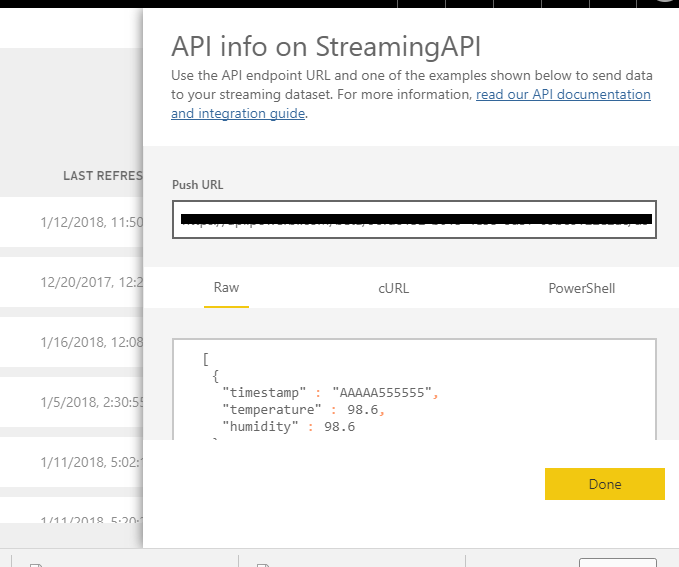

I need to show real-time data on my dashboard. To do that, I am using the Power BI REST API to push data into a streaming dataset with historical data analysis enabled. I can push data using the REST API push URL provided when the streaming dataset is created. However, I need to delete all existing data before pushing each new row. I enabled historical data analysis only because I want the visualizations that are available in that mode; I don't need the historical data itself. The delete request returns a 401 Unauthorized error when I send it with Python's requests library. Do we have to specify the group ID, dataset ID, and table name separately, or will the generated push URL work? I am getting the push URL from the screen in the screenshot.

This issue is still relevant! With a regular push dataset you can now do that, but with the simplified streaming API you can't, which is a silly limitation. In practice, the only option there is to drop the historical data manually and then re-push all the data.
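For the regular push dataset case, here is a minimal sketch of what the delete call could look like. It assumes the documented Delete Rows endpoint of the Power BI REST API, which is addressed by group ID, dataset ID, and table name and expects an Azure AD bearer token (with dataset read/write permission, as far as I know). The key-based push URL from the streaming dataset screen appears to accept only POST, which would explain the 401. The IDs, table name, and token below are placeholders, and the helper name is made up for illustration.

```python
import urllib.parse
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_delete_rows_request(group_id, dataset_id, table_name, access_token):
    """Build (but do not send) a DELETE request that clears all rows of one table."""
    url = (
        f"{API_ROOT}/groups/{group_id}/datasets/{dataset_id}"
        f"/tables/{urllib.parse.quote(table_name)}/rows"
    )
    return urllib.request.Request(
        url,
        method="DELETE",
        # Assumption: the token was obtained separately (for example via MSAL).
        headers={"Authorization": f"Bearer {access_token}"},
    )


# Usage sketch with placeholder values; uncommenting sends a real request.
# req = build_delete_rows_request("<group-id>", "<dataset-id>", "<table>", "<token>")
# with urllib.request.urlopen(req) as resp:
#     print(resp.status)
```

The same request can be sent with the requests library; the important parts are the DELETE method, the full table URL, and the Authorization header.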

I recently wrote a post on how to delete data from a push dataset using PowerShell. You could compare your script against it to see whether that resolves your 401 error.

https://sqlitybi.com/how-to-delete-data-from-push-streaming-dataset-power-bi/

Any idea how to accomplish this? I have a PushStreaming (hybrid) dataset that needs to be reset every few seconds.

So here is the goal:

Delete all the rows at the REST API endpoint --> push the new data (say, from a SQL view) --> the report/visual refreshes. I am working on a requirement where this has to happen every few seconds.
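One way to picture that cycle is as a pair of requests against the same table URL: a DELETE to clear the rows, then a POST with the fresh rows. The sketch below only builds the two requests; it assumes the authenticated Delete Rows and Post Rows endpoints of the Power BI REST API and a bearer token obtained elsewhere. The function name and all IDs are hypothetical. Push datasets have documented rate limits, so it is worth checking them before running this every few seconds.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_reset_requests(group_id, dataset_id, table_name, access_token, rows):
    """Return [delete_request, post_request] for one reset cycle, both unsent."""
    url = f"{API_ROOT}/groups/{group_id}/datasets/{dataset_id}/tables/{table_name}/rows"
    auth = {"Authorization": f"Bearer {access_token}"}

    # Step 1: clear every row currently stored in the table.
    delete_req = urllib.request.Request(url, method="DELETE", headers=auth)

    # Step 2: push the replacement rows as a JSON body of the form {"rows": [...]}.
    body = json.dumps({"rows": rows}).encode("utf-8")
    post_req = urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={**auth, "Content-Type": "application/json"},
    )
    return [delete_req, post_req]


# Usage sketch with placeholder values; uncommenting sends real requests in order.
# for req in build_reset_requests("<group-id>", "<dataset-id>", "<table>", "<token>",
#                                 [{"value": 42.0}]):
#     urllib.request.urlopen(req).close()
```

Note that there is a short window between the two calls in which the table is empty, so a visual that refreshes at that moment may briefly show no data.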

Why am I not using a plain streaming dataset? Because I need a custom visual and don't want to be limited to the few visuals available with the streaming option.

Thank you,

Hi,

Is there an update on this? Is it on the roadmap? Being able to delete specific rows from a streaming dataset with history enabled has been on my Christmas wish list for a while, as has being able to empty a dataset through the web UI.

Simple no-auth POST calls into streaming datasets are super useful, versatile, and easy. Yet, in my experience, it feels extra silly to delete and re-create an entire dataset simply to correct mistakes or undo accidental duplicate posts. I've done it a bunch of times...

Cheers, Gregor

Hi @bhanudaybirla,

>>But i need to delete all the data before pushing each single row

A streaming dataset currently has no underlying data store; it only receives pushed data and does not support any other operations.

As with the streaming dataset, with the PubNub streaming dataset there is no underlying database in Power BI, so you cannot build report visuals against the data that flows in, and cannot take advantage of report functionality such as filtering, custom visuals, and so on.

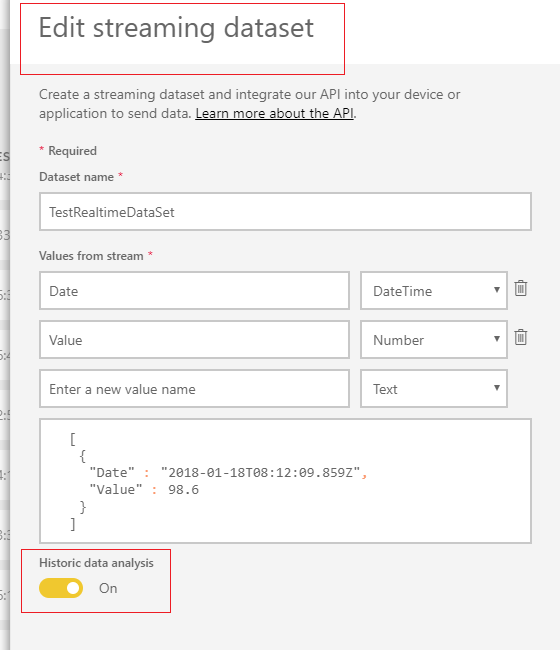

In my opinion, if you do not need historical data, you can simply turn historic data analysis off.

When Historic data analysis is disabled (it is disabled by default), you create a streaming dataset as described earlier in this article. When Historic data analysis is enabled, the dataset created becomes both a streaming dataset and a push dataset. This is equivalent to using the Power BI REST APIs to create a dataset with its defaultMode set to pushStreaming, as described earlier in this article.

Real-time streaming in Power BI
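To make the quoted equivalence concrete, here is a rough sketch of the dataset definition one would send to the REST API to get the same hybrid behavior, with `defaultMode` set to `PushStreaming`. The dataset name, table name, and columns are made-up examples, and the helper function is hypothetical. The body would be POSTed to the datasets endpoint of a workspace (`https://api.powerbi.com/v1.0/myorg/groups/{groupId}/datasets`) with a bearer token.

```python
import json


def push_streaming_dataset_definition(name, table_name, columns):
    """Build the JSON body for a dataset that is both push and streaming.

    columns maps each column name to a Power BI data type string.
    """
    return {
        "name": name,
        "defaultMode": "PushStreaming",
        "tables": [
            {
                "name": table_name,
                "columns": [
                    {"name": col, "dataType": dtype} for col, dtype in columns.items()
                ],
            }
        ],
    }


# Example values are placeholders, not taken from the thread.
definition = push_streaming_dataset_definition(
    "RealTimeData", "Readings", {"sensor": "String", "value": "Double"}
)
print(json.dumps(definition, indent=2))
```

A dataset created this way has a dataset ID and table name that the authenticated row endpoints can address, which the key-based push URL alone does not give you.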

Regards,

Xiaoxin Sheng

If this post helps, please consider accepting it as the solution to help other members find it more quickly.

But if I disable historical data analysis, I cannot use all the Power BI visuals.

Hi @bhanudaybirla,

As I said, the streaming API does not support any operation except pushing data, so I think your requirement is impossible to achieve at present.

Regards,

Xiaoxin Sheng

If this post helps, please consider accepting it as the solution to help other members find it more quickly.
