Cannot refresh or save dataflow - Cannot acquire lock for model

Hi all,

I've just come back from holidays (Happy New Year to you all!) and found that various dataflows stopped working while I was away, which is not good...

They don't refresh, whether on schedule or manually. There isn't an obvious error: the error files say all entities refreshed correctly, yet the dataflow itself failed to refresh, with no error message at all.

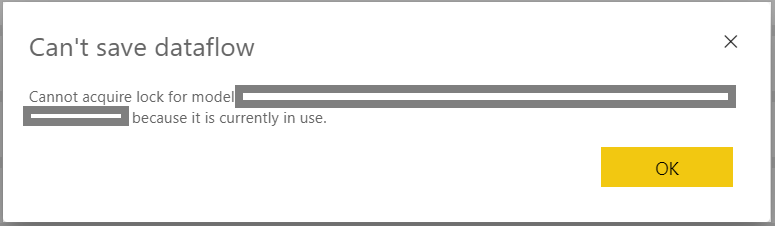

If I try to edit the dataflow and then save, a window appears with the "Cannot acquire lock for model" error.

Thanks,

Hi @NAOS ,

You could refer to this solved case.

Restart your premium capacity and check if it works.

If this post helps, please consider accepting it as the solution to help other members find it.

Hi @v-eachen-msft ,

Thanks for your answer. Unfortunately, that doesn't work for me. The users in that thread actually mention that even restarting the Premium capacity several times didn't fix the bug.

If you check the answer given to that post, you'll notice it isn't actually a solution, nor does it fix the problem.

I've raised a ticket to support and will wait to see what they can add to it.

If you could in the meantime try to find out more about the issue with the team it would be much appreciated since more than a few of our dataflows are presenting this problem...

Thanks

Thanks for the confirmation that you already submitted a support ticket.

Could you please share the support ticket number so we can get quick traction on it?

If you have any queries, please let us know.

If this post was helpful, please consider accepting it as the solution and giving it a thumbs up, to help other members find it more quickly.

Best Regards,

Venal.

Hi @venal !

Thanks for your comments. The support request number is 120010222001657. I was advised to check that the "Enhanced Dataflows Compute Engine" was active (which it was) and to reset the Premium capacity (something our IT department has yet to do).

I'll post to this thread if I have any updates or something changes.

Thanks,

NAOS

@NAOS I'm experiencing something similar with one particular dataflow that has two entities connecting to an Azure SQL Database without using a gateway. It started failing on Thursday 02/01. The dataflow refresh status shows as "Failed", but the entity refresh status for both entities shows as "Completed". There is no error message in the "Error" column. It fails on both scheduled and manual refreshes.

When I try to modify the dataflow and save the changes, I get the "cannot acquire lock for model" error message.

If I export the JSON and create a new replica dataflow in the same workspace by importing the JSON, it works fine.

I will be interested to know if you find a solution!

@PaulKn brilliant! I'm not sure why I hadn't tried that yet, but it worked perfectly.

The dataflows that aren't working for me query data from different sources; some use a gateway and others don't, and nothing really seems to show a pattern. They just suddenly stopped working after months without issues or changes to the queries (nor were large amounts of data added).

Anyway, lesson learned. Although it's not the perfect solution, I will now add a parameter to hold the dataflow ID in my queries, so that if it happens again I can just create a new dataflow and put its ID in the parameter to adjust the queries.

Many thanks!

NAOS

@NAOS I didn't really consider creating a new dataflow to be a solution, more a troubleshooting step to rule out any connection issues etc. Whilst we do sometimes use parameters in reports to hold the workspace and dataflow ID (so that we can easily switch between a dev and a production version of a dataflow), if you have a large number of reports created by users of mixed skill sets, as we have, then changing the ID on all of them isn't really practical.

Did your IT Department try restarting the capacity? We will try that tonight, but in the meantime I guess I will log a case as well.

Hi @PaulKn ,

We haven't yet restarted our capacity (IT dep. still hasn't done it...), but I'll post here once we do to let you know if that fixed the issue or not.

Kind regards,

NAOS

@NAOS Rather than restarting the whole capacity (which would need to be done out of hours to minimise disruption), I went into Admin Portal >> Capacity Settings and, under Workloads >> Dataflows, increased the Max Memory by 1%, applied the change, then changed it back to its original setting and applied the change again. This only took a few seconds. The theory was that this would force the dataflow workload to restart, which might clear the problem.

It appears to have worked as I am now able to refresh the dataflow ok, so this might be something worth suggesting to your IT Department as being less disruptive than restarting the whole capacity.
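For anyone who would rather script the Max Memory bump described above than click through the Admin Portal, here is a minimal sketch in Python. It is not what was done in this thread (the change was made by hand in the portal). It assumes the Power BI REST API's capacity workload endpoint (`PATCH .../capacities/{capacityId}/Workloads/{workloadName}` with `state` and `maxMemoryPercentageSetByUser` in the body) is available for your capacity and that the workload is named `Dataflows`; please check both against the current REST API documentation. The function names, the capacity ID and the token are hypothetical placeholders.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def plan_memory_nudge(capacity_id, current_pct, workload="Dataflows", bump=1):
    """Return the two (url, payload) PATCH steps: bump Max Memory, then restore it.

    The endpoint path, the payload keys and the "Dataflows" workload name are
    assumptions to verify against the REST API docs for your capacity type.
    """
    if current_pct <= 0 or current_pct + bump > 100:
        raise ValueError("current_pct + bump must stay within 1..100")
    url = f"{API_ROOT}/capacities/{capacity_id}/Workloads/{workload}"
    return [
        # Step 1: raise the limit slightly so the setting actually changes.
        (url, {"state": "Enabled", "maxMemoryPercentageSetByUser": current_pct + bump}),
        # Step 2: put the original value back.
        (url, {"state": "Enabled", "maxMemoryPercentageSetByUser": current_pct}),
    ]


def send_patch(url, payload, token):
    """Send one PATCH request with a bearer token and return the HTTP status."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )
    with urllib.request.urlopen(request) as response:
        return response.status


def nudge_dataflows_workload(capacity_id, current_pct, token, send=send_patch):
    """Apply both steps in order; `send` can be swapped out for a dry run."""
    return [
        send(url, payload, token)
        for url, payload in plan_memory_nudge(capacity_id, current_pct)
    ]
```

A dry run such as `plan_memory_nudge("<capacity-id>", 20)` only builds the two requests, so you can inspect them before sending anything. Whether changing the setting this way restarts the dataflow workload in the same way as the portal change is not confirmed anywhere in this thread.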

@PaulKn , @venal , @v-eachen-msft

That worked! I'll try to change the last comment to be the solution.

Thanks PaulKn! Great work.

NAOS

You could check the Issues forum here:

https://community.powerbi.com/t5/Issues/idb-p/Issues

And if it is not there, then you could post it.

If you have a Pro account, you could also open a support ticket, which is free. Go to https://support.powerbi.com, scroll down and click "CREATE SUPPORT TICKET".
