- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- how to Pick a 2 random transaction per user from a...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

how to Pick a 2 random transaction per user from a list of 5000+ transactions using power Query

I have a raw data with 5000+ transactions that has transaction ID, username, date etc. From this i want to extract /pick 2 random transaction for every username in a new table - pls suggest how to achieve this using power query

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Seer_Bug

This solution should work for you with better performance, I have reduced the steps as well (No buffering or sorting). I have given the explanation as comments with the code. Past the code and check, please.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZLLCQMxDER78XkDkvyvxewxDSSk/0TgwFPIgmEOD3lGg9ZKmo70et4frqK3zzNJ57GSbeAqBpA3cJUMUDZwlQJQN3CVCtA2cJUG0GnOiUFzTkzu0QFU+NcgUX42SQyrKJfXfLWLFvowmlaSkK0xQcjWWWbINpBAw8y8qtO+HfTfBKb04W8WDoAJjBcQ2rHQAduxSp+QLRxByNb/Zjvf", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

#"Added Index" = Table.AddIndexColumn(#"Changed Type", "Index", 1, 1, Int64.Type),

//Added an Index Column before Generating random numbers, becasue, without the Index, same value is generated.

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each Number.Random()),

// Random number generated

#"Grouped Rows" = Table.Group(#"Added Custom", {"username"}, {{"Count", each _, type table [transaction ID=nullable text, username=nullable text, date=nullable date, Index=number, Custom=number]}}),

//Grouped by Username adding a table type column with all the rows per username

#"Added Custom1" = Table.AddColumn(#"Grouped Rows", "Random 2 IDs", each Table.MaxN([Count], each [Custom], 2)[transaction ID]),

// Adding a column that returns two IDs filtered by the top 2 random numbers. Since Random numbers are independant, MaxN works well here.

#"Removed Columns" = Table.RemoveColumns(#"Added Custom1",{"Count"}),

#"Expanded Random 2 IDs" = Table.ExpandListColumn(#"Removed Columns", "Random 2 IDs")

in

#"Expanded Random 2 IDs"

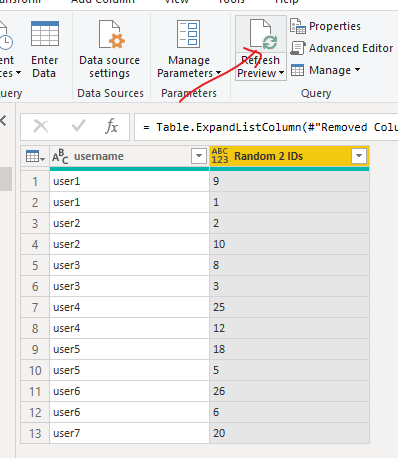

To test if you are really getting the random 2, click on the Refresh button and see the IDs changing.

@Smauro

Thanks a lot for your feedback and advice, this platform is so helpful when contributors like you share their thoughts.

Thanks again 🙂

________________________

If my answer was helpful, please consider Accept it as the solution to help the other members find it

Click on the Thumbs-Up icon if you like this reply 🙂

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Step 1: Get your data to Power Query

Simple. Grab the data you want to sample and bring it to PQ. At this point, you will get something like this:

Step 2: Add Random Numbers as a column

Go to “Add Column” > Custom Column and add this formula.

=Number.Random()

Remember: Power Query formulas are case-sensitive. So type exactly. Name this column “Random”

But Power Query gives same random number in all rows …

That is right. As Power Query is a parallel language, each row gets same random number (unlike Excel’s RAND() filled down a column).

Note: your experience with Number.Random() could be different, but as you build transformations, at some point PQ will replace all numbers with same value.

So how to get different numbers per row? Simple, we force PQ to evaluate something per row. A simple thing like index number column will do. This will force PQ to run random formula for all rows.

Hat tip to Gil Raviv for suggesting this technique in a forum post.

Step 3: Add Index Number column & Sort the random numbers

Go to “Add column” > Index number. Now that we have index numbers in a column, this will force PQ to regenerate the random number per row.

Select the random number column and sort it.

Note: You may need to switch Steps 2 & 3 if the random numbers are same all the way thru.

Step 4: Keep top 100 rows

Go to Home > Keep Rows > Keep Top Rows. Enter the sample size you want (100) and Click OK. Your sample is ready.

Step 5: Remove the Random & Index columns

Now that our sample is ready, let’s remove the random & index number columns. We do not need them in the final output (or model). Click on Save & Load (or Close & Apply).

Enjoy the sample.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Seer_Bug

check out this easy code. It takes only Table.Group and within the function 2 random rows are picked.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZJNCkIxDAbv0vUTkvT/LI+39AKK99dAlYlQKHyLIekk5DyTpiO9nveHp+jt80zSdZzJFvAUA8gLeEoGKAt4SgGoC3hKBWgLeEoD6PycFYOfs2Jyjg6gwl6DRNlskhhGUQ6veTeLlt0wWmkQ3BoNglvnMoPbgIGGmrkzsO8O+r+BKWvYzcIB0MB4AWE7Fk6A27FwA8EtHEFw67T+uV1v", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

ChangeType = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

GroupAndPick2Random = Table.Group(ChangeType, {"username"}, {{"AllRows", each Table.RemoveColumns( Table.FirstN(Table.Sort(Table.Buffer(Table.AddColumn(_, "Random", each Number.Random())), {{"Random", Order.Ascending}}),2),{"username", "Random"}), type table [transaction ID=text, username=text, date=date]}}),

Expand = Table.ExpandTableColumn(GroupAndPick2Random, "AllRows", {"transaction ID", "date"}, {"transaction ID", "date"})

in

Expand

Copy paste this code to the advanced editor in a new blank query to see how the solution works.

If this post helps or solves your problem, please mark it as solution (to help other users find useful content and to acknowledge the work of users that helped you)

Kudoes are nice too

Have fun

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Seer_Bug ,

Here's another solution based on @Fowmy's table:

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZJBCoQwDEXv0rUDSaxWzyIuvYA6958JdOD9AaHwF4+kLyHbVrwM5X0dZ6b56/vCyj5sJTrItAAYO8i0EaB2kGkVYOog0yaAuYNMmwEaP2fFws9ZsXKOBuDGXguJs9lKEhjFObyPT7N4fRrGJxqI20wDcWtcprgtMHCpWZ8M4reD9m8Qzhp2CzkAGgQvQLYTcgLcTsgNiJscgbg1Wotb7uA+rlvA/gE=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

ListPickRandomN = (l as list, n as number) as list =>

let

RandomN = (n as number, count as number) as list =>

let

getList = List.Transform( List.Random(n) , each Number.Mod(Int64.From(_ * List.Max( {100000, 100*count }) ), count) ),

bufferTheList = List.Buffer(getList),

final = if List.IsDistinct(bufferTheList) then bufferTheList else @RandomN(n, count)

in

final,

countItems = List.Count(l),

returnList = if countItems <= n then l else List.Distinct( List.Transform( RandomN( n, countItems ), (item) => l{item}) )

in

returnList,

#"Select Transactions per User" = Table.Group(#"Changed Type", {"username"}, {{"Selected Transactions", each ListPickRandomN([transaction ID], 2), type {text}} }),

#"Expanded Selected Transactions" = Table.ExpandListColumn(#"Select Transactions per User", "Selected Transactions")

in

#"Expanded Selected Transactions"

It should run fast enough.

As a new table:

let

ListPickRandomN = (l as list, n as number) as list =>

let

RandomN = (n as number, count as number) as list =>

let

getList = List.Transform( List.Random(n) , each Number.Mod(Int64.From(_ * List.Max( {100000, 100*count }) ), count) ),

bufferTheList = List.Buffer(getList),

final = if List.IsDistinct(bufferTheList) then bufferTheList else @RandomN(n, count)

in

final,

countItems = List.Count(l),

returnList = if countItems <= n then l else List.Distinct( List.Transform( RandomN( n, countItems ), (item) => l{item}) )

in

returnList,

#"Select Transactions per User" = Table.Group(#"Your table name", {"username"}, {{"Selected Transactions", each ListPickRandomN([transaction ID], 2), type {text}} }),

#"Expanded Selected Transactions" = Table.ExpandListColumn(#"Select Transactions per User", "Selected Transactions")

in

#"Expanded Selected Transactions"

where you should replace #"Your table name" with your table name, username with your user name column and [transaction ID] with your transaction ID column.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Seer_Bug

Try the following code by pasting it in a blank query in the advanced editor. You can follow the steps to modify your source.

Let me know how it works for 5000 rows.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZJNCkIxDAbv0vUTkvT/LI+39AKK99dAlYlQKHyLIekk5DyTpiO9nveHp+jt80zSdZzJFvAUA8gLeEoGKAt4SgGoC3hKBWgLeEoD6PycFYOfs2Jyjg6gwl6DRNlskhhGUQ6veTeLlt0wWmkQ3BoNglvnMoPbgIGGmrkzsO8O+r+BKWvYzcIB0MB4AWE7Fk6A27FwA8EtHEFw67T+uV1v", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

#"Added Index" = Table.AddIndexColumn(#"Changed Type", "Index", 0, 1, Int64.Type),

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each Number.Random() * 1),

#"Grouped Rows" = Table.Group(#"Added Custom", {"username"}, {{"Count", each _, type table [transaction ID=nullable text, username=nullable text, date=nullable date, Custom=number]}}),

#"Added Custom1" = Table.AddColumn(#"Grouped Rows", "Custom", each List.FirstN([Count][Custom],2)),

#"Expanded Count" = Table.ExpandTableColumn(#"Added Custom1", "Count", {"transaction ID", "username", "date", "Index", "Custom"}, {"transaction ID", "username.1", "date", "Index", "Custom.1"}),

#"Expanded Custom" = Table.ExpandListColumn(#"Expanded Count", "Custom"),

#"Inserted Subtraction" = Table.AddColumn(#"Expanded Custom", "Subtraction", each [Custom.1] - [Custom], type number),

#"Filtered Rows" = Table.SelectRows(#"Inserted Subtraction", each [Subtraction] = 0),

#"Removed Columns" = Table.RemoveColumns(#"Filtered Rows",{"username.1", "date", "Index", "Custom.1", "Custom", "Subtraction"})

in

#"Removed Columns"

________________________

If my answer was helpful, please consider Accept it as the solution to help the other members find it

Click on the Thumbs-Up icon if you like this reply 🙂

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

its working thanks a ton 🙂

first i copy pasted the code, later tried to do the steps on my own, it worked.

I am a newbie to power query - would it be possible to explain the background logic ( like what does custom1 and custom do, why do we subtract them etc)

Thanks in advance

❤️

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Seer_Bug

Please use the code below, I identified some issues in the code submitted earlier.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZJNCkIxDAbv0vUTkvT/LI+39AKK99dAlYlQKHyLIekk5DyTpiO9nveHp+jt80zSdZzJFvAUA8gLeEoGKAt4SgGoC3hKBWgLeEoD6PycFYOfs2Jyjg6gwl6DRNlskhhGUQ6veTeLlt0wWmkQ3BoNglvnMoPbgIGGmrkzsO8O+r+BKWvYzcIB0MB4AWE7Fk6A27FwA8EtHEFw67T+uV1v", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

#"Added Index" = Table.AddIndexColumn(#"Changed Type", "Index", 1, 1, Int64.Type),

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each Number.Random() * [Index] ),

Custom1 = Table.Buffer(#"Added Custom"),

#"Grouped Rows" = Table.Group(Custom1, {"username"}, {{"Count", each _, type table [transaction ID=nullable text, username=nullable text, date=nullable date, Index=number, Custom=number]}}),

#"Added Custom1" = Table.AddColumn(#"Grouped Rows", "Custom", each List.MaxN([Count][Custom],2)),

#"Expanded Custom" = Table.ExpandListColumn(#"Added Custom1", "Custom"),

#"Expanded Count" = Table.ExpandTableColumn(#"Expanded Custom", "Count", {"transaction ID", "username", "date", "Index", "Custom"}, {"transaction ID", "username.1", "date", "Index", "Custom.1"}),

#"Inserted Subtraction" = Table.AddColumn(#"Expanded Count", "Subtraction", each [Custom.1] - [Custom], type number),

#"Filtered Rows" = Table.SelectRows(#"Inserted Subtraction", each [Subtraction] = 0),

#"Removed Other Columns" = Table.SelectColumns(#"Filtered Rows",{"username", "transaction ID"})

in

#"Removed Other Columns"________________________

If my answer was helpful, please consider Accept it as the solution to help the other members find it

Click on the Thumbs-Up icon if you like this reply 🙂

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Fowmy

You should try not to buffer a whole table just to tell the engine to actually calculate some random numbers. This can decrease substantially the performance of a query, especially in large tables if we start paginating data in the mashup engine.

Take a look at what I wrote, it only buffers a list of n elements to achieve the same result, which is simply to keep n distinct random numbers in memory.

Adding an index is also not the way to go there, since it actually diminishes the randomness: the lower in the table a value is the higher the chance it will be picked by List.MaxN

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @Fowmy 🙂 this worked too. Help me learn what difference does it create when we use list.MaxN instead of list.FirstN , and why do we use table.buffer

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Seer_Bug

This solution should work for you with better performance, I have reduced the steps as well (No buffering or sorting). I have given the explanation as comments with the code. Past the code and check, please.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("dZLLCQMxDER78XkDkvyvxewxDSSk/0TgwFPIgmEOD3lGg9ZKmo70et4frqK3zzNJ57GSbeAqBpA3cJUMUDZwlQJQN3CVCtA2cJUG0GnOiUFzTkzu0QFU+NcgUX42SQyrKJfXfLWLFvowmlaSkK0xQcjWWWbINpBAw8y8qtO+HfTfBKb04W8WDoAJjBcQ2rHQAduxSp+QLRxByNb/Zjvf", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"transaction ID" = _t, username = _t, date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"date", type date}},"en-gb"),

#"Added Index" = Table.AddIndexColumn(#"Changed Type", "Index", 1, 1, Int64.Type),

//Added an Index Column before Generating random numbers, becasue, without the Index, same value is generated.

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each Number.Random()),

// Random number generated

#"Grouped Rows" = Table.Group(#"Added Custom", {"username"}, {{"Count", each _, type table [transaction ID=nullable text, username=nullable text, date=nullable date, Index=number, Custom=number]}}),

//Grouped by Username adding a table type column with all the rows per username

#"Added Custom1" = Table.AddColumn(#"Grouped Rows", "Random 2 IDs", each Table.MaxN([Count], each [Custom], 2)[transaction ID]),

// Adding a column that returns two IDs filtered by the top 2 random numbers. Since Random numbers are independant, MaxN works well here.

#"Removed Columns" = Table.RemoveColumns(#"Added Custom1",{"Count"}),

#"Expanded Random 2 IDs" = Table.ExpandListColumn(#"Removed Columns", "Random 2 IDs")

in

#"Expanded Random 2 IDs"

To test if you are really getting the random 2, click on the Refresh button and see the IDs changing.

@Smauro

Thanks a lot for your feedback and advice, this platform is so helpful when contributors like you share their thoughts.

Thanks again 🙂

________________________

If my answer was helpful, please consider Accept it as the solution to help the other members find it

Click on the Thumbs-Up icon if you like this reply 🙂

⭕ Subscribe and learn Power BI from these videos

⚪ Website ⚪ LinkedIn ⚪ PBI User Group

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 100 | |

| 51 | |

| 19 | |

| 12 | |

| 11 |