- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Value and Identifier in 2 Columns

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Value and Identifier in 2 Columns

I'm pretty sure this is rather easy but I cant figure out the best terms to search for the solution.

I have numerical values that I want to sum but their "identifier" is next to the value in another column. Its an exported report and as you can see, the categories change.

| Model Material 1 | Model Material 1 Usage | Model Material 2 | Model Material 2 Usage |

| VeroPureWhite | 56.992 | Agilus30Clear | 33.619 |

| VeroMagenta | 0 | VeroPureWhite | 28.605 |

| VeroPureWhite | 52.155 | Agilus30Clear | 34.051 |

| VeroPureWhite | 39.022 | Agilus30Clear | 26.847 |

| VeroPureWhite | 44.657 | Agilus30Clear | 26.145 |

Whats the best way to properly categorize the data to get the sum of each material type?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

So it looks like alot of people had different approaches and i'm still trying to figure out the grouping.

I grouped the data and unpivoted the table. I plan to use the data/time started as the unique indentifier column.

Since the material used falls on a line under the material type used, I need to get these on the same line? The group by function is what is confusing me.

| Printer | Started | Completed | Total Job Time | Total Print Time | Status | Attribute | Value |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 1 | VeroPureWhite |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 1 Usage | 56.992 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 2 | Agilus30Clear |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 2 Usage | 33.619 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 3 | TissueMatrix |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 3 Usage | 18.039 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 4 | VeroMagenta-V |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 4 Usage | 24.561 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 5 | BoneMatrix |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 5 Usage | 23.18 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 6 | GelMatrix |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Model Material 6 Usage | 26.864 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Support Material 1 | SUP706 |

| 00E0F434FC6C | 9/18/20 15:06 | 9/18/20 19:14 | 0.04:08:31 | 0.04:04:32 | Finish | Support Material 1 Usage | 202.76 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 1 | VeroMagenta |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 1 Usage | 0 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 2 | VeroPureWhite |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 2 Usage | 28.605 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 3 | Agilus30Clear |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 3 Usage | 22.325 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 4 | TissueMatrix |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 4 Usage | 9.522 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 5 | BoneMatrix |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 5 Usage | 9.956 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 6 | GelMatrix |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Model Material 6 Usage | 13.025 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Support Material 1 | SUP706 |

| 00E0F434FC6C | 9/16/20 7:18 | 9/16/20 9:26 | 0.02:08:29 | 0.01:57:46 | Finish | Support Material 1 Usage | 95.823 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 1 | VeroPureWhite |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 1 Usage | 52.155 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 2 | Agilus30Clear |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 2 Usage | 34.051 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 3 | TissueMatrix |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 3 Usage | 15.961 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 4 | VeroMagenta-V |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 4 Usage | 17.285 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 5 | BoneMatrix |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 5 Usage | 18.246 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 6 | GelMatrix |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Model Material 6 Usage | 23.779 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Support Material 1 | SUP706 |

| 00E0F434FC6C | 9/11/20 7:52 | 9/11/20 11:29 | 0.03:37:48 | 0.03:32:18 | Finish | Support Material 1 Usage | 169.415 |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Anonymous

I don't know what this dataset has now to do with the original request. Seems like you didn't show us the reald dataset in your initinal post. If you can show us how your orignal dataset is looking like, and what exactly you need.

Here my best guess 🙂

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("vZdNa8MwDIb/Sul5c235I7ZvW1l3Kgy6dofSQ2BmC4R2JC3s58+hJc4YAY+qukXOx5M3UqTX2+2U8ye+UFIt5mY+vZu6mbAz4BOhPTfD2HmhYswZV55bL0UfKC8hBotqX7Wf8WB5eA/1ZFkeQ1OV9aS7cBOaw8upCW+f1TFMd3cU2Mm6LT9CPKENcw5oqN25h4+qPrWSz+tQNkTYXqyUzAhHQ5Vx6bVq21OIS031TUTttQrLuCTSqi5VvIzo/bG83xBhe7GgmDaChqrj0uNhT5pWnZRKJiwNtHvKc6gpdZqk0zBr1AjVdJTCxw+RQufBnDHQMcGdA+F14ZXJaMGX4iVB9jI5BQ4yhwwuNKXSMsM1BVNmDhhcaBIKTAKJUJU3XHCZvU7HNIxZBlRkVqvFJQ5EOm0okDltFheYPIKMN9+mYFenr69Dc/zdZlfrl4Lf5qP+5aVMamZBjlDFmaphEArRk6SXkWT7AM7vd62dR6YmNw9M6LGE4kJzzTwyNXl5xbges3y40EwrjwxNf6lmbtTc4kJzjTwyNUktGFia+s2aLcjI4eYM1FgjxIXmjBdk4nCzUhRje9Arof8fMOjAlE7jmBKxbnc/", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Printer = _t, Started = _t, Completed = _t, #"Total Job Time" = _t, #"Total Print Time" = _t, Status = _t, Attribute = _t, Value = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Printer", type text}, {"Started", type text}, {"Completed", type text}, {"Total Job Time", type duration}, {"Total Print Time", type duration}, {"Status", type text}, {"Attribute", type text}, {"Value", type text}}),

MaterialType = {"Model Material 1", "Model Material 2","Model Material 3", "Model Material 4", "Model Material 5", "Model Material 6", "Support Material 1"},

#"Pivoted Column" = Table.Pivot(#"Changed Type", List.Distinct(#"Changed Type"[Attribute]), "Attribute", "Value"),

AddRecordList = Table.AddColumn

(

#"Pivoted Column",

"RecordList",

(rec)=> List.Transform(MaterialType, (trans)=> Table.Column(Record.ToTable(Record.SelectFields(rec, List.Select(Table.ColumnNames(#"Pivoted Column"), (sel)=> Text.Start(sel,Text.Length(trans))= trans))), "Value"))

),

DeleteColumns = Table.RemoveColumns

(

AddRecordList,

List.Select(Table.ColumnNames(AddRecordList),(sel)=> List.AnyTrue(List.Transform(MaterialType, (trans)=> Text.Start(sel,Text.Length(trans))= trans)))

),

#"Expanded RecordList" = Table.ExpandListColumn(DeleteColumns, "RecordList"),

#"Extracted Values" = Table.TransformColumns(#"Expanded RecordList", {"RecordList", each Text.Combine(List.Transform(_, Text.From), "&&"), type text}),

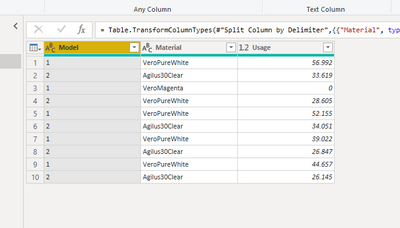

#"Split Column by Delimiter" = Table.SplitColumn(#"Extracted Values", "RecordList", Splitter.SplitTextByDelimiter("&&", QuoteStyle.Csv), {"RecordList.1", "RecordList.2"}),

#"Changed Type1" = Table.TransformColumnTypes(#"Split Column by Delimiter",{{"RecordList.1", type text}, {"RecordList.2", Int64.Type}}),

#"Renamed Columns" = Table.RenameColumns(#"Changed Type1",{{"RecordList.1", "Material"}, {"RecordList.2", "Material Usage"}})

in

#"Renamed Columns"

Copy paste this code to the advanced editor in a new blank query to see how the solution works.

If this post helps or solves your problem, please mark it as solution (to help other users find useful content and to acknowledge the work of users that helped you)

Kudoes are nice too

Have fun

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous , I came up with a more generic way of conversion if your dataset consists of more pairs of Material/Usage,

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WCkstyg8oLUoNz8gsSVXSUTI107O0NAIyHNMzc0qLjQ2cc1ITi4B8Y2M9M0NLpVgdiB7fxPTUvJJEoIQBEKObYmShZ2ZgCleMYoGRnqGpKTYLTPQMTA2x6jG21DMwwuYoIzM9CxNzrHpMTPTMTM2x6zE0AbotFgA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t]),

#"Unpivoted Columns" = Table.UnpivotOtherColumns(Source, {}, "Attribute", "Value"),

#"Added Custom" = Table.AddColumn(#"Unpivoted Columns", "Model", each Text.Select([Attribute], {"0".."9"})),

#"Added Index" = Table.AddIndexColumn(#"Added Custom", "Index", 0, 1, Int64.Type),

#"Integer-Divided Column" = Table.TransformColumns(#"Added Index", {{"Index", each Number.IntegerDivide(_, 2), Int64.Type}}),

#"Grouped Rows" = Table.Group(#"Integer-Divided Column", {"Model", "Index"}, {{"Count", each Record.FromList([Value], {"Material", "Usage"})}}),

#"Removed Columns" = Table.RemoveColumns(#"Grouped Rows",{"Index"}),

#"Expanded Count" = Table.ExpandRecordColumn(#"Removed Columns", "Count", {"Material", "Usage"}, {"Material", "Usage"})

in

#"Expanded Count"| Thanks to the great efforts by MS engineers to simplify syntax of DAX! Most beginners are SUCCESSFULLY MISLED to think that they could easily master DAX; but it turns out that the intricacy of the most frequently used RANKX() is still way beyond their comprehension! |

DAX is simple, but NOT EASY! |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I dont think this shows the final output I am looking for.

Essentially, this data is a report generated by our 3D printer showing how much material is used of each type. There could be the same material in different bays of the machine. Each row is an individual project/print.

I want to be able to take all this data and essentially add up the "Model Material # Usage" of each material. As shown below, VeroPureWhite was in multiple material bays.

| Model Material 1 | Model Material 1 Usage | Model Material 2 | Model Material 2 Usage | Model Material 3 | Model Material 3 Usage | Model Material 4 | Model Material 4 Usage |

| VeroPureWhite | 56.992 | Agilus30Clear | 33.619 | TissueMatrix | 18.039 | VeroMagenta-V | 24.561 |

| VeroMagenta | 0 | VeroPureWhite | 28.605 | Agilus30Clear | 22.325 | TissueMatrix | 9.522 |

| VeroPureWhite | 52.155 | Agilus30Clear | 34.051 | TissueMatrix | 15.961 | VeroMagenta-V | 17.285 |

| VeroPureWhite | 39.022 | Agilus30Clear | 26.847 | TissueMatrix | 8.506 | VeroMagenta-V | 12.31 |

| VeroPureWhite | 44.657 | Agilus30Clear | 26.145 | TissueMatrix | 8.535 | VeroMagenta-V | 9.665 |

| VeroPureWhite | 53.209 | Agilus30Clear | 35.149 | TissueMatrix | 16.944 | VeroMagenta-V | 18.458 |

| VeroPureWhite | 0.223 | Agilus30Clear | 0 | TissueMatrix | 0 | VeroMagenta-V | 0 |

| VeroPureWhite | 42.624 | Agilus30Clear | 31.298 | TissueMatrix | 11.424 | VeroMagenta-V | 12.515 |

| VeroPureWhite | 17.799 | Agilus30Clear | 2.116 | TissueMatrix | 2.116 | VeroMagenta-V | 2.116 |

| VeroPureWhite | 0.132 | Agilus30Clear | 0 | TissueMatrix | 0 | VeroMagenta-V | 0 |

| VeroPureWhite | 1.374 | Agilus30Clear | 1.058 | TissueMatrix | 1.058 | VeroMagenta-V | 1.058 |

| VeroPureWhite | 0.008 | Agilus30Clear | 0 | TissueMatrix | 0 | VeroMagenta-V | 0 |

| VeroPureWhite | 0.062 | Agilus30Clear | 0 | TissueMatrix | 0 | VeroMagenta-V | 0 |

| VeroPureWhite | 0 | Agilus30Clear | 0 | TissueMatrix | 0 | VeroMagenta-V | 0 |

| VeroPureWhite | 40.188 | Agilus30Clear | 24.96 | TissueMatrix | 10.583 | VeroMagenta-V | 10.658 |

| VeroPureWhite | 49.989 | Agilus30Clear | 49.728 | TissueMatrix | 49.728 | VeroMagenta-V | 49.728 |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @Anonymous

Or try this:

let

Source = Table.FromRecords(Json.Document(Binary.Decompress(Binary.FromText("5Zc9a8MwEIb/SvCcHrqTTpaylc6BDm06lA6GitZgGrATKJT+9yqQZDk1ibgpZPXHg/T4fc/260+zXL+nYbbsNmnsu2GGzaJZpXH9uB3Ty2e/Sc1cXDJ7nrqP1CzYQ4wkzlNG3H/0w3ay5mFI3SgRdEBYCx6jOG8z4qmfpm3Kh8b+WxLsgYABjJUEt9/HMl/0tenuVhLhDghywB5/5//K2ENOqDBFC2dEHi1QAG+4aOGMyKMGIrAkEe6syKOFCEx0QsJFiSBAlquoSoQDw6hKBEP0klCTCGyBAitl2AiGdPUgD8G1GhkB2Hidi5ysU+W4RIVz4Fnuo1IFunJHLldhyw25VEUE77WpYAtk5LyqqghnFbqhmSe3c7pYBHAclDIMEFmVCzl4KzTIm2sMGG0pCDzJh1CVBASKQZUEBFdYROWAYNTWIk/cNupqkd89KEddhYsyoOpLYkdQdwKt7q1xxZ1AsK2uEpg/H3SNKAKqCrEjqFNgjFzFjaQgb97fbAXKvxJXsfG3Pw==", BinaryEncoding.Base64),Compression.Deflate))),

trsp = Table.Transpose(Table.DemoteHeaders(Source)),

rmv_nm = Table.TransformColumns(trsp, {"Column1", each Text.Remove(_, {"0".."9"})}),

trans = List.Transform(Table.Split(rmv_nm, 2), each Table.PromoteHeaders(Table.Transpose(_))),

cmbTbls = Table.Combine(trans),

grp = Table.Group(cmbTbls, {"Model Material "}, {{"Model Material Usage", each List.Sum([Model Material Usage]), type number}})

in

grp- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

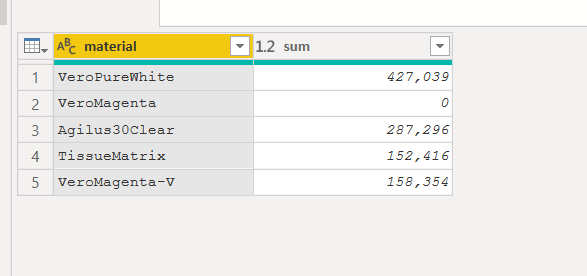

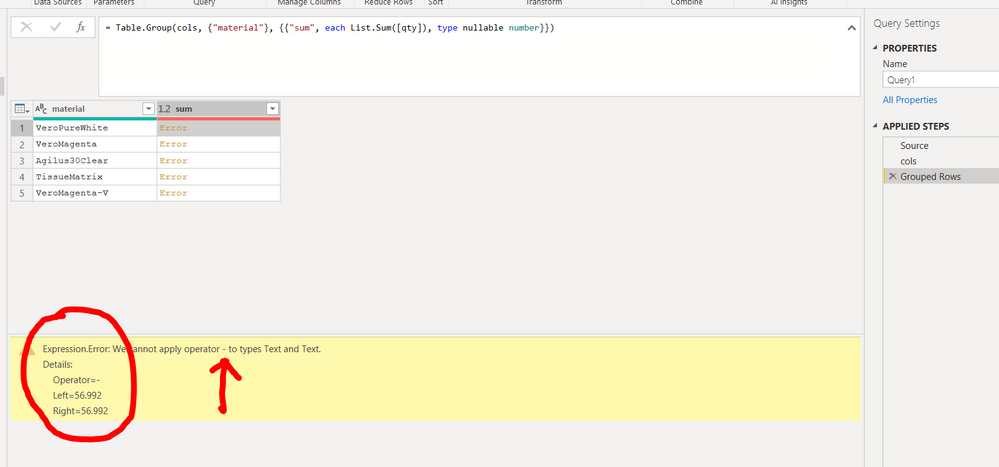

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("rZQxa8NADIX/i+dUSLqTfDeWzIEOJR2CBw8mNYQWnAT686sEN6T125rtTjL69J503u2a7TB9vpyn4e19PA3NqjGnWjUOz/vxcD4mXh+Gfop7SuRS4/A6Ho/nYdOfpvErrlKI0yV+qbTp98PHqX/axl0zmUvTrXb3qUjw/PE9Vgs5G8CqUlJbYiuZ6q34LwVKYqhUysQmQIFRdQEKpCUtBiGpEiuySZ1KbpeQQsaOGKFPICJncmsxQjKwJBDJAKKSO1ZhiZQrssoCgYYd25EzklEoW4EQJtUEGLwsz6AyY3OUXDPqXEhrAZ0LZYWdK5lge2L+bUX2xIaJLxk/4cVLuMaxN5LQFv3LG6HUImsk1h85M4cXxlzjuGvm8uiuo6g/3Ap++N7FxArSHn+7ClZCmKwk5C7H68b25kq1oLWLRKtggrf4X8ic6Lpv", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t, #"Model Material 3" = _t, #"Model Material 3 Usage" = _t, #"Model Material 4" = _t, #"Model Material 4 Usage" = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Model Material 1", type text}, {"Model Material 1 Usage", Int64.Type}, {"Model Material 2", type text}, {"Model Material 2 Usage", Int64.Type}, {"Model Material 3", type text}, {"Model Material 3 Usage", Int64.Type}, {"Model Material 4", type text}, {"Model Material 4 Usage", Int64.Type}}),

cols=Table.FromColumns({List.Combine(List.Alternate(Table.ToColumns(#"Changed Type"),1,1,1)),List.Combine(List.Alternate(Table.ToColumns(#"Changed Type"),1,1,0))},{"material","qty"}),

#"Grouped Rows" = Table.Group(cols, {"material"}, {{"sum", each List.Sum([qty]), type nullable number}})

in

#"Grouped Rows"

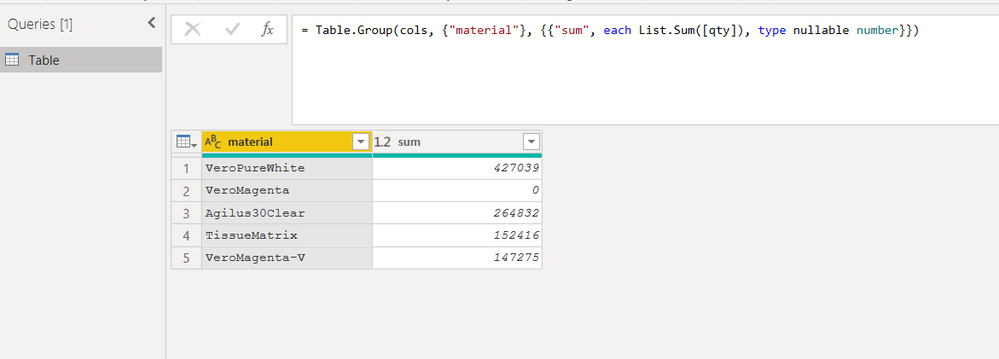

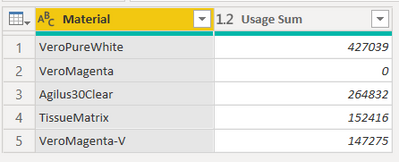

in your local environment the result should looks like

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

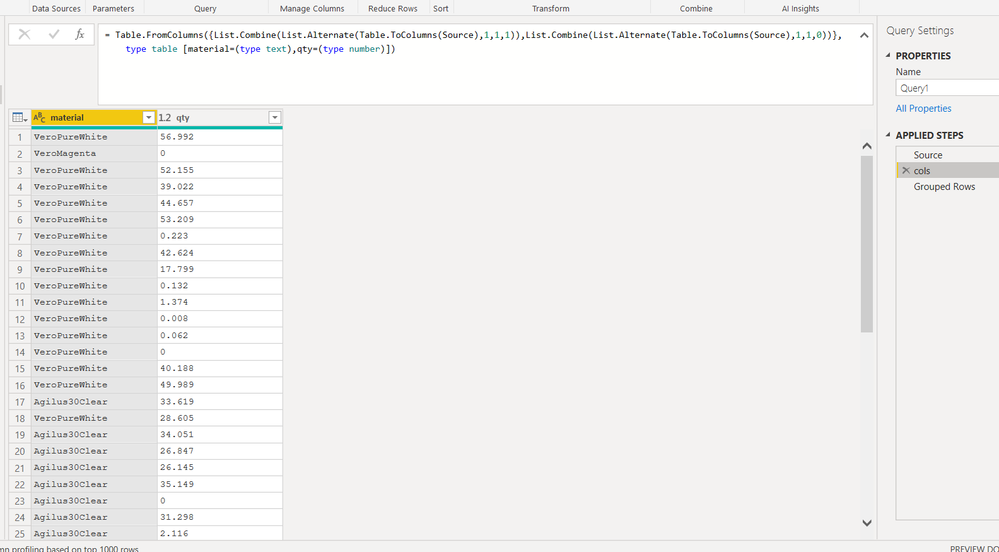

I tryied to use the "type table" expression to set the column name and also the values type, but seem doesn't work: in the following expression the value of column qty are seen as text values and not as number, and error is raised.

PS

does anyone have any idea where (meaning in what part of the function it can be generated) this error message comes from?

Why does it refer to a difference operation between two values?

PPS

I believe the reason lies in the way the groupby function works internally and this error message reveals this internal aspect

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @Anonymous

The type table expression in Power Query doesn't really set the data to a particular type, and even if it could, it wouldn't be easy to write as a dynamic expression.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

hi @ziying35 ,

it was just an attempt to shorten the code by removing the line needed to change type from text to type number of the "usage" column.

other solutions similar to yours:

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("rZQxa8NADIX/i+dUSLqTfDeWzIEOJR2CBw8mNYQWnAT686sEN6T125rtTjL69J503u2a7TB9vpyn4e19PA3NqjGnWjUOz/vxcD4mXh+Gfop7SuRS4/A6Ho/nYdOfpvErrlKI0yV+qbTp98PHqX/axl0zmUvTrXb3qUjw/PE9Vgs5G8CqUlJbYiuZ6q34LwVKYqhUysQmQIFRdQEKpCUtBiGpEiuySZ1KbpeQQsaOGKFPICJncmsxQjKwJBDJAKKSO1ZhiZQrssoCgYYd25EzklEoW4EQJtUEGLwsz6AyY3OUXDPqXEhrAZ0LZYWdK5lge2L+bUX2xIaJLxk/4cVLuMaxN5LQFv3LG6HUImsk1h85M4cXxlzjuGvm8uiuo6g/3Ap++N7FxArSHn+7ClZCmKwk5C7H68b25kq1oLWLRKtggrf4X8ic6Lpv", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t, #"Model Material 3" = _t, #"Model Material 3 Usage" = _t, #"Model Material 4" = _t, #"Model Material 4 Usage" = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Model Material 1", type text}, {"Model Material 1 Usage", Int64.Type}, {"Model Material 2", type text}, {"Model Material 2 Usage", Int64.Type}, {"Model Material 3", type text}, {"Model Material 3 Usage", Int64.Type}, {"Model Material 4", type text}, {"Model Material 4 Usage", Int64.Type}}),

#"Transposed Table" = Table.Combine(List.Transform(Table.Split(Table.Transpose(#"Changed Type"),2),each Table.Transpose(_)))

in

#"Transposed Table"

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("rZQxa8NADIX/i+dUSLqTfDeWzIEOJR2CBw8mNYQWnAT686sEN6T125rtTjL69J503u2a7TB9vpyn4e19PA3NqjGnWjUOz/vxcD4mXh+Gfop7SuRS4/A6Ho/nYdOfpvErrlKI0yV+qbTp98PHqX/axl0zmUvTrXb3qUjw/PE9Vgs5G8CqUlJbYiuZ6q34LwVKYqhUysQmQIFRdQEKpCUtBiGpEiuySZ1KbpeQQsaOGKFPICJncmsxQjKwJBDJAKKSO1ZhiZQrssoCgYYd25EzklEoW4EQJtUEGLwsz6AyY3OUXDPqXEhrAZ0LZYWdK5lge2L+bUX2xIaJLxk/4cVLuMaxN5LQFv3LG6HUImsk1h85M4cXxlzjuGvm8uiuo6g/3Ap++N7FxArSHn+7ClZCmKwk5C7H68b25kq1oLWLRKtggrf4X8ic6Lpv", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t, #"Model Material 3" = _t, #"Model Material 3 Usage" = _t, #"Model Material 4" = _t, #"Model Material 4 Usage" = _t]),

tabSplitColumns=Table.Combine(List.Transform(List.Split (Table.ColumnNames(Source),2), each Table.RenameColumns(Table.SelectColumns(Source,_),{{_{0},"material"},{_{1},"usage"}})))

in

tabSplitColumns

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("rZQxa8NADIX/i+dUSLqTfDeWzIEOJR2CBw8mNYQWnAT686sEN6T125rtTjL69J503u2a7TB9vpyn4e19PA3NqjGnWjUOz/vxcD4mXh+Gfop7SuRS4/A6Ho/nYdOfpvErrlKI0yV+qbTp98PHqX/axl0zmUvTrXb3qUjw/PE9Vgs5G8CqUlJbYiuZ6q34LwVKYqhUysQmQIFRdQEKpCUtBiGpEiuySZ1KbpeQQsaOGKFPICJncmsxQjKwJBDJAKKSO1ZhiZQrssoCgYYd25EzklEoW4EQJtUEGLwsz6AyY3OUXDPqXEhrAZ0LZYWdK5lge2L+bUX2xIaJLxk/4cVLuMaxN5LQFv3LG6HUImsk1h85M4cXxlzjuGvm8uiuo6g/3Ap++N7FxArSHn+7ClZCmKwk5C7H68b25kq1oLWLRKtggrf4X8ic6Lpv", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t, #"Model Material 3" = _t, #"Model Material 3 Usage" = _t, #"Model Material 4" = _t, #"Model Material 4 Usage" = _t])

in Table.FromColumns( List.Accumulate({0..Table.ColumnCount(Source)/2-1},{{},{}},(s,c)=>let ttc=Table.ToColumns(Source) in {s{0}&ttc{2*c} , s{1}& ttc{2*c+1}} ))

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Anonymous

this solution should work out for you. Is dynamic as well. Condition is that all columns with "Usage" in the name are put in one column and all other as well (This logic we can also change). After the final table is create, I applied a group function to sum all the same materials

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("rZQxa8NADIX/i+dUSLqTfDeWzIEOJR2CBw8mNYQWnAT686sEN6T125rtTjL69J503u2a7TB9vpyn4e19PA3NqjGnWjUOz/vxcD4mXh+Gfop7SuRS4/A6Ho/nYdOfpvErrlKI0yV+qbTp98PHqX/axl0zmUvTrXb3qUjw/PE9Vgs5G8CqUlJbYiuZ6q34LwVKYqhUysQmQIFRdQEKpCUtBiGpEiuySZ1KbpeQQsaOGKFPICJncmsxQjKwJBDJAKKSO1ZhiZQrssoCgYYd25EzklEoW4EQJtUEGLwsz6AyY3OUXDPqXEhrAZ0LZYWdK5lge2L+bUX2xIaJLxk/4cVLuMaxN5LQFv3LG6HUImsk1h85M4cXxlzjuGvm8uiuo6g/3Ap++N7FxArSHn+7ClZCmKwk5C7H68b25kq1oLWLRKtggrf4X8ic6Lpv", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t, #"Model Material 3" = _t, #"Model Material 3 Usage" = _t, #"Model Material 4" = _t, #"Model Material 4 Usage" = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Model Material 1", type text}, {"Model Material 1 Usage", Int64.Type}, {"Model Material 2", type text}, {"Model Material 2 Usage", Int64.Type}, {"Model Material 3", type text}, {"Model Material 3 Usage", Int64.Type}, {"Model Material 4", type text}, {"Model Material 4 Usage", Int64.Type}}),

ColumnsMaterial = List.Select(Table.ColumnNames(#"Changed Type"), each not Text.Contains(_, "Usage")),

ColumnsUsage = List.Select(Table.ColumnNames(#"Changed Type"), each Text.Contains(_, "Usage")),

CreateListMaterial = List.Combine(List.Transform(ColumnsMaterial, (column)=> Table.Column(#"Changed Type", column))),

CreateListUsage = List.Combine(List.Transform(ColumnsUsage, (column)=> Table.Column(#"Changed Type", column))),

CombineFinalTable = Table.FromColumns({CreateListMaterial, CreateListUsage}, {"Material", "Usage"}),

#"Grouped Rows" = Table.Group(CombineFinalTable, {"Material"}, {{"Usage Sum", each List.Sum([Usage]), type number}})

in

#"Grouped Rows"

Copy paste this code to the advanced editor in a new blank query to see how the solution works.

If you need any help for this, come back to me

If this post helps or solves your problem, please mark it as solution (to help other users find useful content and to acknowledge the work of users that helped you)

Kudoes are nice too

Have fun

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @Anonymous , maybe you're intended to convert the dataset like this?

If so, try the codes below,

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WCkstyg8oLUoNz8gsSVXSUTI107O0NAIyHNMzc0qLjQ2cc1ITi4B8Y2M9M0NLpVgdiB7fxPTUvJJEoIQBEKObYmShZ2ZgCleMYoGRnqGpKTYLTPQMTA2x6jG21DMwwuYoIzM9CxNzrHpMTPTMTM2x6zE0AbotFgA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Model Material 1" = _t, #"Model Material 1 Usage" = _t, #"Model Material 2" = _t, #"Model Material 2 Usage" = _t]),

#"Merged Columns" = Table.CombineColumns(Source,{"Model Material 1", "Model Material 1 Usage"},Combiner.CombineTextByDelimiter(";", QuoteStyle.None),"1"),

#"Merged Columns1" = Table.CombineColumns(#"Merged Columns",{"Model Material 2", "Model Material 2 Usage"},Combiner.CombineTextByDelimiter(";", QuoteStyle.None),"2"),

#"Unpivoted Columns" = Table.UnpivotOtherColumns(#"Merged Columns1", {}, "Model", "Value"),

#"Split Column by Delimiter" = Table.SplitColumn(#"Unpivoted Columns", "Value", Splitter.SplitTextByDelimiter(";", QuoteStyle.Csv), {"Material", "Usage"}),

#"Changed Type" = Table.TransformColumnTypes(#"Split Column by Delimiter",{{"Material", type text}, {"Usage", type number}})

in

#"Changed Type"| Thanks to the great efforts by MS engineers to simplify syntax of DAX! Most beginners are SUCCESSFULLY MISLED to think that they could easily master DAX; but it turns out that the intricacy of the most frequently used RANKX() is still way beyond their comprehension! |

DAX is simple, but NOT EASY! |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous What is the expected output or is that the expected output? Sorry, having trouble following, can you post sample data as text and expected output?

Not really enough information to go on, please first check if your issue is a common issue listed here: https://community.powerbi.com/t5/Community-Blog/Before-You-Post-Read-This/ba-p/1116882

Also, please see this post regarding How to Get Your Question Answered Quickly: https://community.powerbi.com/t5/Community-Blog/How-to-Get-Your-Question-Answered-Quickly/ba-p/38490

The most important parts are:

1. Sample data as text, use the table tool in the editing bar

2. Expected output from sample data

3. Explanation in words of how to get from 1. to 2.

@ me in replies or I'll lose your thread!!!

Instead of a Kudo, please vote for this idea

Become an expert!: Enterprise DNA

External Tools: MSHGQM

YouTube Channel!: Microsoft Hates Greg

Latest book!: The Definitive Guide to Power Query (M)

DAX is easy, CALCULATE makes DAX hard...

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.