
Splitting rows with specific columns

Hello Everyone and thank you in advance for your help,

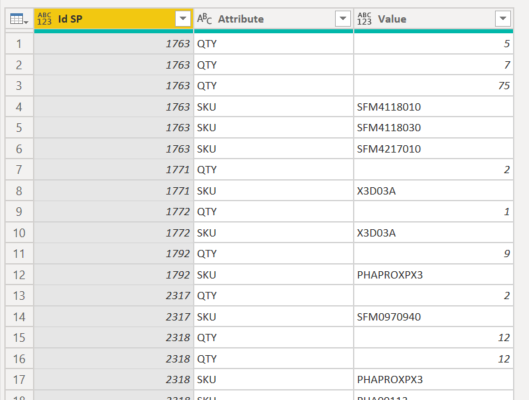

I'm struggling with this problem, here's an example:

I manage to split the rows using M but I can't find a way to make the split while keeping the QTY attached to each SKU. I would really appreaciate any help!

Thank you for your time,


Hi @Anonymous,

- Select the "Row" column and unpivot the other columns

- Select the resulting "Attribute" column and extract the first 3 characters from it

- Pivot that new column, using the unpivoted values column as the values column

Imke Feldmann (The BIccountant)
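
The steps above can be sketched in M roughly as follows. This is a minimal sketch, not the poster's own code. The sample table and its column names (Row, SKU1, SKU2, QTY1, QTY2) are assumptions made for illustration. It also splits the "Attribute" column into a name and a position instead of only extracting the first three characters, which is the change the original poster reports as the fix later in the thread: the position keeps each SKU paired with its QTY, so the pivot has exactly one value per cell.

```powerquery
let
    // Hypothetical sample data; the real column names and values are assumptions
    Source = #table(
        {"Row", "SKU1", "SKU2", "QTY1", "QTY2"},
        {
            {1, "A", "B", 10, 20},
            {2, "C", null, 30, null}
        }
    ),
    // Step 1: keep "Row" and unpivot every other column (null cells are dropped)
    Unpivoted = Table.UnpivotOtherColumns(Source, {"Row"}, "Attribute", "Value"),
    // Step 2: split e.g. "SKU1" into "SKU" (first 3 characters) and "1" (the rest)
    Split = Table.SplitColumn(
        Unpivoted,
        "Attribute",
        Splitter.SplitTextByPositions({0, 3}),
        {"Name", "Index"}
    ),
    // Step 3: pivot "Name" so that SKU and QTY become columns;
    // "Row" and "Index" remain as keys, giving one output row per pair
    Pivoted = Table.Pivot(Split, List.Distinct(Split[Name]), "Name", "Value"),
    // The helper column is no longer needed once the pairs are aligned
    Result = Table.RemoveColumns(Pivoted, {"Index"})
in
    Result
```

With the sample data above, this should return one row per Row and SKU pair that has a value (A with 10 and B with 20 for row 1, C with 30 for row 2). The empty pair in row 2 disappears because unpivoting drops null cells.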


let

Source = Table.FromRecords(Json.Document(Binary.Decompress(Binary.FromText("lZC9CsJAEAbf5atT3F9ArlNjTEyh0RxyiG8QtBIL8d3V4/aygoXXzS4sM+zpgf31DisLHDonYTFfLBEGBXu5jWNgzdgwLon7wb+PdQDFlpqxYRwPn0X0K+avkh9VHWP+DJAlFagsvU76/ria9IN3WXojSC+FyAowKcC7dgrYbdsUgE2zBhWga2r8/ICihFkqiGQ+WV/28ws=", BinaryEncoding.Base64),Compression.Deflate))),

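// Number of data rows
rows = Table.RowCount(Source),

// Number of SKU/QTY pairs: every column except the first, divided by two
ncols = (Table.ColumnCount(Source) - 1) / 2,

// For each row r and each pair number, build a record from the columns "SKU<n>" and "QTY<n>"
lstrecs = List.Transform({0..rows-1}, (r) => List.Transform({1..ncols}, each [Row = r+1, SKU = Table.Column(Source, "SKU" & Text.From(_)){r}, QTY = Table.Column(Source, "QTY" & Text.From(_)){r}])),

// Flatten the per-row lists into one list of records
recs = List.Combine(lstrecs),

tfr = Table.FromRecords(recs),

// Keep only pairs that have a quantity ("Filtrate righe" is Italian for "Filtered rows")
#"Filtrate righe" = Table.SelectRows(tfr, each ([QTY] <> null))

in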

#"Filtrate righe"

let

Source = Table.FromRecords(Json.Document(Binary.Decompress(Binary.FromText("lZC9CsJAEAbf5atT3F9ArlNjTEyh0RxyiG8QtBIL8d3V4/aygoXXzS4sM+zpgf31DisLHDonYTFfLBEGBXu5jWNgzdgwLon7wb+PdQDFlpqxYRwPn0X0K+avkh9VHWP+DJAlFagsvU76/ria9IN3WXojSC+FyAowKcC7dgrYbdsUgE2zBhWga2r8/ICihFkqiGQ+WV/28ws=", BinaryEncoding.Base64),Compression.Deflate))),

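// Same approach as above, but the columns are looked up by position instead of by name
cols = Table.ColumnNames(Source),

rows = Table.RowCount(Source),

// Number of SKU/QTY pairs: every column except the first, divided by two
ncols = (Table.ColumnCount(Source) - 1) / 2,

// cols{_} is the n-th SKU column and cols{ncols+_} the matching QTY column (position 0 is the row id)
lstrecs = List.Transform({0..rows-1}, (r) => List.Transform({1..ncols}, each [Row = r+1, SKU = Table.Column(Source, cols{_}){r}, QTY = Table.Column(Source, cols{ncols+_}){r}])),

// Flatten the per-row lists into one list of records
recs = List.Combine(lstrecs),

tfr = Table.FromRecords(recs),

// Keep only pairs that have a quantity
#"Filtrate righe" = Table.SelectRows(tfr, each ([QTY] <> null))

in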

#"Filtrate righe"

let

Source = Table.FromRecords(Json.Document(Binary.Decompress(Binary.FromText("lZC9CsJAEAbf5atT3F9ArlNjTEyh0RxyiG8QtBIL8d3V4/aygoXXzS4sM+zpgf31DisLHDonYTFfLBEGBXu5jWNgzdgwLon7wb+PdQDFlpqxYRwPn0X0K+avkh9VHWP+DJAlFagsvU76/ria9IN3WXojSC+FyAowKcC7dgrYbdsUgE2zBhWga2r8/ICihFkqiGQ+WV/28ws=", BinaryEncoding.Base64),Compression.Deflate))),

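// Turn the table into a list of column lists
ttc = Table.ToColumns(Source),

// Repeat the first (row id) column once per SKU/QTY pair; five pairs are hard-coded here
c1 = List.Repeat(ttc{0}, 5),

// Stack the five SKU columns (positions 1 to 5) into one long list
sku = List.Combine(List.Range(ttc, 1, 5)),

// Stack the five QTY columns (positions 6 to 10) in the same order
qty = List.Combine(List.Range(ttc, 6, 5)),

tfc = Table.FromColumns({c1, sku, qty}, {"idx", "sku", "qty"}),

// Restore the original row order ("Ordinate righe" is Italian for "Sorted rows")
#"Ordinate righe" = Table.Sort(tfc, {{"idx", Order.Ascending}}),

// Keep only pairs that have a SKU ("Filtrate righe" is Italian for "Filtered rows")
#"Filtrate righe" = Table.SelectRows(#"Ordinate righe", each ([sku] <> null))

in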

#"Filtrate righe"

Hi, @Anonymous

Try this:

let

Source = Table.FromRecords(Json.Document(Binary.Decompress(Binary.FromText("lZC9CsJAEAbf5atT3F9ArlNjTEyh0RxyiG8QtBIL8d3V4/aygoXXzS4sM+zpgf31DisLHDonYTFfLBEGBXu5jWNgzdgwLon7wb+PdQDFlpqxYRwPn0X0K+avkh9VHWP+DJAlFagsvU76/ria9IN3WXojSC+FyAowKcC7dgrYbdsUgE2zBhWga2r8/ICihFkqiGQ+WV/28ws=", BinaryEncoding.Base64),Compression.Deflate))),

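// For each row: drop the row id, split the rest into the SKU half and the QTY half (five columns each),
// zip them into {SKU, QTY} pairs, remove the empty pairs, then prepend the row id to each pair
trans = Table.ToList(Source, each List.Transform(List.RemoveItems(List.Zip(List.Split(List.Skip(_), 5)), {{null, null}}), (lst) => {_{0}} & lst)),

// Combine the per-row lists of {Row, SKU, QTY} into one table
result = Table.FromRows(List.Combine(trans), {"Row", "SKU", "QTY"})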

in

result


I managed to make it work! I was extracting just the first 3 letters of the column instead of splitting it!

Thank you so much! This will save me a ton of time!


Hey there!

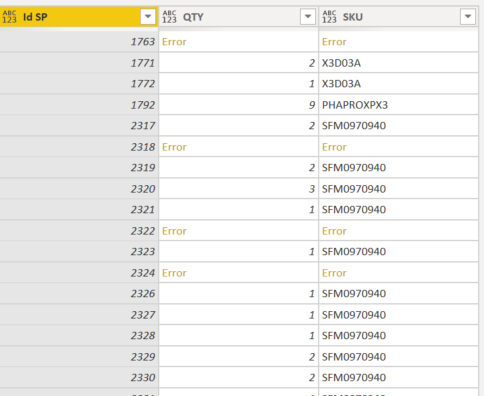

@ImkeFthis looks like a great and easy way to approach this problem but I might be doing something wrong, could you please advice?

Until this step it seems like the data is ready for being pivoted but it shows the following error:

Thank you so much for your help!!!
