- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Optimize Query with Large CSV

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Optimize Query with Large CSV

Hi,

I'm pretty new to power query, so forgive me if I'm missing something easy.

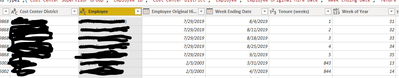

I have a source file (csv) that looks like this, over 1 million rows

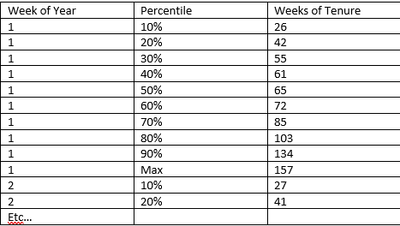

I'm trying to transform the data into this:

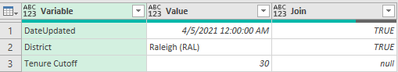

Lastly, I have a variables table I maintain in Excel, so I can change a variable and refresh.

First query: load csv (first table), join with variables to filter to the selected district:

let

Source = Csv.Document(File.Contents("C:\Users\TristenHannah\Dropbox (USICLLC)\USIC\Capacity Model\tixlochrsmaster.csv"),[Delimiter=",", Columns=13, Encoding=1252, QuoteStyle=QuoteStyle.None]),

#"Promoted Headers" = Table.PromoteHeaders(Source, [PromoteAllScalars=true]),

#"Changed Type" = Table.TransformColumnTypes(#"Promoted Headers",{{"Cost Center District", type text}, {"Cost Center Supervisor Group", type text}, {"Employee", type text}, {"Employee ID", Int64.Type}, {"Week Ending Date", type datetime}, {"Closed Locates", Int64.Type}, {"Worked Hours", Int64.Type}, {"Closed Tickets", Int64.Type}, {"Employee Original Hire Date", type datetime}, {"Employee Job Title", type text}, {"Tenure (weeks)", Int64.Type}, {"Week of Year", Int64.Type}, {"TPH", type text}}),

#"Added Custom" = Table.AddColumn(#"Changed Type", "join", each "District"),

#"Merged Queries" = Table.NestedJoin(#"Added Custom", {"Cost Center District", "join"}, Variables, {"Value", "Variable"}, "Variables", JoinKind.Inner),

#"Removed Columns" = Table.RemoveColumns(#"Merged Queries",{"Variables", "join"})

in

#"Removed Columns"

Second query: use the output of the first, calculate percentiles for each week of the year, group, (plan is to unpivot and normalize the data, but I'm not that far)

let

Source = tixlochrsmaster,

buffer = Table.Buffer(Source),

addcolpercentile1 = Table.AddColumn(buffer, "percentile1", (OT) => List.Percentile(Table.SelectRows(buffer,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.1)),

addcolpercentile2 = Table.AddColumn(addcolpercentile1, "percentile2", (OT) => List.Percentile(Table.SelectRows(addcolpercentile1,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.2)),

addcolpercentile3 = Table.AddColumn(addcolpercentile2, "percentile3", (OT) => List.Percentile(Table.SelectRows(addcolpercentile2,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.3)),

addcolpercentile4 = Table.AddColumn(addcolpercentile3, "percentile4", (OT) => List.Percentile(Table.SelectRows(addcolpercentile3,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.4)),

addcolpercentile5 = Table.AddColumn(addcolpercentile4, "percentile5", (OT) => List.Percentile(Table.SelectRows(addcolpercentile4,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.5)),

addcolpercentile6 = Table.AddColumn(addcolpercentile5, "percentile6", (OT) => List.Percentile(Table.SelectRows(addcolpercentile5,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.6)),

addcolpercentile7 = Table.AddColumn(addcolpercentile6, "percentile7", (OT) => List.Percentile(Table.SelectRows(addcolpercentile6,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.7)),

addcolpercentile8 = Table.AddColumn(addcolpercentile7, "percentile8", (OT) => List.Percentile(Table.SelectRows(addcolpercentile7,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.8)),

addcolpercentile9 = Table.AddColumn(addcolpercentile8, "percentile9", (OT) => List.Percentile(Table.SelectRows(addcolpercentile8,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.9)),

addcolpercentile10 = Table.AddColumn(addcolpercentile9, "percentile10", (OT) => List.Max(Table.SelectRows(addcolpercentile9,(IT) => IT[Week of Year] = OT[Week of Year] and IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"])),

#"Grouped Rows" = Table.Group(addcolpercentile10, {"Week of Year"}, {{"percentile1", each List.Average([percentile1]), type number}, {"percentile2", each List.Average([percentile2]), type number}, {"percentile3", each List.Average([percentile3]), type number}, {"percentile4", each List.Average([percentile4]), type number}, {"percentile5", each List.Average([percentile5]), type number}, {"percentile6", each List.Average([percentile6]), type number}, {"percentile7", each List.Average([percentile7]), type number}, {"percentile8", each List.Average([percentile8]), type number}, {"percentile9", each List.Average([percentile9]), type number}, {"percentile10", each List.Average([percentile10]), type number}})

in

#"Grouped Rows"

Every step to the second-to-last runs okay, but slow. The Grouped Rows step is super slow. Been running on my laptop for over an hour and nothing yet.

Any thoughts to make this more efficient?

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It is good that you got this working in the query editor (albeit slow), but this kind of analysis should be done on the DAX side after you load your data. You don't need to add the percentile columns or do the slow group step.

That would also allow you to load the data for all your districts at the same time and then use a slicer to view one at a time. You can then do other calculations too without doing a separate query again.

Regards,

Pat

Did I answer your question? Mark my post as a solution! Kudos are also appreciated!

To learn more about Power BI, follow me on Twitter or subscribe on YouTube.

@mahoneypa HoosierBI on YouTube

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

So I made the mistake of thinking that since the preview loaded in an acceptable time, that the query would refresh in excel in an acceptable time. Was not the case. So here's what I did:

I loaded into power bi and created a second table, grouping by district and week of year (since those are the dimensions I wish to derive percentiles off of). I only care about employees with > 26 weeks of tenure, so prior to grouping I filtered out those <=26. Post grouping, I also filtered out any results that had a count of less than 11 (so percentile.exc doesn't break if I go down to deciles)

I used this for the first quintile

firstquintile = CALCULATE(PERCENTILE.EXC(tixlochrsmaster[Tenure (weeks)],0.2),FILTER(tixlochrsmaster,tixlochrsmaster[Tenure (weeks)] > 26 && tixlochrsmaster[Cost Center District] = tenurepercentiles[Cost Center District] && tixlochrsmaster[Week of Year] = tenurepercentiles[Week of Year]))

I get an error saying "percentile value should be in the range 1/(n+1)... n/(n+1)"

Well the smallest N I have is 11, so that's .083-.917. 0.2 definitely falls in that range. The formula gives a result if the percentile used is 0.5.

EDIT:

I added a count with the exact same filters to troubleshoot and sorted ascending. My N is indeed large enough:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It is good that you got this working in the query editor (albeit slow), but this kind of analysis should be done on the DAX side after you load your data. You don't need to add the percentile columns or do the slow group step.

That would also allow you to load the data for all your districts at the same time and then use a slicer to view one at a time. You can then do other calculations too without doing a separate query again.

Regards,

Pat

Did I answer your question? Mark my post as a solution! Kudos are also appreciated!

To learn more about Power BI, follow me on Twitter or subscribe on YouTube.

@mahoneypa HoosierBI on YouTube

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks Pat. I downloaded DAX Studio today but I definitely need a lot of youtube time to figure out how to use it.

Meanwhile, all I had to do was step away from the computer and clear my head. A solution came easily enough. I reversed the order of operations. I did the group first, then merged the data and performed the percentile calculations. Still takes a few minutes to run but progress. I'm not really a programmer or anything, just some guy trying to solve a business problem.

let

Source = tixlochrsmaster,

buffer = Table.Buffer(Source),

#"Grouped Rows" = Table.Group(buffer, {"Week of Year"}, {{"Count", each Table.RowCount(_), Int64.Type}}),

#"Merged Queries" = Table.NestedJoin(#"Grouped Rows", {"Week of Year"}, tixlochrsmaster, {"Week of Year"}, "tixlochrsmaster", JoinKind.LeftOuter),

#"Removed Columns" = Table.RemoveColumns(#"Merged Queries",{"Count"}),

addcolperc1 = Table.AddColumn(#"Removed Columns", "percentile1", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.1)),

addcolperc2 = Table.AddColumn(addcolperc1, "percentile2", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.2)),

addcolperc3 = Table.AddColumn(addcolperc2, "percentile3", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.3)),

addcolperc4 = Table.AddColumn(addcolperc3, "percentile4", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.4)),

addcolperc5 = Table.AddColumn(addcolperc4, "percentile5", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.5)),

addcolperc6 = Table.AddColumn(addcolperc5, "percentile6", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.6)),

addcolperc7 = Table.AddColumn(addcolperc6, "percentile7", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.7)),

addcolperc8 = Table.AddColumn(addcolperc7, "percentile8", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.8)),

addcolperc9 = Table.AddColumn(addcolperc8, "percentile9", each List.Percentile(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"],0.9)),

addcolperc10 = Table.AddColumn(addcolperc9, "percentile10", each List.Max(Table.SelectRows([tixlochrsmaster], (IT) => IT[#"Tenure (weeks)"] > 26)[#"Tenure (weeks)"])),

#"Removed Columns1" = Table.RemoveColumns(addcolperc10,{"tixlochrsmaster"}),

#"Unpivoted Columns" = Table.UnpivotOtherColumns(#"Removed Columns1", {"Week of Year"}, "Attribute", "Value")

in

#"Unpivoted Columns"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Good to hear you improved the refresh time, but I still would encourage you to do these analyses with DAX measures (I didn't mean that you needed DAX studio). Doing this kind of analysis in the query will make it technically more challenging and greatly reduce the flexibility of your reports.

As a fellow non-programmer, it's also good to hear you plan to continue to learn more Power BI.

Regards,

Pat

Did I answer your question? Mark my post as a solution! Kudos are also appreciated!

To learn more about Power BI, follow me on Twitter or subscribe on YouTube.

@mahoneypa HoosierBI on YouTube

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.