- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Not getting the exact number of records

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Not getting the exact number of records

Hi,

my problem is, that i am not getting the exact number of records when i load it into my Excel Sheet.

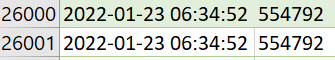

It should return 25.429 records. But i am getting 26.000:

There were also 26.000 records loaded:

But actually I should just get 25.429.

Do i have to use an offset and a limit?

Any other idea?

this is my code:

thanks in advance

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @BA_Pete,

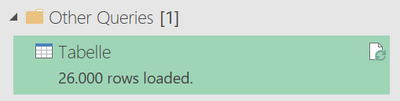

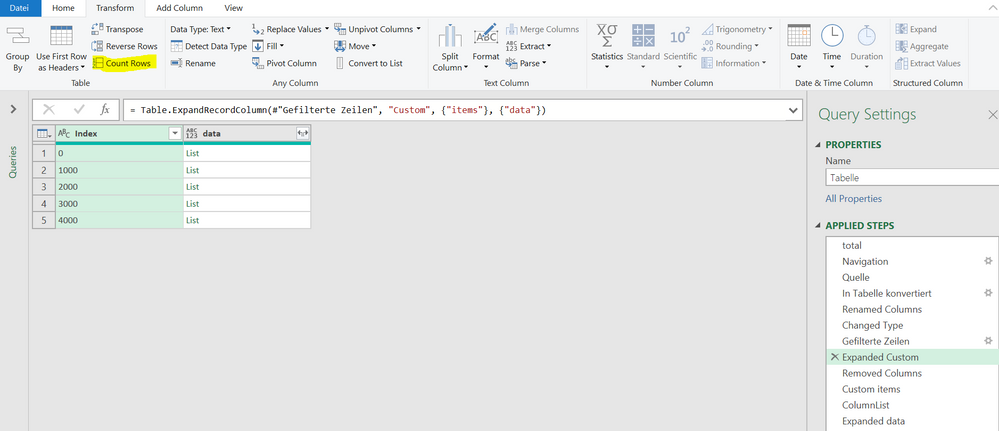

yes this is the number of rows i am getting in #"total" ... more precise in #"number" i get 25,429.

the problem is that i am getting 5000 rows here:

- #"Custom items"

- #"Expanded data"

and here 5:

- #"Expanded Custom"

i am not sure if i did it right but i just clicked "count rows" at the right place:

also i am not sure if i use the index correctly. Because i think up to there i have a rounded number of rows.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @julhelp ,

It sounds like you've done the row count correctly as you have identified that your nested object expansions are adding more rows to the original total.

It means that your nested objects have more than one row/value in them. Therefore, when you expand the nested object, your #"Number" rows have to duplicate in order to accomodate the extra rows from the nested object.

I'm afraid I can't really help you beyond this point. You're going to need to go down your nested object list and click on each cell to see how many records are in each nested object. Any that contain more than 1 record need to be amended as per your desired outcome.

You *MAY* be able to use something like this:

Table.RowCount([Custom])

List.Count([data])

...in a custom column to count the number of rows in each nested object before expanding, but I haven't tested.

Pete

Now accepting Kudos! If my post helped you, why not give it a thumbs-up?

Proud to be a Datanaut!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I think the index is not working well because there is the same record any time for the index: 0,1000,2000,3000,4000...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @julhelp ,

I don't know what the relevance of the index is at all.

Try this:

1) Select your #"number" step. Select the table unique ID column. Go to Home tab > Keep Rows > Keep Duplicates. This should resolve to an empty table (assuming unique records at source).

Delete the keep duplicates step.

2) Select your #"Expanded Custom" step. Repeat step 1. This will show you which records are creating duplicate rows in your table, if any.

Delete the keep duplicates step.

3) Do the same as step 2 for your #"Custom items" and #"Expanded data" steps. This will show you cumulative duplicates created by each of these steps in aggregate.

4) Investigate and resolve as necessary.

Pete

Now accepting Kudos! If my post helped you, why not give it a thumbs-up?

Proud to be a Datanaut!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @julhelp ,

On what basis are you expecting to have 25,429 rows? Is this the number of rows in the source table (#"total")?

If so, then you appear to be introducing duplicate rows into your source table due to crossjoins. You should go through each step in your query taking a row count, paying particular attention to the following steps:

- #"Expanded Custom"

- #"Custom items"

- #"Expanded data"

If the row count is different before/after a specific step, then that step is creating the duplicates. I have flagged the above stps to focus on as they all expanded previously nested data, so are the most likely source of crossjoin duplications.

Pete

Now accepting Kudos! If my post helped you, why not give it a thumbs-up?

Proud to be a Datanaut!

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.