- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Re: Making Data Model From Flat File

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Making Data Model From Flat File

Dear Community,

I have 1 flat CSV file which I'd like to break down by it's dimensions and create star schema in my data model.

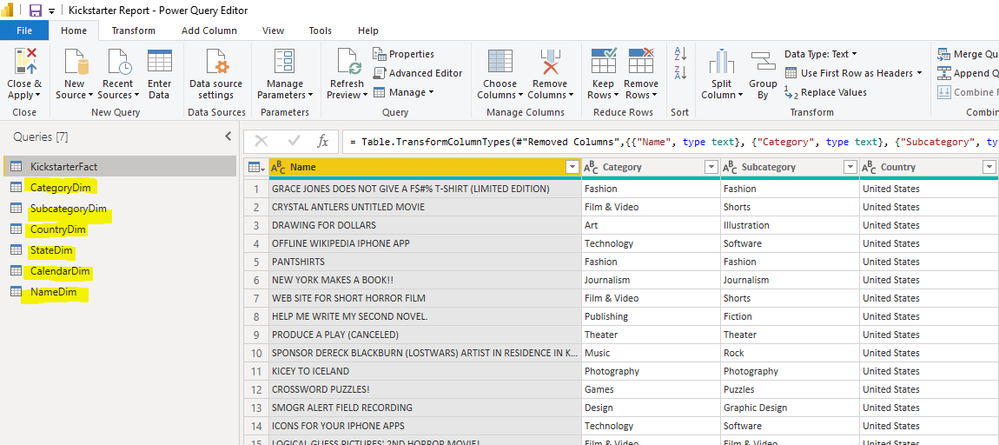

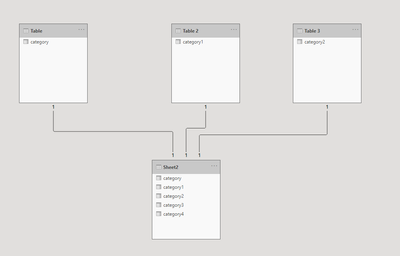

I loaded the file in power query and with the "Reference" function i created the Dimension tables as shown below:

NOTE: Each one of the dimension tables was created as it follows: right click on the fact table-> reference->remove other columns->remove duplicates.

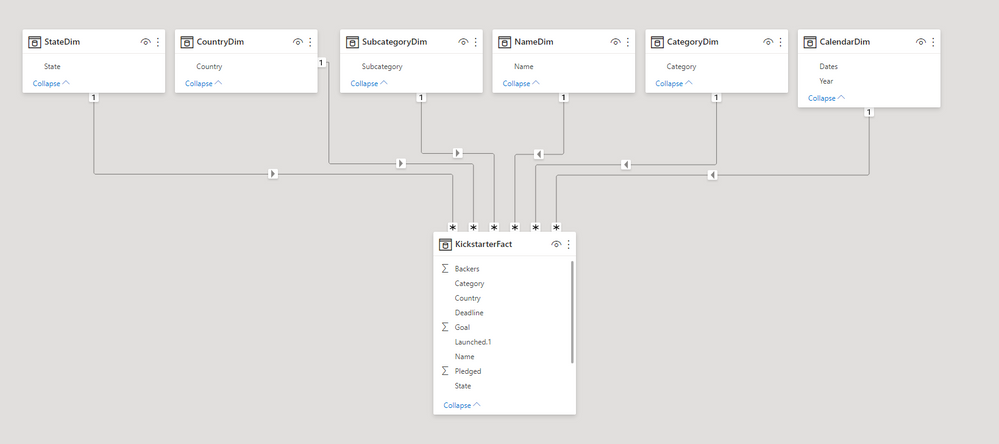

My data model looks fine and exactly how i imagined it:

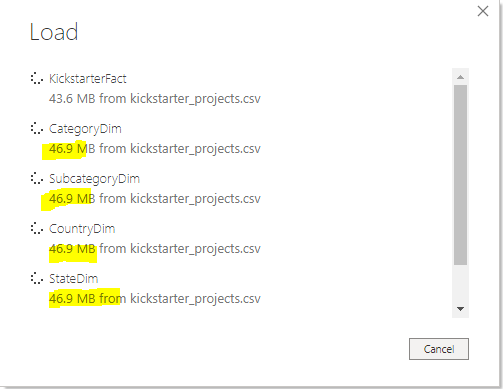

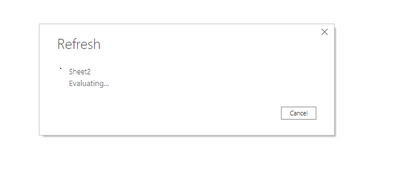

The only problem i got is loading. The original (fact table) CSV is 46MB file... When i load it with the dimensions it loads up 6 tables by 46MB (the calendar one was created from blank query).Please see here:

Can anyone help me understand why this is happening? For example in the category table i have 15 rows, but it still loads 46MB. I've never experienced something like that so far (maybe because i was using smaller files).But just to create a simple measure now it takes more than 30 seconds.

All due respect,

Atanas

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Anonymous,

How about creating the dim tables with dax formular rather than in power query?

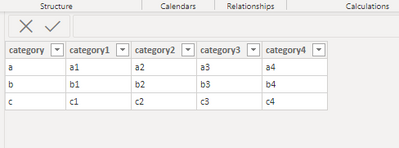

For example,my oringinal table is as below from an Excel file:

To achieve what you need:

Create dim tables as below:

Table = VALUES(Sheet2[category])Then create relationships as you need:

Pls note that the direction should be from fact table side to dim table side.

Then you would see when you refresh the table,it will only load one time:

Best Regards,

Kelly

Did I answer your question? Mark my post as a solution!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous It is not loading 46mb. It is processing the 46mb file to load the data. The actual 15 record table is probably a few KB at most. You could validate this using DAX Studio and the Vertipaq Analyzer feature which will tell you the size of each loaded table.

this is an issue working with flat tables. With no database on the back end power query has to fully process the entire file even if you have filtered it to just one record.

But it is best practice to do this in PQ. Consider if your csv file has the customer number and name in it.

You could create a DIM table of that info, but by using DAX, you cannot remove that redundant customer name info from the FACT table. You can in power query. Smaller model over all.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@v-kelly-msft and @edhans - thank you both for your information.

I will try it with Values. That will work perfectly. This is also what Aaron from Maven Analytics recommends in his Advanced DAX course. Using Values is easy and cool way to do it.

@edhans - will try to get rid of my current labelling of the tables 🙂 I learned to arrange my tables like so from Chris Dutton of Maven Analytics.

Thank you one more time and wish you all the best,

Atanas

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I'm glad you got an answer you can work with @Anonymous but long term I would advise against working in DAX to shape and model your data. For the very simple example you have shown, VALUES() works, but when the data is more complex - for example, you have additional fields beyond the key field in the flat table, Power Query is the better practice as it can ensure uniqueness of the first key, then add the relevant fields (think Customer Key is unique, and customer name may be, but as you add more fields like state or zip code, those will not be unique), and the DAX gets a bit crazy. From the DAX master himself:

The earlier you fix your data, the better. Delaying data transformation along data supply chain creates a technical debt, which you pay only on data you actually consume.

Additionally, the DAX method will not allow you to remove the redundant data from the flat table, whereas Power Query will if you use the approach I listed above - summarized:

- Connect to the flat table

- Disable load on it

- Create as many references as necessary for all subsequent DIM and FACT tables, with each table only keeping the necessary columns to create a Star Schema.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@edhans- Thank you very much. I will consider and experiment with your suggestion.

I understand VALUES is not the best option, at first i was just surprised by the total MB of the data model. Usually I am not Power BI user, but Power Pivot. And when i create similar dim tables from a flat file Excel loads only the rows I told Power Query to filter. So the cardinality of the tables is low and performance is ok. I was truly shocked to see table with 15 rows in it to be 46MB.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous It is not loading 46mb. It is processing the 46mb file to load the data. The actual 15 record table is probably a few KB at most. You could validate this using DAX Studio and the Vertipaq Analyzer feature which will tell you the size of each loaded table.

this is an issue working with flat tables. With no database on the back end power query has to fully process the entire file even if you have filtered it to just one record.

But it is best practice to do this in PQ. Consider if your csv file has the customer number and name in it.

You could create a DIM table of that info, but by using DAX, you cannot remove that redundant customer name info from the FACT table. You can in power query. Smaller model over all.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Great @Anonymous - glad we finally got to your question. 😁

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Anonymous,

How about creating the dim tables with dax formular rather than in power query?

For example,my oringinal table is as below from an Excel file:

To achieve what you need:

Create dim tables as below:

Table = VALUES(Sheet2[category])Then create relationships as you need:

Pls note that the direction should be from fact table side to dim table side.

Then you would see when you refresh the table,it will only load one time:

Best Regards,

Kelly

Did I answer your question? Mark my post as a solution!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

That is how it works. Power Query doesn't start at the first query and work down, it starts at the bottom (last) query and works backwards, so 6 tables from 1 will cause it to process that first source table 6 times.

One thing you might want to try that may help is all of your DIM tables (except calendar) are based on the fact table, and the fact table is itself loading.

Instead, mark the fact table to "disable load" and create a reference from the original FACT table to a new one that is just a pointer. Probably no further transformations. If you go to Query Dependency View, you'd see your Load Disabled FACT table at the top, and all DIMS and new FACT table at the bottom.

And someting to consider: Get rid of DIM and FACT in your table names. Will not help performance one bit, but it is best practice to use table and field names in the language of your audience with no tech speak or CamelCase. If two words, use a space. "Sales Data" - not SalesData, or SalesFACT or any other nerdy stuff. 😁

I love you have your DIMs at the top and FACT on the bottom. Learned from Rob Collie or Matt Allington? Filters flow downhill! 👍

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI ReportingHelpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.