- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Power Query

- Extract max value for every column in a table

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Extract max value for every column in a table

Hi all,

Given a table with N columns Col_1, Col_2, ..., Col_N, and M rows, with N arbitrarily large, what is the best way to get a table with one row and N columns in which the value for Col_j j=1,2,..,N is the maximum in that column in the initial table.

I'm interested in a solution that can handle large N and that doesn't use the names of the columns explicitly; perhaps using Table.ColumnNames or similar. Sample PBIX attached

Many thanks

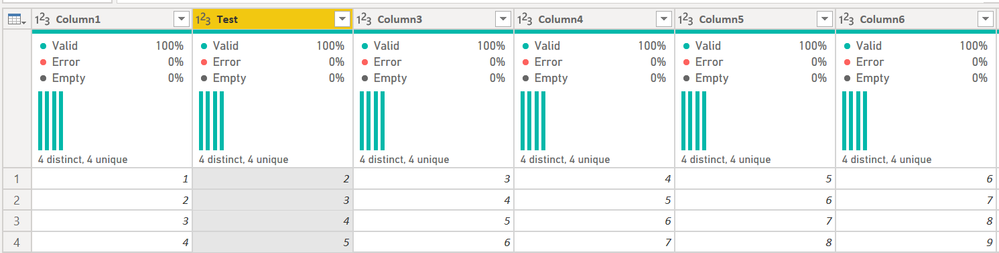

Initial table

| Column1 | Column2 | Column3 | Column4 | Column5 | Column6 | .... | ColumnN |

| 1 | 5 | 3 | 4 | 5 | 6 | 145 | |

| 2 | 3 | 6 | 7 | 6 | 9 | 12 | |

| 3 | 4 | 5 | 6 | 7 | 8 | 321 | |

| 4 | 2 | 4 | 5 | 8 | 2 | 33 |

Result table

| Column1 | Column2 | Column3 | Column4 | Column5 | Column6 | ..... | ColumnN |

| 4 | 5 | 6 | 7 | 8 | 9 | 321 |

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @AlB

you can use Table.Profile, then taking the column Column and Max, Transpose it. For the sum take Record.FieldValues and then sum it. Here an example

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlRQ0lEyBRHGIMIEzjUDEQoKSrE60UpGcHmwqDmcZYmkCJt+sEoLJEVgeSNUlRZwMZCiWAA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Column1 " = _t, #"Column2 " = _t, #"Column3 " = _t, #"Column4 " = _t, #"Column5 " = _t, #"Column6 " = _t, #" .... " = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1 ", Int64.Type}, {"Column2 ", Int64.Type}, {"Column3 ", Int64.Type}, {"Column4 ", Int64.Type}, {"Column5 ", Int64.Type}, {"Column6 ", Int64.Type}, {" .... ", type text}}),

Table = Table.Profile(#"Changed Type"),

Select = Table.Transpose(Table.SelectColumns(Table, {"Column", "Max"})),

#"Promoted Headers" = Table.PromoteHeaders(Select, [PromoteAllScalars=true]),

#"Changed Type1" = Table.TransformColumnTypes(#"Promoted Headers",{{" .... ", type text}, {"Column1 ", Int64.Type}, {"Column2 ", Int64.Type}, {"Column3 ", Int64.Type}, {"Column4 ", Int64.Type}, {"Column5 ", Int64.Type}, {"Column6 ", Int64.Type}}),

#"Added Custom" = Table.AddColumn(#"Changed Type1", "Sum Of Max", (add)=> List.Sum(List.Transform(Record.FieldValues(add), each if Value.Is(_,type number) then _ else Number.FromText(_))))

in

#"Added Custom"

Copy paste this code to the advanced editor in a new blank query to see how the solution works.

If this post helps or solves your problem, please mark it as solution (to help other users find useful content and to acknowledge the work of users that helped you)

Kudoes are nice too

Have fun

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @AlB

Another solution, but I like @Jimmy801 approach with Table.Profile, neat.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlTSUTIFYmMgNoGyzYDY0MRUKVYnWskIKgcSM4fSliB5I7A0ujaQEguQFiNDsDxIzghJjQWUb2ysFBsLAA==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, ColumnN = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"ColumnN", Int64.Type}}),

FindMax = List.Transform(Table.ToColumns(#"Changed Type"), each List.Accumulate( _ ,0,(state,current)=>if state>current then state else current)),

#"Converted to Table" = Table.FromList(FindMax, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

#"Transposed Table" = Table.Transpose(#"Converted to Table"),

Final = Table.RenameColumns(#"Transposed Table" , List.Zip({Table.ColumnNames(#"Transposed Table"), Table.ColumnNames(#"Changed Type")}))

in

FinalPhil

Did I answer your question? Then please mark my post as the solution.

If I helped you, click on the Thumbs Up to give Kudos.

Blog :: YouTube Channel :: Connect on Linkedin

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @AlB - I know you already have two solutions, but I gave it some thought and came up with this. I needed this pattern for another potential project so thought I would work through it as well.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("zY65EcAwDMN2Ue0ipPPO4vP+a4TwFC6gCtBxjFK1cujhDFe4wxPe8AUdHEyhClfIwha68EUgClN4/aYwhSlMYQpTmMIU/ajZxi5TcrT27LIlx2vQNmNyes35Aw==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, Column7 = _t, Column8 = _t, Column9 = _t, Column10 = _t, Column11 = _t, Column12 = _t, Column13 = _t, Column14 = _t, Column15 = _t, Column16 = _t, Column17 = _t, Column18 = _t, Column19 = _t, Column20 = _t, Column21 = _t, Column22 = _t, Column23 = _t, Column24 = _t, Column25 = _t, Column26 = _t, Column27 = _t, Column28 = _t, Column29 = _t, Column30 = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"Column7", Int64.Type}, {"Column8", Int64.Type}, {"Column9", Int64.Type}, {"Column10", Int64.Type}, {"Column11", Int64.Type}, {"Column12", Int64.Type}, {"Column13", Int64.Type}, {"Column14", Int64.Type}, {"Column15", Int64.Type}, {"Column16", Int64.Type}, {"Column17", Int64.Type}, {"Column18", Int64.Type}, {"Column19", Int64.Type}}),

#"Renamed Columns" = Table.RenameColumns(#"Changed Type",{{"Column2", "Test"}}),

varColumnNames = List.Buffer(Table.ColumnNames(#"Renamed Columns")),

varColumnCount = List.Count(varColumnNames),

MaxValues =

List.Generate(

()=> [varMaxValue = List.Max(Table.Column(#"Renamed Columns", Table.ColumnNames(#"Renamed Columns"){0})), varCounter = 0],

each [varCounter] < varColumnCount,

each [varMaxValue = List.Max(Table.Column(#"Renamed Columns", Table.ColumnNames(#"Renamed Columns"){varCounter})), varCounter = [varCounter] + 1],

each [varMaxValue]

),

MaxTable = Table.FromColumns({MaxValues}),

#"Transposed Table" = Table.Transpose(MaxTable, Table.ColumnNames(#"Renamed Columns"))

in

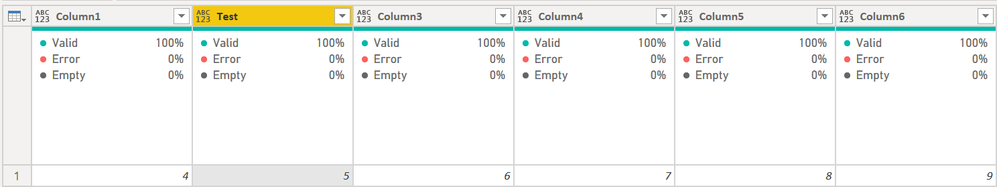

#"Transposed Table"Turns this:

to this:

Surprisingly it worked on the text values in your columns 23-33. I was expecting List.Max to fail there, but it it didn't.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @AlB , you might want to try this single-line solution in spite that this thread is already marked "solved",

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("zY65EcAwDMN2Ue0ipPPO4vP+a4TwFC6gCtBxjFK1cujhDFe4wxPe8AUdHEyhClfIwha68EUgClN4/aYwhSlMYQpTmMIU/ajZxi5TcrT27LIlx2vQNmNyes35Aw==", BinaryEncoding.Base64), Compression.Deflate))),

#"Changed Type" = Function.Invoke(Table.TransformColumnTypes, {Source, List.Accumulate(Table.ColumnNames(Source), {}, (s,c)=>s&{{c,Int64.Type}})}),

Solution = Table.FromColumns(List.Transform(Table.ToColumns(#"Changed Type"), each {List.Max(_)}), Table.ColumnNames(Source))

in

Solution

| Thanks to the great efforts by MS engineers to simplify syntax of DAX! Most beginners are SUCCESSFULLY MISLED to think that they could easily master DAX; but it turns out that the intricacy of the most frequently used RANKX() is still way beyond their comprehension! |

DAX is simple, but NOT EASY! |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @AlB , you might want to try this single-line solution in spite that this thread is already marked "solved",

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("zY65EcAwDMN2Ue0ipPPO4vP+a4TwFC6gCtBxjFK1cujhDFe4wxPe8AUdHEyhClfIwha68EUgClN4/aYwhSlMYQpTmMIU/ajZxi5TcrT27LIlx2vQNmNyes35Aw==", BinaryEncoding.Base64), Compression.Deflate))),

#"Changed Type" = Function.Invoke(Table.TransformColumnTypes, {Source, List.Accumulate(Table.ColumnNames(Source), {}, (s,c)=>s&{{c,Int64.Type}})}),

Solution = Table.FromColumns(List.Transform(Table.ToColumns(#"Changed Type"), each {List.Max(_)}), Table.ColumnNames(Source))

in

Solution

| Thanks to the great efforts by MS engineers to simplify syntax of DAX! Most beginners are SUCCESSFULLY MISLED to think that they could easily master DAX; but it turns out that the intricacy of the most frequently used RANKX() is still way beyond their comprehension! |

DAX is simple, but NOT EASY! |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Great. Yet another option. Very interesting. Thanks

It works on the text columns as well because in the initial table you are using, the "alphabetical" sorting (based on their ASCII values) coincides in all cases with the numerical sorting. But if for instance in column 30 you had

Column30

| 30 |

| 31 |

| 321 |

| 33 |

it would still pick the 33 instead of the 321

Actually, it wasn't my intention to have text columns. I just pasted the 30 columns on "Enter Data" and the automatically generated #"Changed Type" step changed the type of only the first 19 columns to Int. I only realised that later. Not sure if that is the usual behavior? Any ideas?

Thanks

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Ahhh... yes, you are right. I think now that List.Max() works on text as alpha sort, so "33" > "321." As for automaticallly changing data types, I don't know. I turned that off on unstructured data months ago when that new option was given to us in global settings, so I always manually set my data types, and this is one of the reasons. You assume it does everything, and doesn't. Or you go back and add more data, and it doesn't redo the Change Type on the full new dataset, just keeps the old change type there for the first X columns.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @AlB - I know you already have two solutions, but I gave it some thought and came up with this. I needed this pattern for another potential project so thought I would work through it as well.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("zY65EcAwDMN2Ue0ipPPO4vP+a4TwFC6gCtBxjFK1cujhDFe4wxPe8AUdHEyhClfIwha68EUgClN4/aYwhSlMYQpTmMIU/ajZxi5TcrT27LIlx2vQNmNyes35Aw==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, Column7 = _t, Column8 = _t, Column9 = _t, Column10 = _t, Column11 = _t, Column12 = _t, Column13 = _t, Column14 = _t, Column15 = _t, Column16 = _t, Column17 = _t, Column18 = _t, Column19 = _t, Column20 = _t, Column21 = _t, Column22 = _t, Column23 = _t, Column24 = _t, Column25 = _t, Column26 = _t, Column27 = _t, Column28 = _t, Column29 = _t, Column30 = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"Column7", Int64.Type}, {"Column8", Int64.Type}, {"Column9", Int64.Type}, {"Column10", Int64.Type}, {"Column11", Int64.Type}, {"Column12", Int64.Type}, {"Column13", Int64.Type}, {"Column14", Int64.Type}, {"Column15", Int64.Type}, {"Column16", Int64.Type}, {"Column17", Int64.Type}, {"Column18", Int64.Type}, {"Column19", Int64.Type}}),

#"Renamed Columns" = Table.RenameColumns(#"Changed Type",{{"Column2", "Test"}}),

varColumnNames = List.Buffer(Table.ColumnNames(#"Renamed Columns")),

varColumnCount = List.Count(varColumnNames),

MaxValues =

List.Generate(

()=> [varMaxValue = List.Max(Table.Column(#"Renamed Columns", Table.ColumnNames(#"Renamed Columns"){0})), varCounter = 0],

each [varCounter] < varColumnCount,

each [varMaxValue = List.Max(Table.Column(#"Renamed Columns", Table.ColumnNames(#"Renamed Columns"){varCounter})), varCounter = [varCounter] + 1],

each [varMaxValue]

),

MaxTable = Table.FromColumns({MaxValues}),

#"Transposed Table" = Table.Transpose(MaxTable, Table.ColumnNames(#"Renamed Columns"))

in

#"Transposed Table"Turns this:

to this:

Surprisingly it worked on the text values in your columns 23-33. I was expecting List.Max to fail there, but it it didn't.

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI Reporting- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks. I hadn't used Table.Group( ) without a group before

Awesome. Thanks. So that's like the pandas describe method. It solves the problem at hand neatly but going a bit further, this would be limited to the functions offered by Table.Profile

Great. Thank you. I like the Table.ToColumns. Neat and allows for the customization of the function to be applied to each column. But wouldn't List.Max just be enough, instead of the List.Accumulate?

FindMax = List.Transform(Table.ToColumns(#"Changed Type"), each List.Max(_))

I came up with another solution myself that uses Expression.Evaluate but Table.ToColumns renders that unnecessary:

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlRQ0lEyBRHGIMIEzjUDEYYmpkqxOtFKRnAFYGFzOMsSrMoIrAibAWCVFmDdRoZgVWAFRqhKLeBixsZKsbEA", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, ColumnN = _t]),

ChangedType = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"ColumnN", Int64.Type}}),

colnames_ = Table.ColumnNames(ChangedType),

list_ = List.Transform(colnames_,each List.Max(Expression.Evaluate("ChangedType[" & _ & "]", [_=_, ChangedType=ChangedType]))),

res_ = #table(colnames_, {list_}),

ChangedType1 = Table.TransformColumnTypes(res_, List.Zip({colnames_, List.Repeat({Int64.Type},List.Count(colnames_))}))

in

ChangedType1

Many thanks

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @AlB

just currious about what you meant with this. Do you see any downside to Table.Profile in your scenario?

Awesome. Thanks. So that's like the pandas describe method. It solves the problem at hand neatly but going a bit further, this would be limited to the functions offered by Table.Profile

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Your solution is perfect for my scenario, probably the neatest for this case.

I was just saying that if we needed something other than what Table.Profile provides (below), for instance the sum of the absolute value of the elements in the column, the other solutions would be able to hadle that with a minor change.

minimum

maximum

average

standard deviation

count

null count

distinct count

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @AlB

But wouldn't List.Max just be enough, instead of the List.Accumulate?

Doh. Yes,of course!

Regards

Phil

Did I answer your question? Then please mark my post as the solution.

If I helped you, click on the Thumbs Up to give Kudos.

Blog :: YouTube Channel :: Connect on Linkedin

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @AlB

Another solution, but I like @Jimmy801 approach with Table.Profile, neat.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlTSUTIFYmMgNoGyzYDY0MRUKVYnWskIKgcSM4fSliB5I7A0ujaQEguQFiNDsDxIzghJjQWUb2ysFBsLAA==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, ColumnN = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"ColumnN", Int64.Type}}),

FindMax = List.Transform(Table.ToColumns(#"Changed Type"), each List.Accumulate( _ ,0,(state,current)=>if state>current then state else current)),

#"Converted to Table" = Table.FromList(FindMax, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

#"Transposed Table" = Table.Transpose(#"Converted to Table"),

Final = Table.RenameColumns(#"Transposed Table" , List.Zip({Table.ColumnNames(#"Transposed Table"), Table.ColumnNames(#"Changed Type")}))

in

FinalPhil

Did I answer your question? Then please mark my post as the solution.

If I helped you, click on the Thumbs Up to give Kudos.

Blog :: YouTube Channel :: Connect on Linkedin

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @AlB

you can use Table.Profile, then taking the column Column and Max, Transpose it. For the sum take Record.FieldValues and then sum it. Here an example

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlRQ0lEyBRHGIMIEzjUDEQoKSrE60UpGcHmwqDmcZYmkCJt+sEoLJEVgeSNUlRZwMZCiWAA=", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Column1 " = _t, #"Column2 " = _t, #"Column3 " = _t, #"Column4 " = _t, #"Column5 " = _t, #"Column6 " = _t, #" .... " = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1 ", Int64.Type}, {"Column2 ", Int64.Type}, {"Column3 ", Int64.Type}, {"Column4 ", Int64.Type}, {"Column5 ", Int64.Type}, {"Column6 ", Int64.Type}, {" .... ", type text}}),

Table = Table.Profile(#"Changed Type"),

Select = Table.Transpose(Table.SelectColumns(Table, {"Column", "Max"})),

#"Promoted Headers" = Table.PromoteHeaders(Select, [PromoteAllScalars=true]),

#"Changed Type1" = Table.TransformColumnTypes(#"Promoted Headers",{{" .... ", type text}, {"Column1 ", Int64.Type}, {"Column2 ", Int64.Type}, {"Column3 ", Int64.Type}, {"Column4 ", Int64.Type}, {"Column5 ", Int64.Type}, {"Column6 ", Int64.Type}}),

#"Added Custom" = Table.AddColumn(#"Changed Type1", "Sum Of Max", (add)=> List.Sum(List.Transform(Record.FieldValues(add), each if Value.Is(_,type number) then _ else Number.FromText(_))))

in

#"Added Custom"

Copy paste this code to the advanced editor in a new blank query to see how the solution works.

If this post helps or solves your problem, please mark it as solution (to help other users find useful content and to acknowledge the work of users that helped you)

Kudoes are nice too

Have fun

Jimmy

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Pretty sure you'll need to use List.Generate, and probalby ExpressionEvaluate.

FWIW, you can group data without a group and just pick the max value from each column, but this will not dynamically get the column names nor assign them. That is what List.Generate would do. Tedious.

Below just does the max of the first 3 columns in your example.

I'd have to think about it if there was a better way than List.Generate.....

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("zY65EcAwDMN2Ue0ipPPO4vP+a4TwFC6gCtBxjFK1cujhDFe4wxPe8AUdHEyhClfIwha68EUgClN4/aYwhSlMYQpTmMIU/ajZxi5TcrT27LIlx2vQNmNyes35Aw==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [Column1 = _t, Column2 = _t, Column3 = _t, Column4 = _t, Column5 = _t, Column6 = _t, Column7 = _t, Column8 = _t, Column9 = _t, Column10 = _t, Column11 = _t, Column12 = _t, Column13 = _t, Column14 = _t, Column15 = _t, Column16 = _t, Column17 = _t, Column18 = _t, Column19 = _t, Column20 = _t, Column21 = _t, Column22 = _t, Column23 = _t, Column24 = _t, Column25 = _t, Column26 = _t, Column27 = _t, Column28 = _t, Column29 = _t, Column30 = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"Column1", Int64.Type}, {"Column2", Int64.Type}, {"Column3", Int64.Type}, {"Column4", Int64.Type}, {"Column5", Int64.Type}, {"Column6", Int64.Type}, {"Column7", Int64.Type}, {"Column8", Int64.Type}, {"Column9", Int64.Type}, {"Column10", Int64.Type}, {"Column11", Int64.Type}, {"Column12", Int64.Type}, {"Column13", Int64.Type}, {"Column14", Int64.Type}, {"Column15", Int64.Type}, {"Column16", Int64.Type}, {"Column17", Int64.Type}, {"Column18", Int64.Type}, {"Column19", Int64.Type}}),

#"Grouped Rows" =

Table.Group(

#"Changed Type",

{},

{

{"Max1", each List.Max([Column1]), type nullable number},

{"Max2", each List.Max([Column2]), type nullable number},

{"Max3", each List.Max([Column3]), type nullable number}

}

)

in

#"Grouped Rows"

Did I answer your question? Mark my post as a solution!

Did my answers help arrive at a solution? Give it a kudos by clicking the Thumbs Up!

DAX is for Analysis. Power Query is for Data Modeling

Proud to be a Super User!

MCSA: BI ReportingHelpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.