
Power BI Embedded - can't upload PBIX to workspace

Please help! We're using Power BI Embedded. We plan to migrate to the new "embedded capacity" eventually, but for now we're still using the "old" Power BI Embedded with workspace collections.

I have a PBIX file in Import mode. I periodically open it locally, refresh it, then re-post it to my workspace collection. I use the Power BI command-line tool to upload the file, with a command like this:

```
powerbi import -f "C:\[path\filename]" -n [name] -o true
```

Recently, as the file has grown slightly larger over time, my import has been failing more and more frequently. I get the response "read ECONNRESET", which seems to indicate a timeout.

The file is now just over 100 MB, well below the 1 GB limit, yet it times out every single time. I've tried many times today and tonight (morning, day, and night), thinking it might work at some hour of the day, perhaps during "off hours" when the Azure servers are less loaded.

I don't think my Internet connection is the problem: I can upload a 100 MB file to another destination in about 1-2 minutes. But when I upload this PBIX to my workspace collection, it runs for about 10 minutes before returning "read ECONNRESET".

Assuming I can't make the file any smaller, is there anything I can do?

Has anyone else been able to use the Power BI CLI to import files of this size, with no problem? Could it be my Internet connection?

Would importing the file using the API, instead of the CLI, have a higher chance of succeeding (i.e., not timing out)? (But using the API seems much harder. Can it be done by submitting a single POST request, like using Postman? Or, would I have to execute a bunch of JavaScript [or other] code, somewhere, to make a series of API calls, to get the app token, etc.?)
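On the single-POST question: with workspace collections, the import call authenticates with the collection access key itself (the C# sample later in this thread does the same through the "AppKey" scheme), so in principle one multipart POST should be enough, with no separate token request. The sketch below assumes the v1.0 workspace-collection route `https://api.powerbi.com/v1.0/collections/{collection}/workspaces/{workspaceId}/imports` with `datasetDisplayName` and `nameConflict` query parameters, and a form field named `file`; those details and the `SinglePostImport` names are assumptions to verify against the API reference, not a confirmed recipe. The same request should be reproducible in Postman as a form-data body with one file field plus an `Authorization: AppKey <accessKey>` header.

```csharp
using System;
using System.IO;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Threading.Tasks;

// Hypothetical helper; the route below is an ASSUMPTION based on the old
// workspace-collection API and should be checked before use.
static class SinglePostImport
{
    // Builds the import URL, escaping each path segment and the dataset name.
    public static Uri BuildImportUri(string collection, string workspaceId, string datasetName, bool overwrite)
    {
        var query = "datasetDisplayName=" + Uri.EscapeDataString(datasetName);
        if (overwrite) query += "&nameConflict=Overwrite";
        return new Uri("https://api.powerbi.com/v1.0/collections/" + Uri.EscapeDataString(collection) +
                       "/workspaces/" + Uri.EscapeDataString(workspaceId) + "/imports?" + query);
    }

    // Sends the PBIX as multipart/form-data in a single POST, authenticating
    // with "Authorization: AppKey <accessKey>", and returns the JSON response.
    public static async Task<string> ImportAsync(string accessKey, string collection, string workspaceId,
                                                 string datasetName, string pbixPath)
    {
        using (var http = new HttpClient { Timeout = TimeSpan.FromMinutes(60) })
        using (var file = File.OpenRead(pbixPath))
        using (var form = new MultipartFormDataContent())
        {
            http.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("AppKey", accessKey);

            var filePart = new StreamContent(file);
            filePart.Headers.ContentType = new MediaTypeHeaderValue("application/octet-stream");
            // Field name "file" is an assumption; the body carries one file part.
            form.Add(filePart, "file", Path.GetFileName(pbixPath));

            var response = await http.PostAsync(BuildImportUri(collection, workspaceId, datasetName, true), form);
            response.EnsureSuccessStatusCode();
            return await response.Content.ReadAsStringAsync();
        }
    }
}
```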


There are several ways to do this, but if you're having trouble with file size or upload speed, this technique has always worked for me. My Power BI files average over 400 MB, which should not be a problem, but upload speeds are often throttled enough that importing them into a workspace is problematic. When a direct upload does work, it typically takes over 25 minutes; this technique takes about one.

This assumes you have access to an Azure file share (in portal.azure.com) and have already uploaded your PBIX file to it.

Launch Cloud Shell by clicking the Cloud Shell (>_) button in the Azure portal's top toolbar.

Note: If this is your first time launching Cloud Shell, you'll have to provision a persistent file share for it. Read the Cloud Shell documentation to understand the pricing and process; it's really cheap.

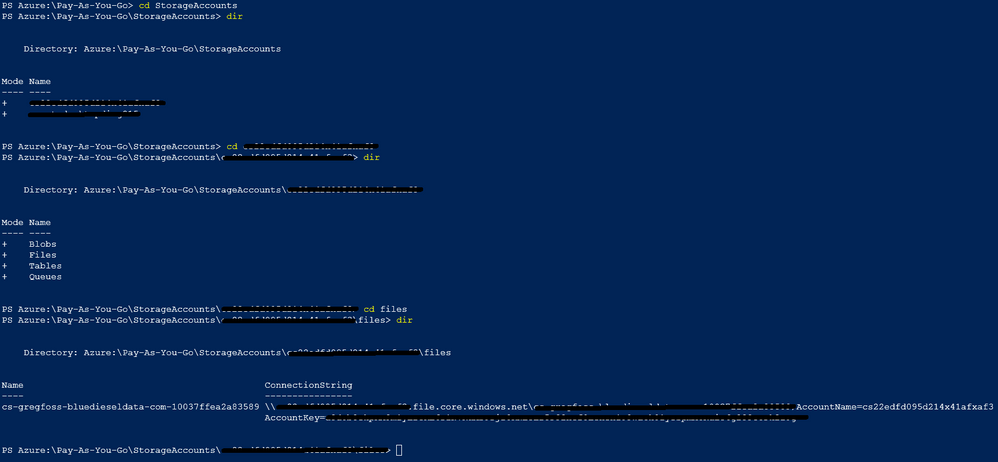

Navigate to the location where you uploaded your PBIX; simple dir and cd commands are all you need.

The storage account's connection string (shown under Access keys in the portal) supplies the account name and key used to mount that file share as a drive in Cloud Shell.

Mount the file share as a drive. The syntax follows this layout:

```
net use <DesiredDriveLetter>: \\<MyStorageAccountName>.file.core.windows.net\<MyFileShareName> <AccountKey> /user:Azure\<MyStorageAccountName>
```
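The net use layout above takes its account name and key from the storage connection string. As a hypothetical helper (the `NetUseBuilder` names are illustrative, not part of any Azure tool), this C# sketch splits a standard `AccountName=...;AccountKey=...;EndpointSuffix=...` connection string and fills in the layout. It assumes the string contains `AccountName` and `AccountKey`, and falls back to `core.windows.net` when `EndpointSuffix` is absent.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper that fills in the "net use" layout from a connection string.
static class NetUseBuilder
{
    // Splits "Key1=Value1;Key2=Value2" into a dictionary. Only the first '='
    // separates key from value, because base64 account keys end in '='.
    public static Dictionary<string, string> ParseConnectionString(string cs)
    {
        var parts = new Dictionary<string, string>(StringComparer.OrdinalIgnoreCase);
        foreach (var segment in cs.Split(new[] { ';' }, StringSplitOptions.RemoveEmptyEntries))
        {
            int eq = segment.IndexOf('=');
            if (eq > 0) parts[segment.Substring(0, eq).Trim()] = segment.Substring(eq + 1).Trim();
        }
        return parts;
    }

    // driveLetter and shareName are supplied by you; the account name, key,
    // and endpoint suffix come from the connection string.
    public static string BuildNetUseCommand(string connectionString, char driveLetter, string shareName)
    {
        var p = ParseConnectionString(connectionString);
        string account = p["AccountName"];
        string key = p["AccountKey"];
        string suffix = p.TryGetValue("EndpointSuffix", out var s) ? s : "core.windows.net";
        return $@"net use {driveLetter}: \\{account}.file.{suffix}\{shareName} {key} /user:Azure\{account}";
    }
}
```

For a share named `pbix` in account `mystore`, the helper produces the same command shape as the layout, with the key copied verbatim (including any trailing `=`).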

Access the file and load it into a workspace. The easiest way I've found to do this is with PowerBI-Cli.

PowerBI-Cli is now deprecated; however, it's a very useful tool, and I'll continue to use it until something better comes along.

Install the powerbi commands globally with npm:

```
npm install powerbi-cli -g
```

Using the following syntax, you can load your PBIX file into a workspace. Even the largest files take about a minute.

```
powerbi import -c <collection> -w <workspaceId> -k <accessKey> -f <file> -n [name] -o [overwrite]
```

Hope this helps!

Greg


Reply to @kevhav:

I can't reproduce the issue you described; I have no problem importing PBIX files larger than 500 MB. I believe the CLI calls the same REST API under the hood, so if you'd rather not use it, you could try my C# sample to import a PBIX file.

```csharp
// Install-Package Microsoft.PowerBI.Api
using System;
using System.IO;
using System.Threading.Tasks;
using Microsoft.PowerBI.Api.V1;
using Microsoft.PowerBI.Api.V1.Models;
using Microsoft.Rest;

namespace importPbix_PBIE
{
    class Program
    {
        // Power BI workspace collection access key
        static string accessKey = "KJixxxxxxxxxViXY8iE/zNVHROCmcaOIFr6a2vmQ==";
        static string workspaceCollectionName = "yourworkspname";
        static string workspaceId = "79c71xxxxx2192fe0b";
        static string filePath = @"C:\test\test.pbix";

        static void Main(string[] args)
        {
            // Block until the async import (including polling) has finished
            var import = ImportPbix(workspaceCollectionName, workspaceId, "mytestdataset", filePath)
                .GetAwaiter().GetResult();
            Console.WriteLine("Final import state: {0}", import.ImportState);
            Console.ReadKey();
        }

        static async Task<Import> ImportPbix(string workspaceCollectionName, string workspaceId, string datasetName, string filePath)
        {
            using (var fileStream = File.OpenRead(filePath.Trim('"')))
            using (var client = CreateClient())
            {
                // Set request timeout to support uploading large PBIX files
                client.HttpClient.Timeout = TimeSpan.FromMinutes(60);
                client.HttpClient.DefaultRequestHeaders.Add("ActivityId", Guid.NewGuid().ToString());

                // Import the PBIX file from the file stream
                var import = await client.Imports.PostImportWithFileAsync(workspaceCollectionName, workspaceId, fileStream, datasetName);

                // Poll the import until it has either succeeded or failed
                while (import.ImportState != "Succeeded" && import.ImportState != "Failed")
                {
                    import = await client.Imports.GetImportByIdAsync(workspaceCollectionName, workspaceId, import.Id);
                    Console.WriteLine("Checking import state... {0}", import.ImportState);

                    // Wait asynchronously instead of blocking the thread with Thread.Sleep
                    await Task.Delay(1000);
                }

                return import;
            }
        }

        static PowerBIClient CreateClient()
        {
            // Create token credentials with the "AppKey" scheme
            var credentials = new TokenCredentials(accessKey, "AppKey");

            // Instantiate the Power BI client, passing in the required credentials
            var client = new PowerBIClient(credentials);

            // Override the API endpoint base URL (the default is https://api.powerbi.com)
            client.BaseUri = new Uri("https://api.powerbi.com");
            return client;
        }
    }
}
```
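If the resets are intermittent rather than constant, retrying the upload may help. The following is not from this thread: it is a hypothetical `Retry.WithBackoffAsync` helper that reruns an async operation with exponential backoff and rethrows the last failure. It will not fix a reset that happens on every attempt.

```csharp
using System;
using System.Threading.Tasks;

// Hypothetical retry helper (not part of the Power BI SDK).
static class Retry
{
    // Runs an async operation up to maxAttempts times, doubling the delay after
    // each failure. On the final attempt the exception propagates to the caller.
    public static async Task<T> WithBackoffAsync<T>(Func<Task<T>> operation, int maxAttempts, TimeSpan initialDelay)
    {
        if (maxAttempts < 1) throw new ArgumentOutOfRangeException(nameof(maxAttempts));
        var delay = initialDelay;
        for (int attempt = 1; ; attempt++)
        {
            try
            {
                return await operation();
            }
            catch (Exception ex) when (attempt < maxAttempts)
            {
                Console.WriteLine("Attempt {0} failed: {1}. Retrying in {2}.", attempt, ex.Message, delay);
                await Task.Delay(delay);
                delay = TimeSpan.FromTicks(delay.Ticks * 2); // exponential backoff
            }
        }
    }
}
```

For example, the sample's `Main` could call `Retry.WithBackoffAsync(() => ImportPbix(workspaceCollectionName, workspaceId, "mytestdataset", filePath), 3, TimeSpan.FromSeconds(30))`. Because `ImportPbix` opens the file inside the lambda, each attempt gets a fresh stream rather than a partially consumed one.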
