- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- modeling data

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

modeling data

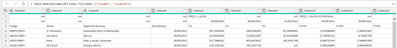

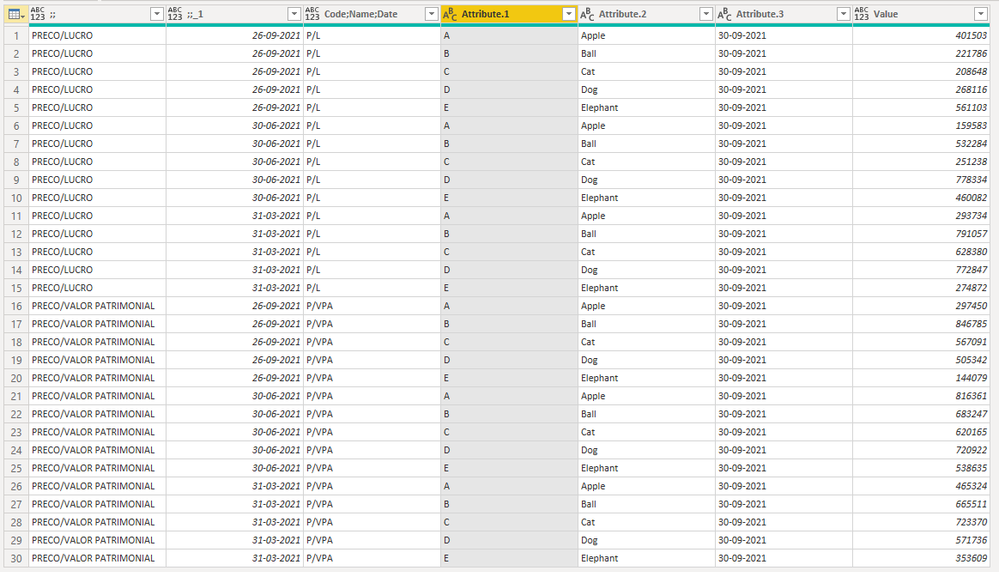

I'm having a great deal of difficulty in doing the data modeling, could someone give me a light on how to make this table

with the perfect columns transpose the dates contained in it?

just wanted to be able to separate each code by dates so I could create viewsI've never taken data structured this way, so I don't know how to proceed.A preview of this data is attached.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You could transform this into a proper table. I will post an example below...

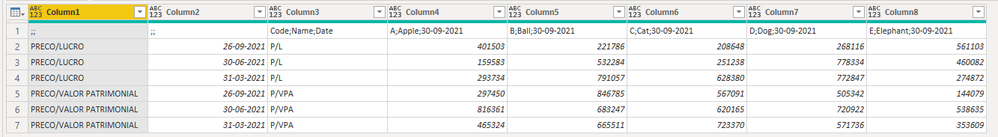

Let's say, you are starting with the following imported data... (remove any step that promotes 1st row as headers)

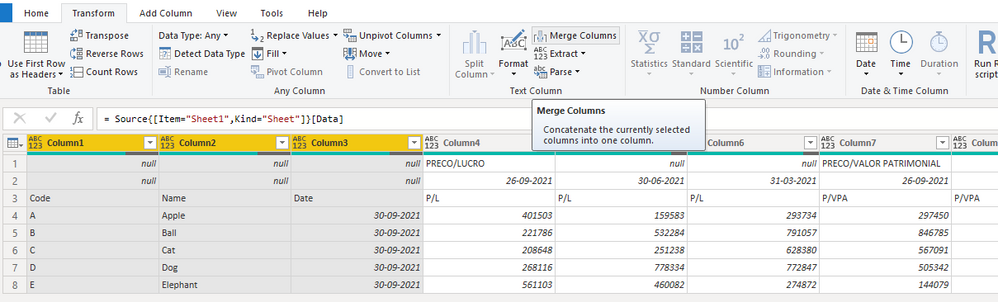

Step 1: Identify the pivot columns. In this case, those are the first 3 columns. Select those 3 pivot columns and merge them together, use a delimiter like a semicolon (;) or any other character that won't be there in the data values of these columns.

It will give you the following...

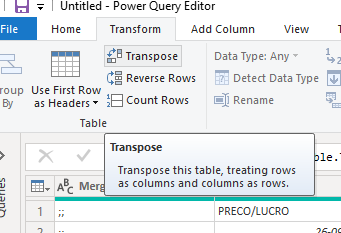

Step 2: Transpose this table using the following option.

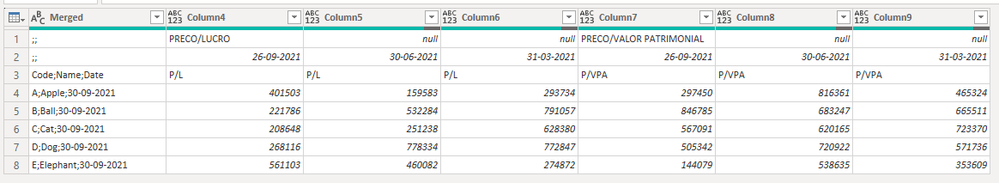

This will transpose the table and give you the following...

Step 3: Use "Fill Down" on those pivot columns that have null values in them.

This will give you the following output.

Step 4: Promote the first row as headers

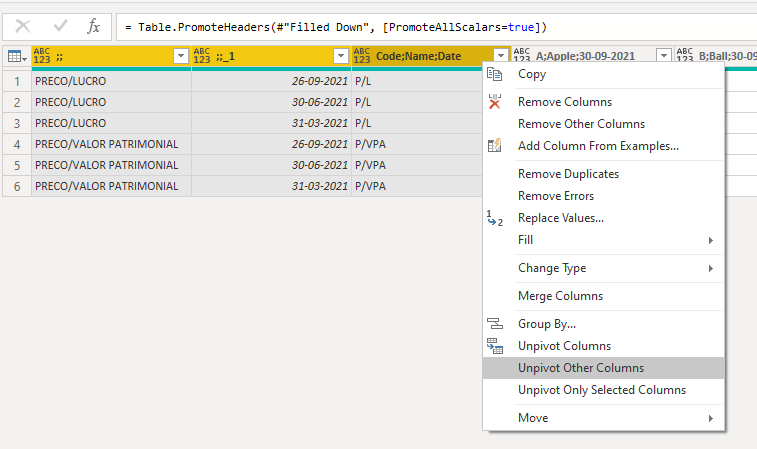

Step 5: Select the first 3 Pivot columns and unpivot the other columns. (Use Shift+Click or Ctrl+Click to select multiple columns)

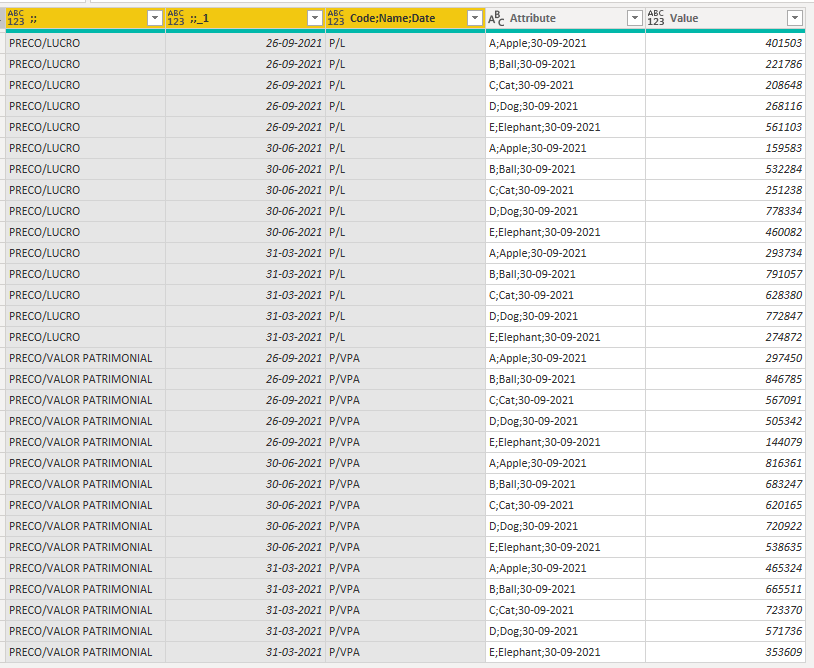

This will give you the following output.

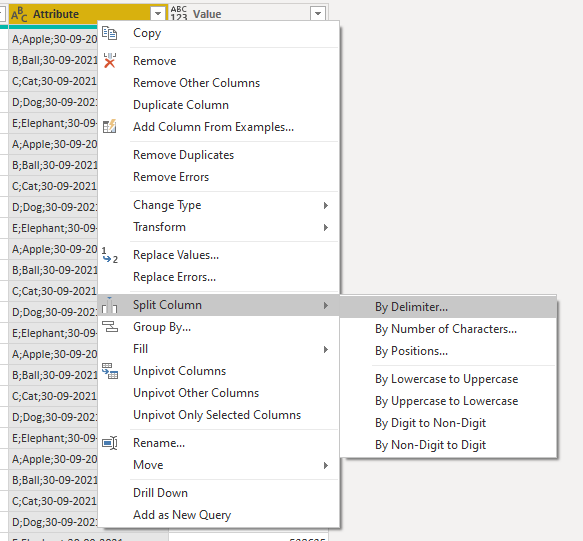

Step 6: Split back the merged column using the same delimiter that was used earlier.

This will give you the following output.

You could further rename the column names, change the data types, and so on. Use these steps as a general idea. The actual steps that you need could be different depending on your input file. But it will be a combination of similar steps.

You don't have to know any advanced stuff to do all these. These are pretty simple steps like merging, transposing, splitting, unpivoting, renaming column headers, choosing the correct data types etc... If you observe your input file and think about how to sequence these simple transformations to get the final output, you will be able to do it.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You could transform this into a proper table. I will post an example below...

Let's say, you are starting with the following imported data... (remove any step that promotes 1st row as headers)

Step 1: Identify the pivot columns. In this case, those are the first 3 columns. Select those 3 pivot columns and merge them together, use a delimiter like a semicolon (;) or any other character that won't be there in the data values of these columns.

It will give you the following...

Step 2: Transpose this table using the following option.

This will transpose the table and give you the following...

Step 3: Use "Fill Down" on those pivot columns that have null values in them.

This will give you the following output.

Step 4: Promote the first row as headers

Step 5: Select the first 3 Pivot columns and unpivot the other columns. (Use Shift+Click or Ctrl+Click to select multiple columns)

This will give you the following output.

Step 6: Split back the merged column using the same delimiter that was used earlier.

This will give you the following output.

You could further rename the column names, change the data types, and so on. Use these steps as a general idea. The actual steps that you need could be different depending on your input file. But it will be a combination of similar steps.

You don't have to know any advanced stuff to do all these. These are pretty simple steps like merging, transposing, splitting, unpivoting, renaming column headers, choosing the correct data types etc... If you observe your input file and think about how to sequence these simple transformations to get the final output, you will be able to do it.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I know this post is quite old. But I am currently working through a similar process with a project.

What I would like to know is,

Would it better to keep your data at the data source formatted similar to the orginal post, and then transform the data in Power BI.

or

Model the data in the format you suggested at the data source.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

1) If you can format the data at the source itself, that is better rather than doing all these transformations in PowerQuery. Forget the technical reasons, it will be easy for someone to else go through the code and debug or edit it later if there is a requirement. This will also help in identifying any error in the data immediately by determining if the error is the source or in the transformation.

2) Other than that, the major difference is that if you add any columns or perform some calculations in Power Query, it will be performed before importing the data and the transformed data will be stored in persistent storage. But if you add a calculated column in DAX, it is not stored and everytime it is calculated during the data refresh and these columns will occupy the RAM & CPU. Generally, I prefer to add any such columns either in the source or using Power Query and then import the data and avoid using Calculated Columns in DAX. Ofcourse, there are some exceptions.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thank you for this, it is extremely helpful!

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 107 | |

| 98 | |

| 78 | |

| 65 | |

| 53 |

| User | Count |

|---|---|

| 144 | |

| 104 | |

| 100 | |

| 86 | |

| 64 |