- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: how to optimize filter by a measure using disc...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

how to optimize filter by a measure using disconnected table

Hi

my model has two tables that show coordinates from openstreetmap, I am using a measure to calculate the distance bewtween two coordinates see blog here https://radacad.com/dynamic-distances-in-power-bi

the report work like this, you select a point, and a distance, and the measure will filter all the points < distance, now the report works perfectly till the data is around 1 Millions, after that it becomve very slow, once I loaded the whole data around 15 millions, the measure take nearly 11 seconds ?

I attached an example, I loaded the data for turkey alone

https://app.box.com/s/86scgz5nm0ad7s8uitd84vc7k4lgtrgk

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @mim ,

this is my best (at the moment) for the distance_filter measure, it's called a little different:

distance_filter tom =

var _d = max(distance[Value])

return

if([Kilometers] <= _d , 1 , BLANK())As you can see, I dropped the caching for [Kilometers] as it's calculated on a row basis, the column 'amenity'[index].

My 1st attempt was useless.

Nevertheless, instead of returning the kilometers, the formula returns 1 if the condition is met, otherwise explicitly BLANK().

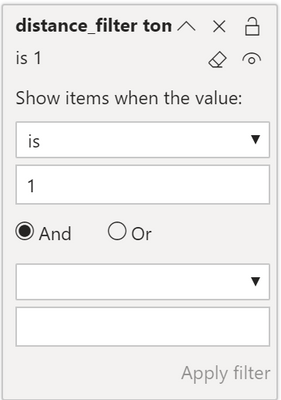

I use this setting for the filter on both visuals, the matrix, and the icon map:

These are the performance counters, using the performance analyzer inside Power BI Desktop:

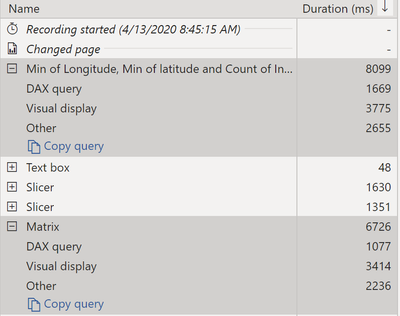

The adjusted distance_filter tom measure in combination with the adjusted visual filter creates these performance measurements:

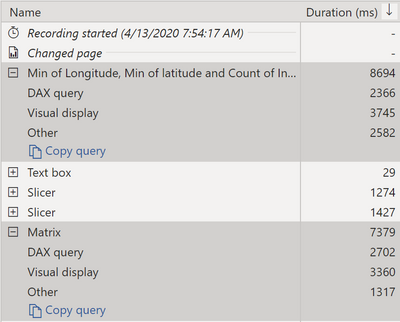

In contrast, the original distance_filter and not is blank visual level filter:

Using the adjusted measure and the adjusted visual level filter setting provide slight improvements.

I think it's not possible to optimize the [kilometers] measure, it is applied to each row, the index column. As the algorithm is more complex (the nature of this algorithm) the formula engine has to be used.

Wondering, how this will end.

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @mim ,

here is the DAX for the distant filter @Anonymous already mentioned:

distance_filter =

var _km = [Kilometers]

var _d = max(distance[Value])

return

if(

_km <= _d

, _km

)

Regards,

Tom

Stay safe, stay healthy, and happy holidays

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

using variable make it worst from 10 seconds to 40 seconds !!!!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @mim ,

can you please try again, I changed the DAX a little.

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@TomMartens nope, you can try it by yourself in the pbix file I shared it, and see the difference ?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey @mim ,

this is my best (at the moment) for the distance_filter measure, it's called a little different:

distance_filter tom =

var _d = max(distance[Value])

return

if([Kilometers] <= _d , 1 , BLANK())As you can see, I dropped the caching for [Kilometers] as it's calculated on a row basis, the column 'amenity'[index].

My 1st attempt was useless.

Nevertheless, instead of returning the kilometers, the formula returns 1 if the condition is met, otherwise explicitly BLANK().

I use this setting for the filter on both visuals, the matrix, and the icon map:

These are the performance counters, using the performance analyzer inside Power BI Desktop:

The adjusted distance_filter tom measure in combination with the adjusted visual filter creates these performance measurements:

In contrast, the original distance_filter and not is blank visual level filter:

Using the adjusted measure and the adjusted visual level filter setting provide slight improvements.

I think it's not possible to optimize the [kilometers] measure, it is applied to each row, the index column. As the algorithm is more complex (the nature of this algorithm) the formula engine has to be used.

Wondering, how this will end.

Regards,

Tom

Did I answer your question? Mark my post as a solution, this will help others!

Proud to be a Super User!

I accept Kudos 😉

Hamburg, Germany

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

wow, perfect, it is back to 3 seconds , I can't belive a simple change made that difference

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

https://en.wikipedia.org/wiki/Geographical_distance

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

First, your distance_filter should use a VARiable to capture the distance and then use it in the subsequent IF.

However, to speed this calculation up to an insane speed... you'll need to create a matrix (only one part of it, say, the upper part) that will store all the distances between points. In a word, you have to precalculate the distances. You can do it easily in Power Query.

Of course, such a matrix must be unpivoted into a 3-column table:

Ref Point, To Point, Distance

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I have 15 Miilions record, If i precalculate then it become 15 Millions * 15 Millions , which is a lot 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It does not matter it's a lot. What matters is the number of distinct values in a column. The fewer, the better. I've got tables with more than 200 millions of rows and calculations are instant.

When you calculate the table as I indicated, make sure that the distances are, for instance, in full meters, not fractions of meters. So, the distance should be an int. Even better, make it in full km's.

By the way, at the very minimum you only need (15 * 15 - 15) / 2 = 105 mln distances. That's not a lot. You can do the calculation in Power Query or you can use Python or R script for this.

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous , check your math, if only I have 1 million records, the combination will be 1 million X 1 million = 1 trillion !!!!

and I can't divide/2 as I need one column to filter, so pre calculation is not feasible

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You can figure out the rest. If you cannot use a very precise number, use quick approximations. That's the way to conquer this problem.

Best

D

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Have you tried running Dax Studio and seeing if it can help you pinpoint the slow part of your code ?

Did this post answer your question? Mark it as a solution so others can find it!

Help when you know. Ask when you don't!

Join the conversation at We Talk BI find out more about me at Slow BI

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I did, the fixing is the problem, it seems everything is run by the FE 😞 which is bad i guess

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 114 | |

| 99 | |

| 83 | |

| 70 | |

| 61 |

| User | Count |

|---|---|

| 149 | |

| 114 | |

| 107 | |

| 89 | |

| 67 |