- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: calculate difference between time stamps for u...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

calculate difference between time stamps for unique ID

Grateful for help with the following issue.

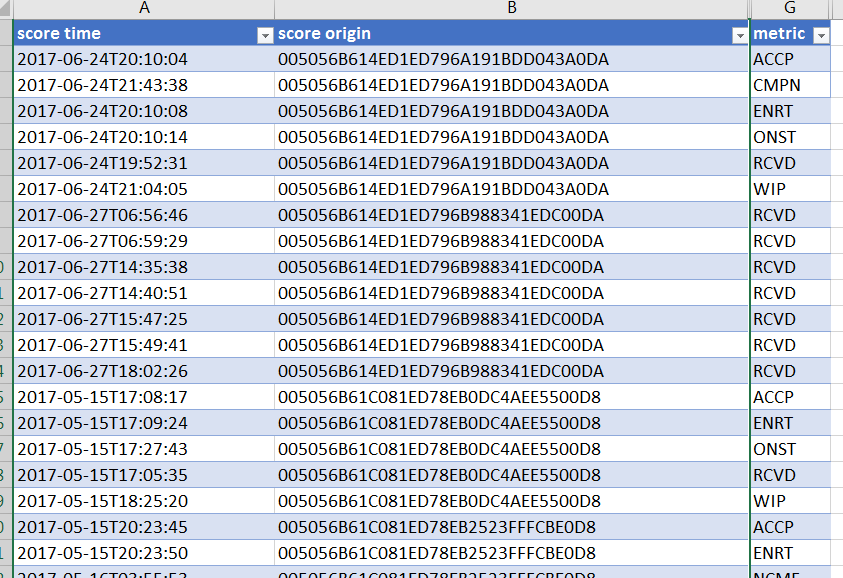

I have the following table with unique job IDs (score origin) and want to compare the difference in time between when that job ID is in a different state (metric column). More specifically the difference in time between when it is 'ACCP' (accepted) and when it is 'ONST' (on site).

I have created new tables to try and make it easier to compare the difference in time stamps, but to no avail. Grateful if anyone could point me in the right direction, preferably if I was able to create a new column with time difference betewen when the job is 'accepted' (ACCP) and when someone is actually 'on site' (ONST).

Many thanks

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @DR,

Please try this workaround:

Create a new table.

Table_1 = SELECTCOLUMNS ( FILTER ( 'time difference', 'time difference'[metric] = "ONST" ), "score time", 'time difference'[score time], "score origin", 'time difference'[score origin] )

In original table, add a calculated column which displays the time difference between "ACCP" and "ONST".

time diff = DATEDIFF ( 'time difference'[score time], IF ( 'time difference'[metric] = "ACCP", LOOKUPVALUE ( Table_1[score time], Table_1[score origin], 'time difference'[score origin] ), BLANK () ), SECOND )

Regards,

Yuliana Gu

If this post helps, then please consider Accept it as the solution to help the other members find it more quickly.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

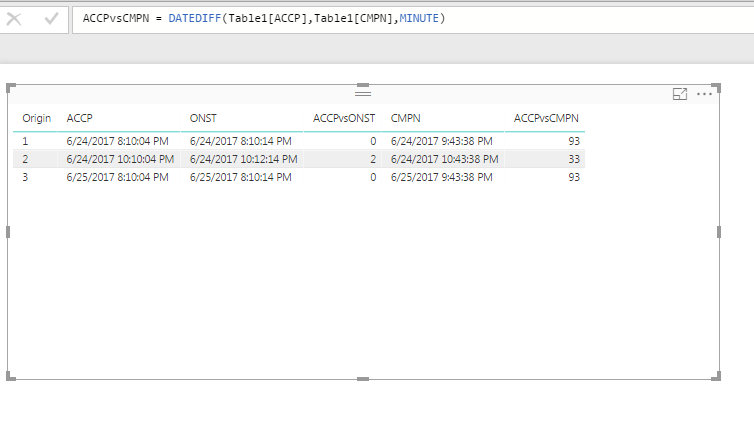

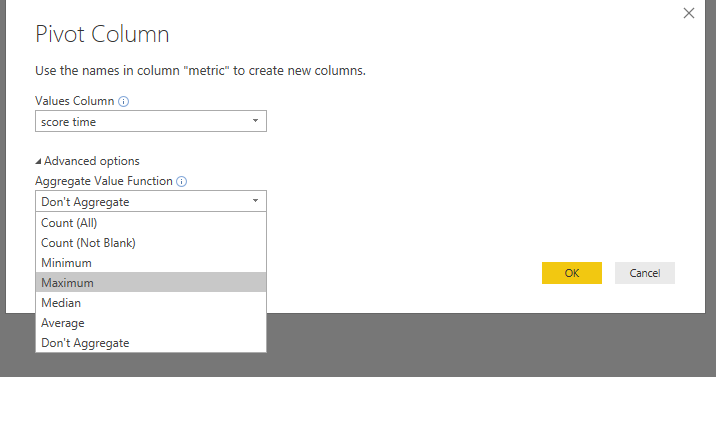

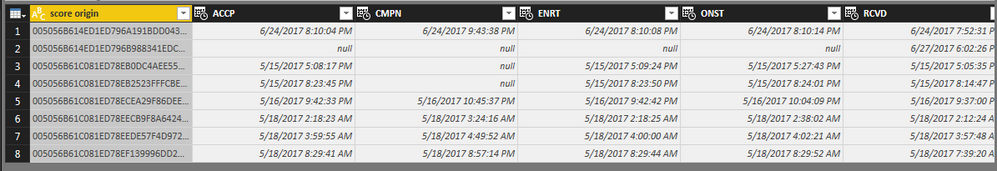

I usually tell people NOT to pivot their data, but the quickest / easiest way I can think to resolve this is to put your data into a Pivot table. First, I had to mass change your "T" in your time column to a blank so PowerBI can easily identify it as a Date Time column.

With the **Metric** column selected in Query Editors, choose 'Pivot Column' under 'Transform' Tab. Make sure under Advanced NOT to Summarize.

Now you can easily use DateDiff to calucatle time difference as needed... FOrrest

Please give Kudos or Mark as a Solution!

https://www.linkedin.com/in/forrest-hill-04480730/

Proud to give back to the community!

Thank You!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Very much obliged for this.

However, I notice that I get a number of columns with errors or blanks once I have pivoted. Is this because there are some duplications where an individual has register 'ACCP' twice for the same ID?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

DOH! I didnt' noticed the duplicates in your data. Can you copy/past some sample data (instead of the picture) so we can quickly dump it into Power BI and play with the manipulation... ?

FOrrest

Please give Kudos or Mark as a Solution!

https://www.linkedin.com/in/forrest-hill-04480730/

Proud to give back to the community!

Thank You!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

| score time | score origin | metric |

| 2017-06-24T20:10:04 | 005056B614ED1ED796A191BDD043A0DA | ACCP |

| 2017-06-24T21:43:38 | 005056B614ED1ED796A191BDD043A0DA | CMPN |

| 2017-06-24T20:10:08 | 005056B614ED1ED796A191BDD043A0DA | ENRT |

| 2017-06-24T20:10:14 | 005056B614ED1ED796A191BDD043A0DA | ONST |

| 2017-06-24T19:52:31 | 005056B614ED1ED796A191BDD043A0DA | RCVD |

| 2017-06-24T21:04:05 | 005056B614ED1ED796A191BDD043A0DA | WIP |

| 2017-06-27T06:56:46 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T06:59:29 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T14:35:38 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T14:40:51 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T15:47:25 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T15:49:41 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-06-27T18:02:26 | 005056B614ED1ED796B988341EDC00DA | RCVD |

| 2017-05-15T17:08:17 | 005056B61C081ED78EB0DC4AEE5500D8 | ACCP |

| 2017-05-15T17:09:24 | 005056B61C081ED78EB0DC4AEE5500D8 | ENRT |

| 2017-05-15T17:27:43 | 005056B61C081ED78EB0DC4AEE5500D8 | ONST |

| 2017-05-15T17:05:35 | 005056B61C081ED78EB0DC4AEE5500D8 | RCVD |

| 2017-05-15T18:25:20 | 005056B61C081ED78EB0DC4AEE5500D8 | WIP |

| 2017-05-15T20:23:45 | 005056B61C081ED78EB2523FFFCBE0D8 | ACCP |

| 2017-05-15T20:23:50 | 005056B61C081ED78EB2523FFFCBE0D8 | ENRT |

| 2017-05-16T03:55:53 | 005056B61C081ED78EB2523FFFCBE0D8 | NCMF |

| 2017-05-15T20:24:01 | 005056B61C081ED78EB2523FFFCBE0D8 | ONST |

| 2017-05-15T20:14:47 | 005056B61C081ED78EB2523FFFCBE0D8 | RCVD |

| 2017-05-15T20:24:27 | 005056B61C081ED78EB2523FFFCBE0D8 | WIP |

| 2017-05-16T21:42:33 | 005056B61C081ED78ECEA29F86DEE0D8 | ACCP |

| 2017-05-16T22:45:37 | 005056B61C081ED78ECEA29F86DEE0D8 | CMPN |

| 2017-05-16T21:42:42 | 005056B61C081ED78ECEA29F86DEE0D8 | ENRT |

| 2017-05-16T22:04:09 | 005056B61C081ED78ECEA29F86DEE0D8 | ONST |

| 2017-05-16T21:37:00 | 005056B61C081ED78ECEA29F86DEE0D8 | RCVD |

| 2017-05-16T22:41:28 | 005056B61C081ED78ECEA29F86DEE0D8 | WIP |

| 2017-05-18T02:18:23 | 005056B61C081ED78EECB9F8A64240D8 | ACCP |

| 2017-05-18T03:24:16 | 005056B61C081ED78EECB9F8A64240D8 | CMPN |

| 2017-05-18T02:18:25 | 005056B61C081ED78EECB9F8A64240D8 | ENRT |

| 2017-05-18T02:38:02 | 005056B61C081ED78EECB9F8A64240D8 | ONST |

| 2017-05-18T02:12:24 | 005056B61C081ED78EECB9F8A64240D8 | RCVD |

| 2017-05-18T02:47:31 | 005056B61C081ED78EECB9F8A64240D8 | WIP |

| 2017-05-18T03:59:55 | 005056B61C081ED78EEDE57F4D9720D8 | ACCP |

| 2017-05-18T04:49:52 | 005056B61C081ED78EEDE57F4D9720D8 | CMPN |

| 2017-05-18T04:00:00 | 005056B61C081ED78EEDE57F4D9720D8 | ENRT |

| 2017-05-18T04:02:21 | 005056B61C081ED78EEDE57F4D9720D8 | ONST |

| 2017-05-18T03:48:50 | 005056B61C081ED78EEDE57F4D9720D8 | RCVD |

| 2017-05-18T03:57:48 | 005056B61C081ED78EEDE57F4D9720D8 | RCVD |

| 2017-05-18T04:45:50 | 005056B61C081ED78EEDE57F4D9720D8 | WIP |

| 2017-05-18T08:29:41 | 005056B61C081ED78EF139996DD220D8 | ACCP |

| 2017-05-18T20:57:14 | 005056B61C081ED78EF139996DD220D8 | CMPN |

| 2017-05-18T08:29:44 | 005056B61C081ED78EF139996DD220D8 | ENRT |

| 2017-05-18T08:29:52 | 005056B61C081ED78EF139996DD220D8 | ONST |

| 2017-05-18T07:39:20 | 005056B61C081ED78EF139996DD220D8 | RCVD |

| 2017-05-18T20:46:41 | 005056B61C081ED78EF139996DD220D8 | WIP |

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @DR,

Have you worked it out? If yes, please kindly mark the helpful reply as an answer so that it can benefit more users. If you still have any question, please feel free to ask.

Regards,

Yuliana Gu

If this post helps, then please consider Accept it as the solution to help the other members find it more quickly.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

You were right about the Errors being duplicates. You'll have to descide how you want to handle that data... Since your values are times, Min is Earliest and Max is Latest. The 'null's are blanks in your original data, so ignore them, or go back to your data origin to resolve?

I think the biggest now is 'What if I want First RCVD but Last CMPN??? I don't have an answer for you on that one, sorry... Hopefully someone else jumps on this and might be able to build something simular with custom columns in DAX or something...?

Please give Kudos or Mark as a Solution!

https://www.linkedin.com/in/forrest-hill-04480730/

Proud to give back to the community!

Thank You!

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 110 | |

| 96 | |

| 77 | |

| 63 | |

| 55 |

| User | Count |

|---|---|

| 143 | |

| 109 | |

| 89 | |

| 84 | |

| 66 |