- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Web scraping advice

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Web scraping advice

Hello SuperUsers

Looking for your valuable advice on how to best scrape website below for tables.

Which method is most efficient, via VBA or with Power BI .. or is there another better way?

Any advice will be very much appreciated - Thank you!

The URL is =

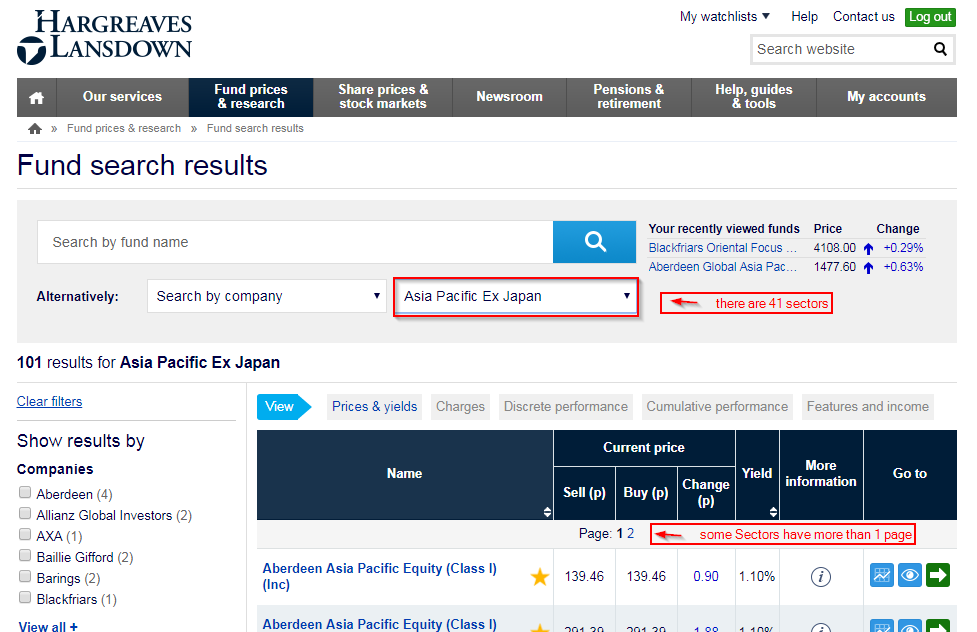

www.hl.co.uk/funds/fund-discounts,-prices--and--factsheets/search-results?sectorid=121*&lo=0&page=1**&tab=prices

*There are 41 Sectors so this value changes

**Some Sectors have more than 1 page

See pic below ...

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This isn’t such an easy task in PowerBI. Also, because the website doesn’t like parametrized queries too much, so one even has to rearrange the order of the arguments in the URL (Step: “RearragnedURL”).

Also, it takes quite some time to retrieve the data, but I believe this has more to do with the website and not with the query itself.

You have to dive into the HTML to retrieve all Sector IDs. Then you call them on their 1st page and retrieve the number of pages (step: “ListOfAllPages “). After that you call each page. Apply this function (“WebCall”) on each sector (in step: "CallEachFunction "):

let

// Function for each Sector-Information

WebCall = (SectorID as text) =>

let

Source = Web.Page(Web.Contents("http://www.hl.co.uk/funds/fund-discounts,-prices--and--factsheets/search-results?sectorid="&SectorID&"&lo=0&page=1&tab=prices")),

Custom1 = Source{[ClassName="table-styled table-sortable"]}[Data],

ListOfAllPages = if List.Count(List.Skip(Text.Split(Custom1{0}[Name], "#(lf)")))=0 then {"1"} else List.Skip(Text.Split(Custom1{0}[Name], "#(lf)")),

#"Converted to Table" = Table.FromList(ListOfAllPages, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

RearragnedURL = Table.AddColumn(#"Converted to Table", "Custom", each Web.Page(Web.Contents("http://www.hl.co.uk/funds/fund-discounts,-prices--and--factsheets/search-results?tab=prices§orid="&SectorID&"&lo=0&page="&[Column1]&"")){[ClassName="table-styled table-sortable"]}[Data]),

#"Expanded Custom" = Table.ExpandTableColumn(RearragnedURL, "Custom", {"Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}, {"Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}),

#"Filtered Rows" = Table.SelectRows(#"Expanded Custom", each not Text.StartsWith([Name], "Page:")),

#"Changed Type" = try Table.TransformColumnTypes(#"Filtered Rows",{{"Name", type text}, {"Name2", type text}, {"Current price Sell (p)", type number}, {"Current price Buy (p)", type number}, {"Current price Change (p)", type number}, {"Yield", Percentage.Type}, {"More information", type text}, {"Go to", type any}}) otherwise null

in

try #"Changed Type" otherwise null,

// Start of main query: Dig into Html to retrieve a table with all Sectors

Source = Lines.FromBinary(Web.Contents("http://www.hl.co.uk/funds")),

#"Converted to Table" = Table.FromList(Source, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

#"Added Index" = Table.AddIndexColumn(#"Converted to Table", "Index", 0, 1),

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each if Text.Contains([Column1], "search-sector") then [Index] else null),

#"Filled Down" = Table.FillDown(#"Added Custom",{"Custom"}),

#"Filtered Rows" = Table.SelectRows(#"Filled Down", each [Custom] <> null),

#"Added Custom1" = Table.AddColumn(#"Filtered Rows", "Custom.1", each if Text.Start([Column1], 13)="<option value" then Text.BetweenDelimiters([Column1], """","""") else null),

#"Filtered Rows1" = Table.SelectRows(#"Added Custom1", each ([Custom.1] <> null)),

#"Added Custom4" = Table.AddColumn(#"Filtered Rows1", "Sector", each Text.BetweenDelimiters([Column1], ">", "<")),

#"Removed Other Columns" = Table.SelectColumns(#"Added Custom4",{"Custom.1", "Sector"}),

// Call function and cleanup

CallEachFunction = Table.AddColumn(#"Removed Other Columns", "WebCall", each WebCall([Custom.1])),

FilterOutEmpties = Table.SelectRows(CallEachFunction, each ([WebCall] <> null)),

ExpandColumns = Table.ExpandTableColumn(FilterOutEmpties, "WebCall", {"Column1", "Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}, {"Column1", "Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}),

#"Changed Type" = Table.TransformColumnTypes(ExpandColumns,{{"Current price Sell (p)", type number}, {"Current price Buy (p)", type number}, {"Current price Change (p)", type number}, {"Yield", type number}})

in

#"Changed Type"Another option would be to use List.Generate to increment the page number until the WebPage doesn’t return anything any more.

Imke Feldmann

www.TheBIccountant.com -- How to integrate M-code into your solution -- Check out more PBI- learning resources here

Imke Feldmann (The BIccountant)

If you liked my solution, please give it a thumbs up. And if I did answer your question, please mark this post as a solution. Thanks!

How to integrate M-code into your solution -- How to get your questions answered quickly -- How to provide sample data -- Check out more PBI- learning resources here -- Performance Tipps for M-queries

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

I recommend you to use power automate desktop or cloud. Power automate desktop is a very intuitive tool.

Regards.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This isn’t such an easy task in PowerBI. Also, because the website doesn’t like parametrized queries too much, so one even has to rearrange the order of the arguments in the URL (Step: “RearragnedURL”).

Also, it takes quite some time to retrieve the data, but I believe this has more to do with the website and not with the query itself.

You have to dive into the HTML to retrieve all Sector IDs. Then you call them on their 1st page and retrieve the number of pages (step: “ListOfAllPages “). After that you call each page. Apply this function (“WebCall”) on each sector (in step: "CallEachFunction "):

let

// Function for each Sector-Information

WebCall = (SectorID as text) =>

let

Source = Web.Page(Web.Contents("http://www.hl.co.uk/funds/fund-discounts,-prices--and--factsheets/search-results?sectorid="&SectorID&"&lo=0&page=1&tab=prices")),

Custom1 = Source{[ClassName="table-styled table-sortable"]}[Data],

ListOfAllPages = if List.Count(List.Skip(Text.Split(Custom1{0}[Name], "#(lf)")))=0 then {"1"} else List.Skip(Text.Split(Custom1{0}[Name], "#(lf)")),

#"Converted to Table" = Table.FromList(ListOfAllPages, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

RearragnedURL = Table.AddColumn(#"Converted to Table", "Custom", each Web.Page(Web.Contents("http://www.hl.co.uk/funds/fund-discounts,-prices--and--factsheets/search-results?tab=prices§orid="&SectorID&"&lo=0&page="&[Column1]&"")){[ClassName="table-styled table-sortable"]}[Data]),

#"Expanded Custom" = Table.ExpandTableColumn(RearragnedURL, "Custom", {"Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}, {"Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}),

#"Filtered Rows" = Table.SelectRows(#"Expanded Custom", each not Text.StartsWith([Name], "Page:")),

#"Changed Type" = try Table.TransformColumnTypes(#"Filtered Rows",{{"Name", type text}, {"Name2", type text}, {"Current price Sell (p)", type number}, {"Current price Buy (p)", type number}, {"Current price Change (p)", type number}, {"Yield", Percentage.Type}, {"More information", type text}, {"Go to", type any}}) otherwise null

in

try #"Changed Type" otherwise null,

// Start of main query: Dig into Html to retrieve a table with all Sectors

Source = Lines.FromBinary(Web.Contents("http://www.hl.co.uk/funds")),

#"Converted to Table" = Table.FromList(Source, Splitter.SplitByNothing(), null, null, ExtraValues.Error),

#"Added Index" = Table.AddIndexColumn(#"Converted to Table", "Index", 0, 1),

#"Added Custom" = Table.AddColumn(#"Added Index", "Custom", each if Text.Contains([Column1], "search-sector") then [Index] else null),

#"Filled Down" = Table.FillDown(#"Added Custom",{"Custom"}),

#"Filtered Rows" = Table.SelectRows(#"Filled Down", each [Custom] <> null),

#"Added Custom1" = Table.AddColumn(#"Filtered Rows", "Custom.1", each if Text.Start([Column1], 13)="<option value" then Text.BetweenDelimiters([Column1], """","""") else null),

#"Filtered Rows1" = Table.SelectRows(#"Added Custom1", each ([Custom.1] <> null)),

#"Added Custom4" = Table.AddColumn(#"Filtered Rows1", "Sector", each Text.BetweenDelimiters([Column1], ">", "<")),

#"Removed Other Columns" = Table.SelectColumns(#"Added Custom4",{"Custom.1", "Sector"}),

// Call function and cleanup

CallEachFunction = Table.AddColumn(#"Removed Other Columns", "WebCall", each WebCall([Custom.1])),

FilterOutEmpties = Table.SelectRows(CallEachFunction, each ([WebCall] <> null)),

ExpandColumns = Table.ExpandTableColumn(FilterOutEmpties, "WebCall", {"Column1", "Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}, {"Column1", "Name", "Name2", "Current price Sell (p)", "Current price Buy (p)", "Current price Change (p)", "Yield", "More information", "Go to"}),

#"Changed Type" = Table.TransformColumnTypes(ExpandColumns,{{"Current price Sell (p)", type number}, {"Current price Buy (p)", type number}, {"Current price Change (p)", type number}, {"Yield", type number}})

in

#"Changed Type"Another option would be to use List.Generate to increment the page number until the WebPage doesn’t return anything any more.

Imke Feldmann

www.TheBIccountant.com -- How to integrate M-code into your solution -- Check out more PBI- learning resources here

Imke Feldmann (The BIccountant)

If you liked my solution, please give it a thumbs up. And if I did answer your question, please mark this post as a solution. Thanks!

How to integrate M-code into your solution -- How to get your questions answered quickly -- How to provide sample data -- Check out more PBI- learning resources here -- Performance Tipps for M-queries

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi ImkeF, I am trying to understand if my web scraping use case is possible with Power BI or Power Query. I have looked at products like Import.io, and Parsehub, to automate the process, but was wondering if Power BI can perform the use case. In the use case,I have a list of parts in an Excel Spreadsheet. Today, I have a very repetition iterative proccess where I (1) Enter a single part number in the search section of the website, (in order to find information on the part number). (2) After I input the part number and press enter, I manually copy the results of the search from the website. (The result is contained in fields in the website). (3) I paste the results in Excel. And then I repeat the whole process again for the next part number in my Excel spreadsheet list. Can this process be automated in Power BI Get Data from Web or Power Query?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Power BI is not an automation-tool per se, but if there is a URL that identifies the data that you need, you can write queries that get the current content everytime you refresh the queries. Have you ever tried Power BI to import data from web?

Imke Feldmann (The BIccountant)

If you liked my solution, please give it a thumbs up. And if I did answer your question, please mark this post as a solution. Thanks!

How to integrate M-code into your solution -- How to get your questions answered quickly -- How to provide sample data -- Check out more PBI- learning resources here -- Performance Tipps for M-queries

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Wow! @ImkeF this is amazing!

Thank you so much for going to all the trouble of helping me out.

Very much appreciated!

Thank you

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @KM007,

This is possible to make using the M language in the query editor.

Check this post. This is made to power query in excel but it also works in power bi.

Regards,

MFelix

Regards

Miguel Félix

Did I answer your question? Mark my post as a solution!

Proud to be a Super User!

Check out my blog: Power BI em PortuguêsHelpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 115 | |

| 99 | |

| 88 | |

| 70 | |

| 62 |

| User | Count |

|---|---|

| 151 | |

| 120 | |

| 103 | |

| 87 | |

| 68 |