
Vertipaq Engine: VALUE vs HASH

Hi guys,

this time I need some help from you.

I'm working on a dataset whose size has grown to about 600 MB and which consumes three times more RAM when loaded. That's not what I'd expect, so I downloaded the great tool Vertipaq Analyzer (from @AlbertoFerrari, @marcorusso) and tried to find out which table/column consumes the most RAM. So far, boring stuff, but then I saw that Int64 columns don't use value encoding but dictionary encoding, which means the size used by these columns explodes.

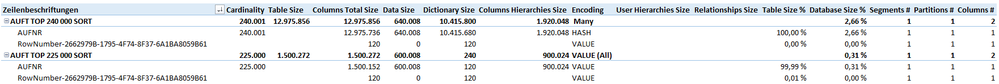

After some experiments I found that an Int64 column with 225,000 rows still gets value encoding, but at roughly 240,000+ rows VertiPaq switches to dictionary encoding and the consumed memory is 8x bigger than expected; see the screenshot below.

Does anybody know the algorithms or rules VertiPaq uses? Can we influence them somehow in Power BI?

EDIT: I've already read the VertiPaq part of Definitive Guide to DAX, The: Business intelligence with Microsoft Excel, SQL Server Analysis Servic... and The VertiPaq Engine in DAX.
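To make the size difference concrete, here is a rough back-of-the-envelope sketch in Python of how the two encodings are generally described: value encoding stores each value as an offset from the column minimum in as few bits as the range needs, while hash (dictionary) encoding stores a dictionary of distinct values plus a per-row index into it. The function names, the 64-bit dictionary entry size, and the cost model are my own simplifying assumptions. The sketch ignores run-length compression, segment layout, and hash-table overhead, and it is not the engine's actual heuristic, so it will not reproduce the numbers Vertipaq Analyzer reports.

```python
def bits_needed(n_states: int) -> int:
    """Bits required to distinguish n_states different values (at least 1)."""
    return max(1, (n_states - 1).bit_length())


def value_encoding_bits(values) -> int:
    """Toy model of value encoding: store (value - min) per row."""
    span = max(values) - min(values) + 1
    return len(values) * bits_needed(span)


def hash_encoding_bits(values, raw_bits: int = 64) -> int:
    """Toy model of hash encoding: dictionary of distinct values plus row indexes.

    raw_bits is an assumed size for one dictionary entry (Int64 here).
    """
    distinct = len(set(values))
    dictionary = distinct * raw_bits
    indexes = len(values) * bits_needed(distinct)
    return dictionary + indexes


# A dense, unique Int64 key column: the dictionary dominates the cost.
ids = list(range(1, 240_001))
print(value_encoding_bits(ids))  # 4320000
print(hash_encoding_bits(ids))   # 19680000

# Few distinct values over a wide range: the dictionary is tiny, so hash wins.
flags = [5, 1_000_000] * 1000
print(value_encoding_bits(flags))  # 40000
print(hash_encoding_bits(flags))   # 2128
```

Under this simplified model, a high-cardinality integer column pays for every distinct value twice with hash encoding (once in the dictionary, once as an index), which is at least consistent with the kind of blow-up described above.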


I'm facing the same problem with the HASH encoding leading to a much larger column size than an equivalent column with VALUE encoding (between 3 and 4 times larger).

I'm testing if we can force the encoding to be VALUE using encoding hints, as suggested in this article:

I will update my answer if I get a solution.


Hi @Anonymous,

you can't force it, but you can give the VertiPaq engine a hint that it should use Value instead of Hash encoding. The hint can be set with Tabular Editor in a PBIT file. You can also write a short C# script that applies it to every column that supports Value encoding. I will write a blog post about that; the topic is waiting in a queue 😉

For example, you can use the following snippet in Tabular Editor:

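```csharp
// Set the Value encoding hint on every Int64 column in the model.
// This is only a hint; the engine may still choose Hash encoding.
foreach (var column in Model.Tables.SelectMany(t => t.Columns))
{
    if (column.DataType == DataType.Int64)
        column.EncodingHint = EncodingHintType.Value;
}
```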


Cool, I look forward to that article, and I will take a look at hinting using Tabular Editor.
