
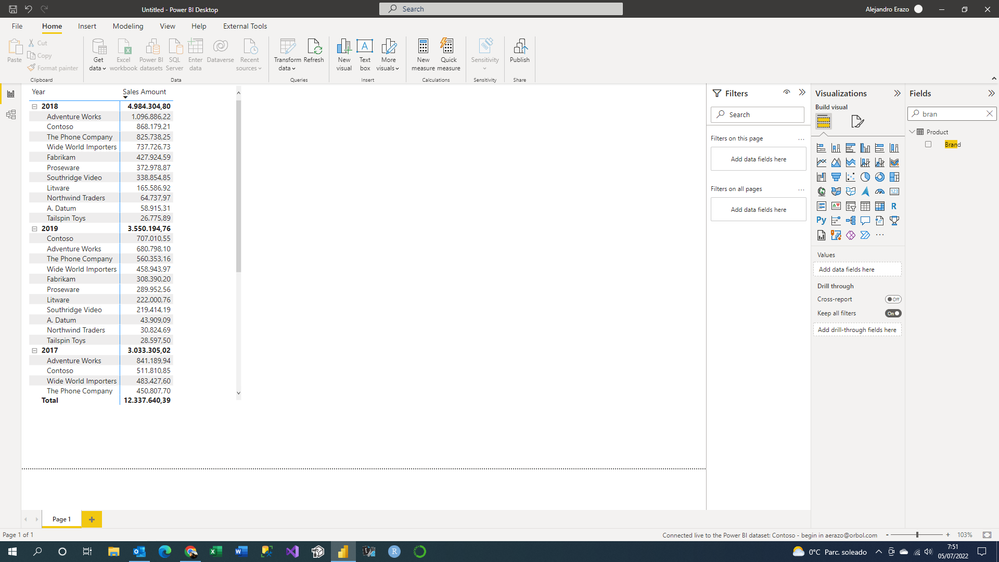

Share your thoughts on DirectQuery for Power BI datasets and Azure Analysis Services (preview)

Hit Reply and let us know what you think of DirectQuery for Power BI datasets and Azure Analysis Services. To learn more about this feature, please visit this blog post or our documentation.

Here are some areas that we'd like to hear about in particular:

- Performance

- Query editor experience: the remote model query doesn't show up in the query editor, only in the data source settings dialog. What are your thoughts?

- Navigator experience

- Thoughts around governance and permissions for models that leverage this feature

- Nesting models, i.e. building a composite model on top of a composite model

- Automatic page refresh for live connect in composite models

Thanks and we look forward to hearing your feedback!

- The Power BI Modeling Team



Workaround found after ongoing troubleshooting with the user.

The issue was related to table key columns being hidden by object-level security (OLS) set in the Azure SQL Server Analysis Services database.

The relationships remained after converting from Live Connection to DirectQuery when the user was assigned the Confidential role in Azure.

So, if a key column is secured by object-level security, all relationships that use that column are deleted.

Can Microsoft confirm whether this is the expected behaviour?
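To make the reported behaviour concrete, here is a toy Python model of it. It assumes, as the post describes, that a relationship is dropped whenever either of its key columns is hidden from the current user by OLS. The `Relationship` type and `surviving_relationships` function are hypothetical illustrations, not how Power BI implements this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Relationship:
    """A hypothetical model relationship between two key columns."""
    from_table: str
    from_column: str
    to_table: str
    to_column: str


def surviving_relationships(relationships, ols_hidden):
    """Return the relationships a user keeps after OLS is applied.

    Assumption (from the behaviour reported above): a relationship is dropped
    if either endpoint column is in ols_hidden, a set of (table, column)
    pairs the user cannot see.
    """
    return [
        r
        for r in relationships
        if (r.from_table, r.from_column) not in ols_hidden
        and (r.to_table, r.to_column) not in ols_hidden
    ]


rels = [
    Relationship("Sales", "CustomerKey", "Customer", "CustomerKey"),
    Relationship("Sales", "DateKey", "Date", "DateKey"),
]
# Hide Customer[CustomerKey] from this user via OLS.
hidden = {("Customer", "CustomerKey")}
kept = surviving_relationships(rels, hidden)
```

Under this model, `kept` contains only the Sales-to-Date relationship, because the Sales-to-Customer relationship uses a hidden key column.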


Hi,

As mentioned in the limitations of the feature (4th bullet point), only users who have Build permission on the datasets can see the report/visuals.

Build permission is not something we necessarily want to grant to every user who wants to view the composite report. Is there any workaround for this limitation?

Thanks,

Gabriel


Thanks for the feedback. This is something we're planning to change: only Read permission will be required when we make this feature generally available.
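The permission rule discussed in this exchange can be sketched as a small check. This is a toy Python model under stated assumptions: during the preview a viewer needs Build on every source dataset of the composite model, and at general availability only Read (per the reply above). `Permission` and `can_view_composite_report` are hypothetical names, not a Power BI API.

```python
from enum import Enum


class Permission(Enum):
    READ = "Read"
    BUILD = "Build"


def can_view_composite_report(user_perms, source_datasets, preview=True):
    """Check whether a viewer meets the dataset permission rule described above.

    user_perms maps a source dataset name to the set of Permission values the
    user holds on it. Assumption: Build is required on every source dataset
    during the preview; only Read is required after GA.
    """
    required = Permission.BUILD if preview else Permission.READ
    return all(required in user_perms.get(ds, set()) for ds in source_datasets)


perms = {
    "Sales": {Permission.READ},
    "Finance": {Permission.READ, Permission.BUILD},
}
```

With these sample permissions, a user viewing a report built on both "Sales" and "Finance" fails the preview check (no Build on "Sales") but would pass once only Read is required.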


Hi! Are there any updates on the release that only requires Read permission, for combining two datasets in Pro environments?


We are still waiting for infrastructure changes to happen in datacenters across the globe before we can make this available to Pro.


Yes! It's odd to grant edit privileges to someone who would only be reading the dataset outside the original report, whether in a Power BI thin report or in Excel.


I'm getting this error in the Service after days of developing in Desktop with no issues or warnings during local refreshes. It's very discouraging, and I wouldn't recommend developing in Desktop with this feature. I now have to import the datasets I'm using instead. I've learned not to use preview features going forward if I want to manage expectations well. Preview? No thanks.


I understand that this is annoying. We don't actively stop you from doing this in Desktop because we don't know whether you are going to publish to the Service. So instead of "breaking" Desktop for those who never publish to the Service, we allow it, and it then breaks when you publish to the Service. If we warned about everything that won't work in the Service, it would be a jarring experience and get in the way a lot, even when it might not be relevant to you. Please see https://docs.microsoft.com/en-us/power-bi/connect-data/desktop-directquery-datasets-azure-analysis-s... for a list of limitations in this preview; your exact scenario is listed there as well.


It would be great if there were an officially sanctioned and clear approach (or more than one) to managing large datasets (30 GB+) using DirectQuery (or not). This matters especially because DirectQuery currently seems to be the best way to deal with huge datasets in Power BI. I haven't been able to load huge tables into dataflows, datamarts, or blob storage without timeout errors and the like. I am fully in the Microsoft ecosystem, so I connect to Azure SQL Database using DirectQuery. The tables in the database are indexed (columnstore) and I'm using automatic aggregations. I'm pretty sure that's all (or most of what) I can do, but I'm not entirely sure, because it feels like a bit of witchcraft. I'd love a video or post along the lines of "dealing with huge datasets from SQL Server et al. in Power BI".


This thread isn't related to large models (import or DirectQuery). It is about connecting to Analysis Services in DirectQuery mode rather than Live Connection mode.

There appear to be a number of posts in the Desktop section of the site that may be helpful.

As a consultant who works with large models myself (primarily against Snowflake), my advice is to import the data into the model in the first place. With large data, this requires implementing incremental refresh. (Note that a P1 capacity has a model size limit of 25 GB; see Capacity and SKUs in Power BI embedded analytics - Power BI | Microsoft Docs.)

If you can't scale up the capacity or reduce the data size through optimisation and rationalisation, then use DirectQuery with aggregations: either automatic aggregations (which may help, depending on how repetitive the queries are) or manual aggregations.

There is plenty of material on these topics if you search with Bing. GuyInACube has some good material as a starting point:
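Incremental refresh in Power BI filters the source on the `RangeStart` and `RangeEnd` parameters, and Microsoft's documentation advises making only one boundary inclusive so that a row sitting exactly on a boundary isn't loaded into two partitions. The Python sketch below illustrates that half-open `[start, end)` rule with monthly partitions. `month_boundaries`, `partition_rows`, and the `order_date` field are hypothetical, and this is an illustration of the idea, not the Power Query (M) filter itself.

```python
from datetime import date


def month_boundaries(start: date, months: int) -> list:
    """Return months + 1 first-of-month dates, beginning at start's month."""
    bounds = []
    year, month = start.year, start.month
    for _ in range(months + 1):
        bounds.append(date(year, month, 1))
        month += 1
        if month > 12:
            year, month = year + 1, 1
    return bounds


def partition_rows(rows, bounds):
    """Assign each row to the half-open window [lo, hi) containing its order_date.

    Using >= on the start and < on the end means a row dated exactly on a
    boundary lands in one partition only, never two.
    """
    parts = {}
    for lo, hi in zip(bounds, bounds[1:]):
        parts[lo] = [r for r in rows if lo <= r["order_date"] < hi]
    return parts


rows = [
    {"order_date": date(2024, 1, 31)},
    {"order_date": date(2024, 2, 1)},  # exactly on a partition boundary
    {"order_date": date(2024, 3, 15)},
]
parts = partition_rows(rows, month_boundaries(date(2024, 1, 1), 3))
```

If both ends were inclusive (`<=` on `hi`), the 1 February row would appear in both the January and February partitions, which is the duplication the documentation warns about.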
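The idea behind aggregations is that a query is answered from a small, pre-aggregated table when possible and only falls back to DirectQuery against the source when it can't be. The Python sketch below models that matching rule in simplified form. `AggTable`, `route_query`, and the table and column names are hypothetical, and Power BI's actual matching (including automatic aggregations, which are built from query history) involves more than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AggTable:
    """A hypothetical aggregation table over a DirectQuery fact table."""
    name: str
    grain: frozenset     # columns the aggregation is grouped by
    measures: frozenset  # pre-computed aggregates, e.g. "SUM(SalesAmount)"


def route_query(group_by, measures, aggs):
    """Return the aggregation table that can answer a query, else 'DirectQuery'.

    A query is covered when every grouping column is in the table's grain
    (so results can be rolled up further) and every requested aggregate is
    pre-computed. aggs is assumed to be ordered by precedence.
    """
    for agg in aggs:
        if set(group_by) <= agg.grain and set(measures) <= agg.measures:
            return agg.name
    return "DirectQuery"


aggs = [
    AggTable(
        "Sales_Agg_Month",
        frozenset({"Year", "Month", "ProductCategory"}),
        frozenset({"SUM(SalesAmount)", "COUNT(Sales)"}),
    )
]
```

A query for sales by year is served from `Sales_Agg_Month`, while a query grouped by customer name (not in the grain) falls back to DirectQuery. This is why aggregations help most when queries are repetitive and stay at a coarse grain.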

- https://www.youtube.com/watch?v=s0j6d3UAw9U&list=PLv2BtOtLblH3lwpQ5NBq6kD6fsVziO9sE

- https://www.youtube.com/watch?v=EhGF372t0sU&list=PLv2BtOtLblH0cQ7rWV2SVLGoplKdy0LtD


Hi,

Thanks for adding this feature. It's really useful; however, we're having some issues at my company.

As a tenant admin, I can connect to a Power BI dataset (switched to DirectQuery) plus an Excel file without any problem.

But my colleague (not a tenant admin, but a workspace admin) gets the error "Your credentials could not be authenticated: Credentials are missing." although everything is set up correctly in the source (we tried several credential types). He has also enabled the feature in Power BI Desktop.

I also checked all the tenant admin settings (mentioned here).

Do you have any idea what could cause the problem?

Thanks for your help


This sounds like a permission problem, or credentials not being configured for the source. Have you checked the data source settings in the Service?


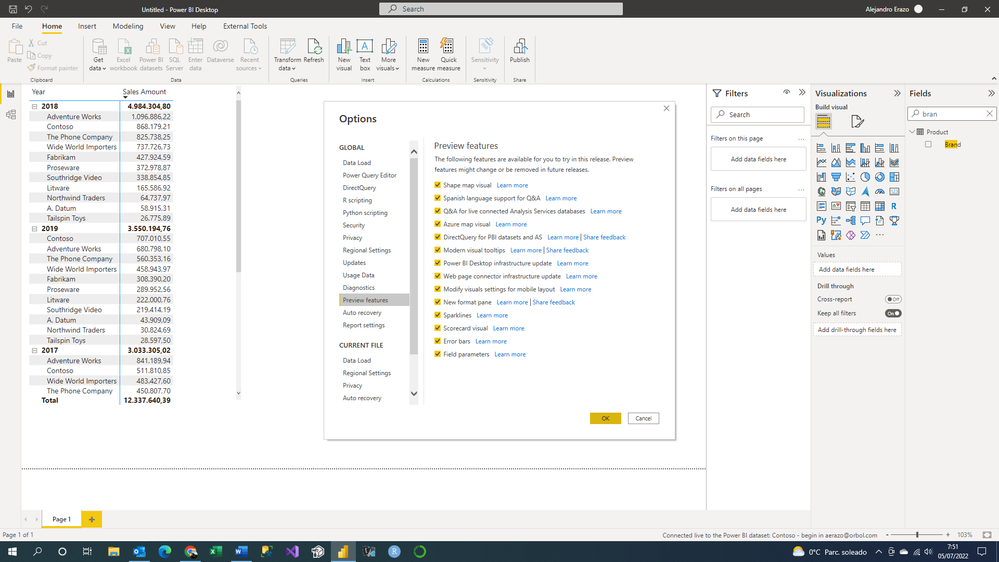

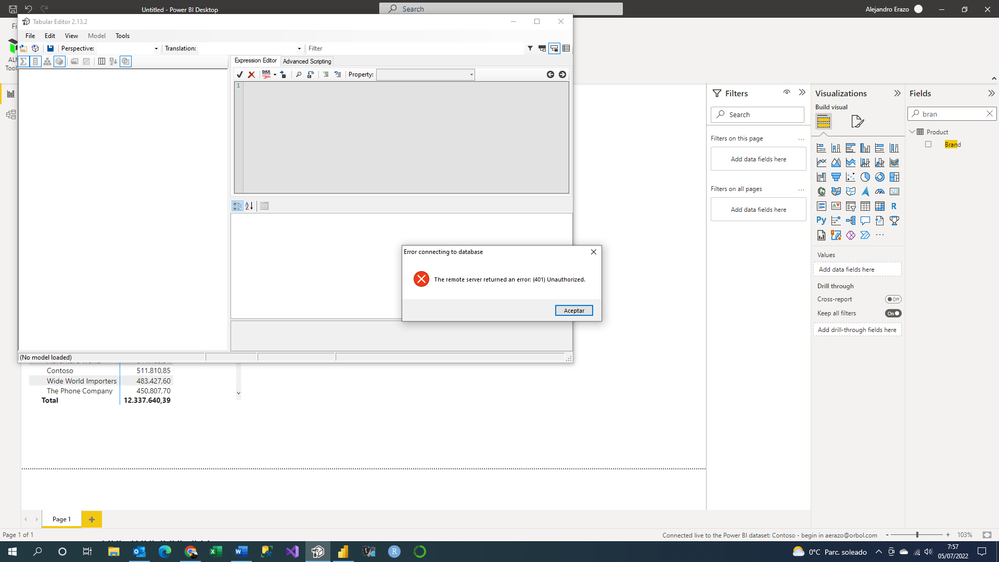

I'm having trouble developing the composite models.

I connect to a Power BI service dataset and create a model in DirectQuery.

I then enabled the DirectQuery for Power BI datasets and AS preview option.

Despite this, it doesn't give me the option to connect to any other file.

Also, I went to External Tools and tried to open the Tabular Editor and it gives me an error: The remote server returned an error: (401) Unauthorized

Am I doing something wrong?

Do I need to enable anything else for composite models to work?


Not sure. Did you restart Desktop after enabling the preview?


Yes, I did. But nothing happens even though I am connected live to the published dataset.


Is this dataset published on Pro/Premium/PPU? Is it located in "My workspace"?


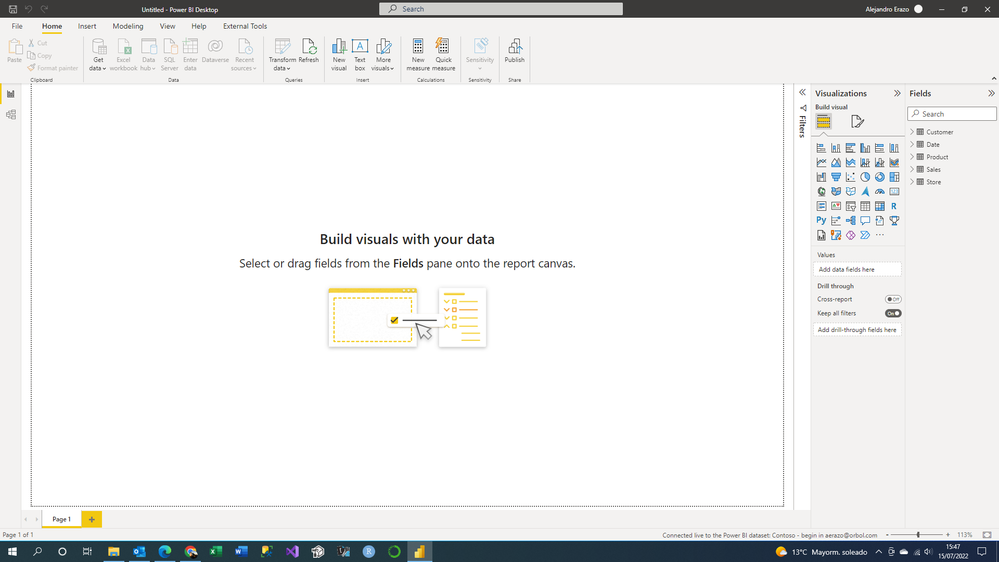

The report is published with a free account; I don't have Pro or Premium.

Also, it is published in My workspace.


From the documentation: "Using DirectQuery on datasets from “My workspace” isn't currently supported." https://docs.microsoft.com/en-us/power-bi/connect-data/desktop-directquery-datasets-azure-analysis-s...


With another workspace, it just worked. Thank you very much!


OK, great!


Hello

This feature now supports SQL Server Analysis Services 2022. It's great!

Composite models on SQL Server Analysis Services | Microsoft Power BI Blog | Microsoft Power BI

Could SQL Server Analysis Services 2019 also be supported in the future?

Thank you.
