- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: Measure Optimization Help

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Measure Optimization Help

I think my DAX code would benefit from optimization. My report takes up to a minute to load visuals after making a slicer selection, and it makes the report unusable. I'd appreciate any suggestions to improve my work!

I have a central fact table, Jobs, and I'm trying to add columns with data from other related fact tables (using the ID field). Item1, Item2, etc. data are spread across a handful of tables and I'm trying to get counts for Fail and Pass for all Items all in one place:

Results = ADDCOLUMNS(Jobs,

"Item1_PASS", COUNTAX ( FILTER ('DataTable1', 'DataTable1'[Item1] = "Pass" && 'DataTable1'[ID]=Jobs[ID]), 'DataTable1'[Item1]),

"Item1_FAIL", COUNTAX ( FILTER ('DataTable1', 'DataTable1'[Item1] = "Fail" && 'DataTable1'[ID]=Jobs[ID]), 'DataTable1'[Item1]),My understanding was that I need to create measures (in a separate measure table) that calculate a sum for each of the columns that I'm adding to Jobs:

Item1_FAIL measure:

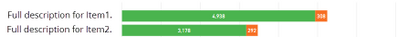

Item1_FAIL = SUM('Results'[Item1_FAIL])The end goal is a visual with counts of Pass and Fail for each Item that will work with slicers that use other columns from Jobs, like this:

Fail Count =

SWITCH (

FIRSTNONBLANK( 'Item_key'[Item Name], 1),

"Full description for Item1.", 'My Measures'[Item1_FAIL],

Here is a screenshot of my relationship diagram for reference:

Again, this report has become very frustrating to use because of the load times - I have over 200 Items to display results for. I imagine I could set up my code in a better way, but I'm not sure what that would be. Thanks in advance for suggestions, and please let me know where I can clarify anything.

@Anonymous

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

looking at the current code I have 2 observations:

1) you use nested iterator functions (FILTER nested in COUNTAX) - this is heavy lifting performance wise

e.g. here (taken from your model)

Rough_Pass = COUNTAX(FILTER(RoughInspections_merged, RoughInspections_merged[RoughInspectionStatus]="Pass"), RoughInspections_merged[RoughInspectionStatus])

this should give exactly same numbers, with much better performance

Rough_Pass= CALCULATE ( COUNTA ( RoughInspections_merged[RoughInspectionStatus] ), RoughInspections_merged[RoughInspectionStatus] = "Pass" )

2) you calculate a new table in Memory to just do sum based on filter - you don't need to calculate table for that

I'd try the following:

Item1_FAIL = CALCULATE ( COUNTA ( 'DataTable1'[Item1] ), 'DataTable1'[Item1] = "Fail" )

also you can have a look at this video, it's quite helpful on where to start optimization

https://www.sqlbi.com/tv/optimizing-dax-queries/

Thank you for the kudos 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aha! Thanks @Stachu, I appreciate the specific suggestion. I've just started changing the code over to use CALCULATE(COUNTA() instead of COUNTAX(FILTER() and it appears the time to load after a slicer change has already improved. I'll follow up once I have all the code changed.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Another question - I'm calculating population statistics to compare to the sliced stats, like this:

Population Rough Total Failures = COUNTX(FILTER(ALL(RoughInspections_merged), RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier]="Fail"), RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier])

Any suggestion for how to rework/optimize this using the pattern that @Stachu suggested? CALCULATE(COUNTA(...

I've tried this but it's clearly not right:

CALCULATE(COUNTA(ALL(RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier])), ALL(RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier]="Fail"))

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

if you add ALL inside COUNTA it counts all

CALCULATE (

COUNTA ( RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier] ),

FILTER (

ALL ( RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier] ),

RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier] = "Fail"

)

)

Thank you for the kudos 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks @Stachu. I believe I entered that measure as you suggested and it appears it's still being affected by slicer selections. I was hoping for something that is unaffected by slicers. Any thoughts?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I figured that last issue out, the DAX needed to be this way to get a count unaffected by slicers:

CALCULATE(COUNTA(RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier]), FILTER(ALL(RoughInspections_merged), RoughInspections_merged[RoughAirBarrier.CantileverAirBarrier] = "Fail"))

Note that inside the ALL() function is the entire "RoughInspections_merged" table, not a specific column in that table.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@jscottNRG, this looks pretty complex. A couple of things come to mind right away:

You have a LOT of bi-directional relationships. These can sometimes produce results that are unexpected, wrong, or lead to slow measures. I typically avoid bi-directional filters unless they're truly necessary.

You mention that Jobs is a fact table, and you're relating it to other fact tables (which is likely why you need the bi-directional filters). This is NOT a best practice when designing your data model. Fact tables should relate to dimension tables. There are other ways to use DAX to "harvest" a value from another fact table (typically using FILTER() and SELECTEDVALUE() or variables).

There are a lot of relationships, period. Are ALL of these tables necessary? Can you group/combine/merge some of these tables together in Query Editor?

You may want to use some SQL to join some of these tables into a single fact table. Aim for a star schema.

The community (myself included) is willing to help you, but you'll need to provide us some more information first. Can you post the file on dropbox? If not, can you make the data anonymous first? Can you send a screenshot of each of the tables that are involved in the measures / calculated tables you're looking for?

It's extremely hard to troubleshoot DAX when we don't know what the data looks like, or what the result should be.

Cheers,

~ Chris

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Wow @Anonymous thank you again for your thorough guidance ond offer to help on this!

I've tried to make the changes you suggested:

- join fact tables in the Query Editor to reduce the number of relationships (i.e. instead of using relationships between RoughInspections and RoughAirBarrier, RoughAirSealing, RoughCustom, etc. they are joined at the query stage into a fact table, "RoughInspections_merged")

- switch bi-directional relationships cross filter direction from "Both" to "Single" where possible (there are some where PBI says "the cardinality you selected isn't valid for this relationship" when I try to do this and I don't understand why)

- use dimension tables to relate to fact tables for slicer use -- there are still a few fact-to-fact table relationships that I'm not sure how to properly fix

Still, after these changes, my "Rough Inspection Results" page takes between 50-60 seconds to update visuals when a slicer selection is made. I've made an anonymous dataset and uploaded my Power BI file to Google Drive so others can access to review. Note that I first made the changes you recommended to the "Rough" part of the dataset; there are a lot of measures to update so I wanted to see if I could improve the performance before editing the rest. I deleted the relationships on the "Final" side so I could be sure that it wasn't those relationships slowing things down.

Please let me know if I can explain anything or if any part of my problem is unclear. I'm hopeful that the performance of my report can be improved, but maybe I'm just asking too much of Power BI? Thanks again to the Power BI community for helping a rookie like myself out!

Jonathan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Adding a comment to bump this to the top of the forum homepage, I'm still looking for help determining what issues remain in my report.

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 109 | |

| 99 | |

| 77 | |

| 66 | |

| 54 |

| User | Count |

|---|---|

| 144 | |

| 104 | |

| 102 | |

| 87 | |

| 64 |