- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: Improving formula engine performance

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Improving formula engine performance

I have a query that's taking 55 seconds for the FE and 1 for the SE

DAX Studio obviously gives me the SQL and SE timings, but I can't see how to either

a) see what's taking 55 seconds in the FE

b) optimise FE performance

The query only includes 1 calculated column (basically: if x isblank, "no", "yes") and 1 measure (sum sales). The other fields are straight from the dwh.

Is there a way of improving FE performance?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Have you messed around with the Power Query diagnostic options (Tools tab) or DAX Performance Analyzer (View tab)?

@ me in replies or I'll lose your thread!!!

Instead of a Kudo, please vote for this idea

Become an expert!: Enterprise DNA

External Tools: MSHGQM

YouTube Channel!: Microsoft Hates Greg

Latest book!: The Definitive Guide to Power Query (M)

DAX is easy, CALCULATE makes DAX hard...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @Greg_Deckler - thanks again for your help

I've used the DAX PA to generate performance data for export to DAX Studio, and have been running queries there - following the approach of commenting out fields to see what's causing issues.

Haven't used PQ diagnostic tracing - will need to learn more about it. Does it help query optimisation?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, it provides a breakdown of basically each step of the query and how long it is taking.

@ me in replies or I'll lose your thread!!!

Instead of a Kudo, please vote for this idea

Become an expert!: Enterprise DNA

External Tools: MSHGQM

YouTube Channel!: Microsoft Hates Greg

Latest book!: The Definitive Guide to Power Query (M)

DAX is easy, CALCULATE makes DAX hard...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, it provides a breakdown of basically every step in your query and how long it is taking.

@ me in replies or I'll lose your thread!!!

Instead of a Kudo, please vote for this idea

Become an expert!: Enterprise DNA

External Tools: MSHGQM

YouTube Channel!: Microsoft Hates Greg

Latest book!: The Definitive Guide to Power Query (M)

DAX is easy, CALCULATE makes DAX hard...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Query diagnostics will help you troubleshoot your power query refresh, but not your DAX expressions. Can you post your DAX expression so we can propose optimization changes?

Regards,

Pat

Did I answer your question? Mark my post as a solution! Kudos are also appreciated!

To learn more about Power BI, follow me on Twitter or subscribe on YouTube.

@mahoneypa HoosierBI on YouTube

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

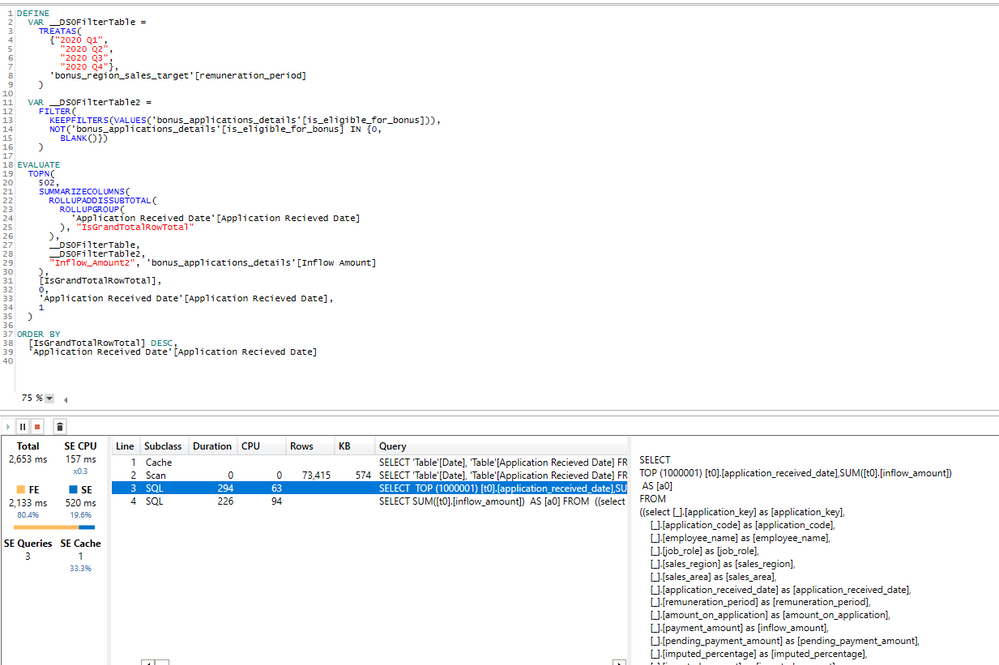

Thanks @mahoneypat - here's the DAX that's taking 56 seconds to run

DEFINE

VAR __DS0FilterTable =

TREATAS(

{"2020 Q1",

"2020 Q2",

"2020 Q3",

"2020 Q4"},

'bonus_region_sales_target'[remuneration_period]

)

VAR __DS0FilterTable2 =

FILTER(

KEEPFILTERS(VALUES('bonus_applications_details'[is_eligible_for_bonus])),

NOT('bonus_applications_details'[is_eligible_for_bonus] IN {0,

BLANK()})

)

EVALUATE

TOPN(

502,

SUMMARIZECOLUMNS(

ROLLUPADDISSUBTOTAL(

ROLLUPGROUP(

'Application Received Date'[Application Recieved Date],

'bonus_applications_details'[application_code],

'bonus_applications_details'[fundraise_name],

'bonus_applications_details'[investor_name],

'adviser_contacts'[adviser_code],

'adviser_contacts'[adviser_name],

'current_intermediaries'[intermediary_code],

'current_intermediaries'[company_name],

'bonus_applications_details'[amount_on_application],

'bonus_applications_details'[pending_payment_amount],

'bonus_applications_details'[imputed_percentage],

'dbt_marts oisales__bonus_accelerators'[activation_type],

'bonus_applications_details'[sales_region],

'bonus_applications_details'[remuneration_period],

'bonus_applications_details'[investor_code],

'bonus_applications_details'[is_cleared],

'bonus_bdm_sales_target'[employee_name]

), "IsGrandTotalRowTotal"

),

__DS0FilterTable,

__DS0FilterTable2,

"Amount", 'bonus_applications_details'[Amount]

),

[IsGrandTotalRowTotal],

0,

'Application Received Date'[Application Recieved Date],

1,

'bonus_applications_details'[application_code],

1,

'bonus_applications_details'[fundraise_name],

1,

'bonus_applications_details'[investor_name],

1,

'adviser_contacts'[adviser_code],

1,

'adviser_contacts'[adviser_name],

1,

'current_intermediaries'[intermediary_code],

1,

'current_intermediaries'[company_name],

1,

'bonus_applications_details'[amount_on_application],

1,

'bonus_applications_details'[pending_payment_amount],

1,

'bonus_applications_details'[imputed_percentage],

1,

'dbt_marts oisales__bonus_accelerators'[activation_type],

1,

'bonus_applications_details'[sales_region],

1,

'bonus_applications_details'[remuneration_period],

1,

'bonus_applications_details'[investor_code],

1,

'bonus_applications_details'[is_cleared],

1,

'bonus_bdm_sales_target'[employee_name],

1

)

ORDER BY

[IsGrandTotalRowTotal] DESC,

'Application Received Date'[Application Recieved Date],

'bonus_applications_details'[application_code]/*,

'bonus_applications_details'[fundraise_name],

'bonus_applications_details'[investor_name],

'adviser_contacts'[adviser_code],

'adviser_contacts'[adviser_name],

'current_intermediaries'[intermediary_code],

'current_intermediaries'[company_name],

'bonus_applications_details'[amount_on_application],

'bonus_applications_details'[pending_payment_amount],

'bonus_applications_details'[imputed_percentage],

'dbt_marts oisales__bonus_accelerators'[activation_type],

'bonus_applications_details'[sales_region],

'bonus_applications_details'[remuneration_period],

'bonus_applications_details'[investor_code],

'bonus_applications_details'[is_cleared],

'bonus_bdm_sales_target'[employee_name]*/

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I'm stripping back the DAX to see how it's translated into SQL and can see that even if I'm only querying two columns - date and amouunt - the SQL generated is querying every single field in the fact table

I can't see a reason to query fields that aren't required. Does this indicate something wrongly configured in Power BI or Synapse?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Anonymous Perhaps we should back up. What does your source data look like and what are you trying to accomplish? I feel like we are trying to bail water when we could just plug the hole...

Please see this post regarding How to Get Your Question Answered Quickly: https://community.powerbi.com/t5/Community-Blog/How-to-Get-Your-Question-Answered-Quickly/ba-p/38490

The most important parts are:

1. Sample data as text, use the table tool in the editing bar

2. Expected output from sample data

3. Explanation in words of how to get from 1. to 2.

@ me in replies or I'll lose your thread!!!

Instead of a Kudo, please vote for this idea

Become an expert!: Enterprise DNA

External Tools: MSHGQM

YouTube Channel!: Microsoft Hates Greg

Latest book!: The Definitive Guide to Power Query (M)

DAX is easy, CALCULATE makes DAX hard...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Here's a go at asking the question in a better way

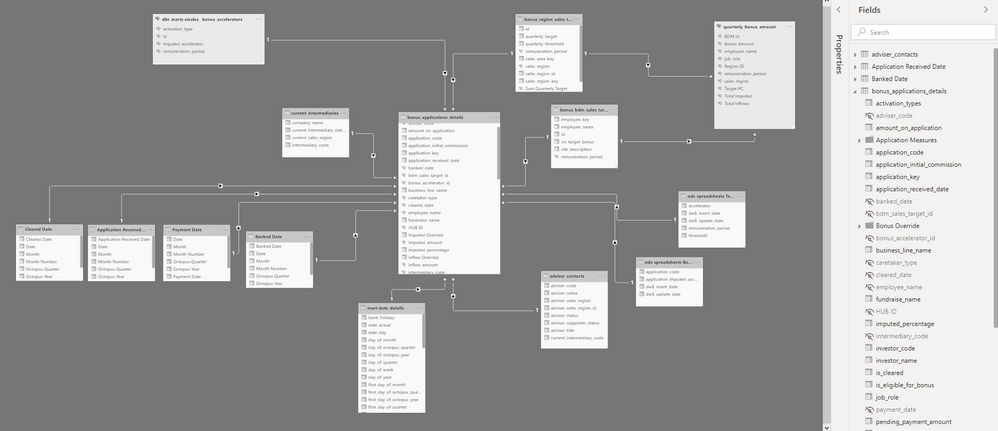

I'm using a composite model, with the main fact table in DirectQuery and other tables (e.g. date) in Import. Here's the model:

It has about 250k rows and about 40 columns, of which we keep about 30 and then make some measures on top.

Because it's DQ, I'm not sure extracting a sample, converting to import and sharing would replicate the same performance we see here - or would it?

The fact table contains sales with data regarding bonuses - sale id, customer info, employee info, regional info, multiple dates, amounts, bonus codes (e.g. bonus multiplier id).

The other tables are mainly date tables (we haven't implemented role playing dimensions yet) and bonus tables (multipliers and targets).

What I'm trying to solve here is to show sales ("applications") that haven't been processed ("cleared"), and to calculate the bonus multiplier for them by looking into a couple of other tables with bonus multiplier values.

E.g.

| sale_id | bonus period | employee name | customer | campaign | region | sale amount | bonus eligibility | bonus multiplier | bonus amount | ... |

I've put in red the fields that are causing most problems:

- employee name - it's just a field in the source table, but taking it out of the visual drops the query time from 55 seconds to 7 seconds

- bonus multiplier (measure) - it's a couple of lookups to other tables in the data model (DAX below) but I run out of available resources while refreshing the visual

- bonus amount (measure) - very simple product of sale amount and bonus multiplier, but fails based on the multiplier

The sale amount is also a measure:

Bonus multiplier =

var default = CALCULATE(

MAX('dbt_marts sales__bonus_multipliers'[bonus_multiplier]),

FILTER(

ALL('dbt_marts sales__bonus_multipliers'[id]),

'dbt_marts sales__bonus_multipliers'[id] = SELECTEDVALUE(bonus_applications_details[bonus_multiplier_id])

)

)

var override = CALCULATE(

MAX('ods_spreadsheets sales__bonus_overrides'[bonus_override]),

FILTER(

ALL('ods_spreadsheets sales__bonus_overrides'[application_code]),

'ods_spreadsheets sales__bonus_overrides'[application_code] = SELECTEDVALUE(bonus_applications_details[application_code])

)

)

return

if(not(ISBLANK(override)),override,default)

(is there a reason the code snippet can't format DAX??)

This is doing a couple of lookups to get a default bonus multiplier (based on the sale period) and checking for a sale-level override (based on the sale id. These are small lookup tables, but adding this field results in the resources being exceeded.

If I add the measure, the visual says "ran out of available resources".

Maybe it's a problem in Synapse (why would the SQL be querying fields that I don't use in the visual...?)

Maybe it's a problem in the data model (but even just bringing back a field from the source table takes almost a minute...)

Maybe it's a problem in my query (but I'm using small tables and I've tried to use FILTER efficiently)

I appreciate you have lives and jobs, so honestly any tips would be hugely appreciated and if this is an unreasonable or badly worded request for help, I get it.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thought I'd post here to share what we did, in case this post is found again

We made changes in Synapse and Power BI, and combined they fixed the performance issue

In Synapse, we had been just replicating tables from BigQuery as part of a migration from GCP to Azure. We hadn't properly configured fields (e.g. size), turned on caching or made done anything to data distribution

In Power BI we were using measures where we should have used calculated columns

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

One quick suggestion to try is to replace your second variable

VAR __DS0FilterTable2 =

FILTER(

KEEPFILTERS(VALUES('bonus_applications_details'[is_eligible_for_bonus])),

NOT('bonus_applications_details'[is_eligible_for_bonus] IN {0,

BLANK()})

)with

VAR __DS0FilterTable2 =

Filter(Keepfilters(values('bonus_applications-details'[is_eligible_for_bonus])), 'bonus_applications-details'[is_eligible_for_bonus]>0)

Also, can you post the original measure from Power BI, not the MDX from DAX Studio? I'm not confident that will make it clearer but worth a try.

Regards,

Pat

Did I answer your question? Mark my post as a solution! Kudos are also appreciated!

To learn more about Power BI, follow me on Twitter or subscribe on YouTube.

@mahoneypa HoosierBI on YouTube

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 114 | |

| 99 | |

| 82 | |

| 70 | |

| 61 |

| User | Count |

|---|---|

| 149 | |

| 114 | |

| 107 | |

| 89 | |

| 67 |