- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Flatten audit table

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Flatten audit table

Hi, I'm a newbie in Power BI (3rd day) and wondering how you guys solve following situation. I have something in database that you could call audit table:

| id | original_id | status_1 | flag_1 | flag_2 | update_date |

| 1 | 0 | Confirmed | True | True | 2020-12-02 |

| 2 | 1 | Pending | True | False | 2020-12-01 |

Current entity state is with original_id = 0, when original_id is not 0, that means historical state, in original table there's way more statuses and flags.

I'm using "import" approach for dataset and have 40k new rows a day, 1.5milion in total.

I need to show dynamics based on date how by counting statuses and flags, like in past month each day we had so much items in pending state.

I think I could achieve that by adjusting query to database, but probably that would use CTE and would not perform very well (I can't change underlyging database structure).

Or may be I could somehow transform data in Power BI query, to have query output as something like this:

| id | status_1_confirmed_date | flag_1_true_date | flag_2_true_date |

| 1 | 2020-12-02 | 2020-12-01 | 2020-12-02 |

I think this pattern should be quite common, I'm not sure if flattening is correct word for that, so if there's a name for this pattern, I would happy to read more, if there are several alternatives to approach it, I would be happy to know that too with cons and pros.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @gban

Based on your description, I created data to reproduce your scenario. The pbix file is attached in the end.

Table:

You may paste the following m codes in 'Advanced Editor' to apply transformations to the query.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlTSUTIAYuf8vLTMotzUFCA7pKg0FUEZGRgZ6Boa6RoYKcXqRCsZAYVAmgJS81Iy89IR6twSc4pR1BuC1RtjqIcpRNVgqGtsANZgQrQGI0ul2FgA", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [id = _t, original_id = _t, #"status_1 " = _t, flag_1 = _t, flag_2 = _t, update_date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"id", Int64.Type}, {"original_id", Int64.Type}, {"status_1 ", type text}, {"flag_1", type logical}, {"flag_2", type logical}, {"update_date", type date}}),

Custom1 = Table.SelectRows(#"Changed Type",each [original_id]=0),

#"Added Custom" = Table.AddColumn(Custom1, "Custom", each

let l = List.Sort( Table.SelectRows(#"Changed Type",each [original_id]<>0)[update_date],Order.Ascending)

in

Record.FromList(

l,

List.Transform(

List.Transform(l,each List.Count( List.Select(l,(x)=>x<_))+1) ,

(y)=> "flag_"&Text.From(y)&"_true_date")

)),

#"Expanded Custom" = Table.ExpandRecordColumn(#"Added Custom", "Custom", {"flag_1_true_date", "flag_2_true_date", "flag_3_true_date"}, {"flag_1_true_date", "flag_2_true_date", "flag_3_true_date"}),

#"Removed Columns" = Table.RemoveColumns(#"Expanded Custom",{"original_id", "status_1 ", "flag_1", "flag_2"}),

#"Renamed Columns" = Table.RenameColumns(#"Removed Columns",{{"update_date", "confirm_date"}})

in

#"Renamed Columns"

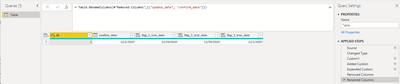

Result:

Best Regards

Allan

If this post helps, then please consider Accept it as the solution to help the other members find it more quickly.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi, @gban

Based on your description, I created data to reproduce your scenario. The pbix file is attached in the end.

Table:

You may paste the following m codes in 'Advanced Editor' to apply transformations to the query.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("i45WMlTSUTIAYuf8vLTMotzUFCA7pKg0FUEZGRgZ6Boa6RoYKcXqRCsZAYVAmgJS81Iy89IR6twSc4pR1BuC1RtjqIcpRNVgqGtsANZgQrQGI0ul2FgA", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [id = _t, original_id = _t, #"status_1 " = _t, flag_1 = _t, flag_2 = _t, update_date = _t]),

#"Changed Type" = Table.TransformColumnTypes(Source,{{"id", Int64.Type}, {"original_id", Int64.Type}, {"status_1 ", type text}, {"flag_1", type logical}, {"flag_2", type logical}, {"update_date", type date}}),

Custom1 = Table.SelectRows(#"Changed Type",each [original_id]=0),

#"Added Custom" = Table.AddColumn(Custom1, "Custom", each

let l = List.Sort( Table.SelectRows(#"Changed Type",each [original_id]<>0)[update_date],Order.Ascending)

in

Record.FromList(

l,

List.Transform(

List.Transform(l,each List.Count( List.Select(l,(x)=>x<_))+1) ,

(y)=> "flag_"&Text.From(y)&"_true_date")

)),

#"Expanded Custom" = Table.ExpandRecordColumn(#"Added Custom", "Custom", {"flag_1_true_date", "flag_2_true_date", "flag_3_true_date"}, {"flag_1_true_date", "flag_2_true_date", "flag_3_true_date"}),

#"Removed Columns" = Table.RemoveColumns(#"Expanded Custom",{"original_id", "status_1 ", "flag_1", "flag_2"}),

#"Renamed Columns" = Table.RenameColumns(#"Removed Columns",{{"update_date", "confirm_date"}})

in

#"Renamed Columns"

Result:

Best Regards

Allan

If this post helps, then please consider Accept it as the solution to help the other members find it more quickly.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks a lot Allan, I'm glad I've asked as I don't see any chance I could come up with this by my self with such solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Looks like a Slowly Changing Dimension.

You can read about the theory by web search if you like. (different types, pros/cons)

Your data scenario looks a little more complicated with all the different flags to represent the state of an object.

Does the original id = 0 record represent the complete state of an object? ->

and Is a id =0 record generated every day for every object?

Are you only interested in reporting on the id = 0 record?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks, I'll read about slowly changing dimension.

Meanwhile I'll try to answer questions to see if that might lead to some other suggestions.

Yes, original id = 0 represents the complete state of an object.

No, rows are not generated every day for every object, new row is created only when user creates new entity or updates of existing entity. Now when I think about it probably original_id is a misleading column name, current_id would be better one - I don't know implementation details, but I guess it following happens when user updates a row:

1) current row values are copyed to new row

2) updated values are applied on new row

3) old row original id (which I would name current id) is updated to point to new row.

Which would give following:

1) There might be no history records for a row (if it was never updated)

2) There might be more than 1 history records during 1 day (if row was updated multiple times during the day), in such case only latest state during that day would be interesting. It is not likely, that state would change let's say from Pending to Confirmed and back to Pending, even if it was, I don't care about those exceptions. You can see it as Sales Order - Pending, Confirmed, Shipped - it might go back to Pending sometimes, but that's anomaly I'm fine to ignore.

So given the Sales order analogy - I need to know how many Pending orders were each day, how many Confirmed orders were each day, how many Shipped orders - so that I would see if there's any bottleneck in specfic state and correlations with other flags/parameters. So answering your last question - ideally I would like to turn historic records into additional columns for original id = 0 row, that would make visualization easy.

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 109 | |

| 98 | |

| 77 | |

| 66 | |

| 54 |

| User | Count |

|---|---|

| 144 | |

| 104 | |

| 101 | |

| 86 | |

| 64 |