- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: Find & count certain for each column

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Find & count certain values for each column

Hi all,

My first post here, did some searching but could not find anything that resembles what I'd like to have.

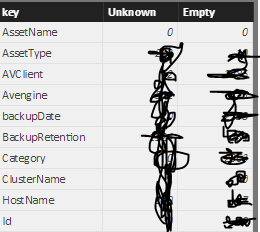

I am working on data that has some shortcomings: many cells are "Unknown", "?", "??" or NULL, 0, Empty. Now, I'd like to count how often such values occur per column. I have created something that kinda does what I want. I have copied the existing data source and then used R to do this:

# 'dataset' holds the input data for this script

output2 <- dataset

library(tidyverse)

unknown_values = c("Unknown", "UNKNOWN", "???", "??", "?")

isUnknown <- function(x){

x %in% unknown_values

}

empty_values = c("0", 0, " ", "", NULL, "NULL")

isEmpty <- function(x){

x %in% empty_values

}

output2 = output2 %>%

filter(Status == "Production") %>%

select(-one_of(c("OSStd", "OSSPStd"))) %>% # replace to your needs

gather() %>%

group_by(key) %>%

summarise_all(

funs(

'Unknown' = sum(isUnknown(.)),

'Empty' = sum(isEmpty(.))

)

) %>%

as.data.frame

This solution works but I would also like to filter using the dataframe that my R code spits out which unfortunately does not work out of the box. Makes sense however, because I have two dataframes that are not connected.

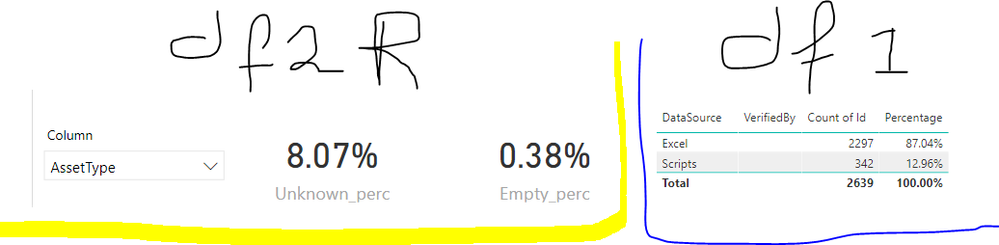

An example: a column in the original dataframe (1) is AssetType and another column in datasource (marked in blue).

AssetType

Server

Desktop

0

Empty

Null

Workstation

...

My R code creates a row in a secondary dataframe (2, marked in yellow) as follows:

key -- Unknown -- Empty

AssetType -- 201 -- 16

Now what I would like to happen, is that the table marked in blue only counts the rows where assetType is empty or unknown, which comes down to approx. 8.5% of 2639 = 222 in total. I could do this by making two measures (one for unknown, another one for empty) for each column that I have in dataframe 1, although I am not sure how I would have to select columns in a similar way as shown in the yellow part of the image above.

Is there a better way to do this or a way to link the two dataframes so that the filters will be applied preferably in a bidirectional way?

Kind regards,

Jacco

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I find it a bit difficult to understand what are you trying to achieve, can you post sample few rows from the both tables (anonymised)

Thank you for the kudos 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Df1 contains rows with info about systems. Df2 contains rows that in essence are counts of the columns from Df1.

Regards

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

ok, so you want to count rows in Dataframe1, that meet certain criteria

in DAX this can be achieved like this

AssetType_Empty= CALCULATE(COUNTROWS(DF1),DF1[AssetType] = BLANK()) AssetType_Unknown= CALCULATE(COUNTROWS(DF1),DF1[AssetType] = "Unknown")

assuming Dataframe2 is supposed to only serve as a source for the dropdown in the yellow visual, the following syntax would work

Empty =

VAR ColumnName = SELECTEDVALUE(DF2[key])

RETURN

SWITCH(ColumnName,

"AssetType",[AssetType_Empty],

"Other column",[Other column_Empty],

BLANK()

)then very similiar measure for Unknown

Is this what you were looking for, or do you look for a way to modify values in DF2?

Thank you for the kudos 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Stachu,

Thanks for your help. Using your solution, I'd have to manually create two measures (x_empty & x_unknown) for each column x I guess? And for an overall accuracy / integrity measure i'd have to combine those measures into a single score. That could work although it would be quite a hassle with 35 rows hehe.

Kind regards

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

you could create just 2 measures, and include the column specific ones as variables

Empty =

VAR ColumnName = SELECTEDVALUE(DF2[key])

VAR AssetType_Empty = CALCULATE(COUNTROWS(DF1),DF1[AssetType] = BLANK())

VAR Other_column_Empty = CALCULATE(COUNTROWS(DF1),DF1[Other_column] = BLANK())

RETURN

SWITCH(ColumnName,

"AssetType",AssetType_Empty,

"Other column",Other_column_Empty,

BLANK()

)if you could unpivot the columns so they would all be in 2 columns like ColumnName & ColumnValue then it's just 2 very simple measures. This approach requires some drastic changes to your data model

Thank you for the kudos 🙂

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks again, I am not sure whether I have made myself as clear as I would like so I'll try to rephrase. What I need is a score for data quality for both the whole dataframe(df1) and every single column in there. My R code simply counts the occurence of empty or unknown per column and saves those two counts in a second dataframe. However, the two df's have different structures and therefore they do not support cross-filtering.

What I'd like to have is that I can select a column (e.g. AssetType) using a slicer and that when I apply a filter (e.g. AVClient = "McAfee") the metrics update and only shows the unknown and empty counts for each row where AVClient equals McAfee.

However, if I deselect the AssetType column, it should show a score for the whole dataframe where AVClient = McAfee. I guess this requires looping over every cell in the df or calculating two scores for each column beforehand.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

what you're describing in regards to filters is a default behaviour, and assuming I understand you correctly this is how measure works now

so e.g.

you filter AssetType out of DF2 column Key to show Empty in AssetTpe column

whatever kind of filter you apply on DF1 (e.g. slicer DF1[AVClient] = McAfee) will affect the result of the Measure I posted

Thank you for the kudos 🙂

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 118 | |

| 107 | |

| 70 | |

| 70 | |

| 43 |

| User | Count |

|---|---|

| 148 | |

| 104 | |

| 104 | |

| 89 | |

| 66 |