- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: Earliest and latest timestamp

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Earliest and latest timestamp

I have a query that pulls excel files from a sharepoint link where all files named with the format yy.MM.dd.hhmm.xlsx

for each day there can range from 0 to 100+ files for each day for example:

22.05.23.0600.xlsx

22.05.23.0714.xlsx

22.05.23.1239.xlsx

22.05.23.1601.xlsx

22.05.24.0500.xlsx

22.05.24.1239.xlsx

22.05.24.1601.xlsx

22.05.25.1600.xlsx

I was able to pull a query which converts the file name into a date time format but I need to compile the records of each day's first of the day and latest of each day. If there is only 1 record for the day it should only pull once

So for above the results should pull records for

22.05.23.0600.xlsx

22.05.23.1601.xlsx

22.05.24.0500.xlsx

22.05.24.1601.xlsx

22.05.25.1600.xlsx

I am pretty sure I need to create a DAX formula using calculate and filters but not sure how the approach should be.

Any help is appreciated.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

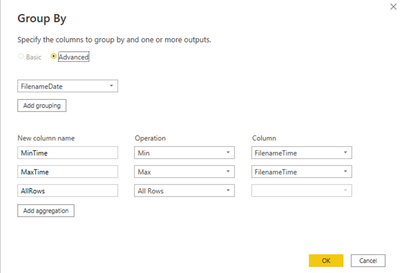

This sounds like a task better suited for Power Query. The solution below imports only the first and last file for each day. The concept is to use the Group By function in Power Query to get the Min and Max time for each date, and then flag those files for import (excluding the other files). The M code below would be extended to include the steps to import the selected files.

let

Source = Table.FromRows(

Json.Document(

Binary.Decompress(

Binary.FromText(

"i45WMjLSMzDVMzLWMzAzMNCryCmuUIrVQRY2NzTBImxoZGyJTdjMwBBD2ARIYjHbBLshJtgNMQUJwwyJBQA=",

BinaryEncoding.Base64

),

Compression.Deflate

)

),

let

_t = ((type nullable text) meta [Serialized.Text = true])

in

type table [Filename = _t]

),

ChangeType = Table.TransformColumnTypes(Source, {{"Filename", type text}}),

AddFilenameParts = Table.AddColumn(ChangeType, "FilenameParts", each [Filename]),

ReplaceValue = Table.ReplaceValue(

AddFilenameParts,

".xlsx",

"",

Replacer.ReplaceText,

{"FilenameParts"}

),

SplitColumn = Table.SplitColumn(

ReplaceValue,

"FilenameParts",

Splitter.SplitTextByEachDelimiter({"."}, QuoteStyle.Csv, true),

{"FilenameParts.1", "FilenameParts.2"}

),

ChangedType2 = Table.TransformColumnTypes(

SplitColumn,

{{"FilenameParts.1", type text}, {"FilenameParts.2", Int64.Type}}

),

RenameColumns = Table.RenameColumns(

ChangedType2,

{{"FilenameParts.1", "FilenameDate"}, {"FilenameParts.2", "FilenameTime"}}

),

GroupRows = Table.Group(

RenameColumns,

{"FilenameDate"},

{

{"MinTime", each List.Min([FilenameTime]), type nullable number},

{"MaxTime", each List.Max([FilenameTime]), type nullable number},

{

"AllRows",

each _,

type table [

Filename = nullable text,

FilenameDate = nullable text,

FilenameTime = nullable number

]

}

}

),

ExpandAllRows = Table.ExpandTableColumn(

GroupRows,

"AllRows",

{"Filename", "FilenameTime"},

{"Filename", "FilenameTime"}

),

AddIsMinOrMaxTime = Table.AddColumn(

ExpandAllRows,

"IsMinOrMaxTime",

each if [FilenameTime] = [MinTime] or [FilenameTime] = [MaxTime] then 1 else 0

),

FilterRows = Table.SelectRows(AddIsMinOrMaxTime, each ([IsMinOrMaxTime] = 1))

in

FilterRows

Screenshot of the Group By screen:

Result:

Did I answer your question? Mark my post as a solution!

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This sounds like a task better suited for Power Query. The solution below imports only the first and last file for each day. The concept is to use the Group By function in Power Query to get the Min and Max time for each date, and then flag those files for import (excluding the other files). The M code below would be extended to include the steps to import the selected files.

let

Source = Table.FromRows(

Json.Document(

Binary.Decompress(

Binary.FromText(

"i45WMjLSMzDVMzLWMzAzMNCryCmuUIrVQRY2NzTBImxoZGyJTdjMwBBD2ARIYjHbBLshJtgNMQUJwwyJBQA=",

BinaryEncoding.Base64

),

Compression.Deflate

)

),

let

_t = ((type nullable text) meta [Serialized.Text = true])

in

type table [Filename = _t]

),

ChangeType = Table.TransformColumnTypes(Source, {{"Filename", type text}}),

AddFilenameParts = Table.AddColumn(ChangeType, "FilenameParts", each [Filename]),

ReplaceValue = Table.ReplaceValue(

AddFilenameParts,

".xlsx",

"",

Replacer.ReplaceText,

{"FilenameParts"}

),

SplitColumn = Table.SplitColumn(

ReplaceValue,

"FilenameParts",

Splitter.SplitTextByEachDelimiter({"."}, QuoteStyle.Csv, true),

{"FilenameParts.1", "FilenameParts.2"}

),

ChangedType2 = Table.TransformColumnTypes(

SplitColumn,

{{"FilenameParts.1", type text}, {"FilenameParts.2", Int64.Type}}

),

RenameColumns = Table.RenameColumns(

ChangedType2,

{{"FilenameParts.1", "FilenameDate"}, {"FilenameParts.2", "FilenameTime"}}

),

GroupRows = Table.Group(

RenameColumns,

{"FilenameDate"},

{

{"MinTime", each List.Min([FilenameTime]), type nullable number},

{"MaxTime", each List.Max([FilenameTime]), type nullable number},

{

"AllRows",

each _,

type table [

Filename = nullable text,

FilenameDate = nullable text,

FilenameTime = nullable number

]

}

}

),

ExpandAllRows = Table.ExpandTableColumn(

GroupRows,

"AllRows",

{"Filename", "FilenameTime"},

{"Filename", "FilenameTime"}

),

AddIsMinOrMaxTime = Table.AddColumn(

ExpandAllRows,

"IsMinOrMaxTime",

each if [FilenameTime] = [MinTime] or [FilenameTime] = [MaxTime] then 1 else 0

),

FilterRows = Table.SelectRows(AddIsMinOrMaxTime, each ([IsMinOrMaxTime] = 1))

in

FilterRows

Screenshot of the Group By screen:

Result:

Did I answer your question? Mark my post as a solution!

Proud to be a Super User!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

This works perfectly, thank you!

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 114 | |

| 100 | |

| 78 | |

| 75 | |

| 52 |

| User | Count |

|---|---|

| 144 | |

| 109 | |

| 108 | |

| 88 | |

| 61 |