- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Re: DAX measures to do Partial Match and Join betw...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

DAX measures to do Partial Match and Join between UNRELATED Tables

I have 2 tables that i have imported to Excel 2016 PowerPivot Data Model.

Input Table (unpivoted):

UPC Attribute Value 1 Char1 OIL FREE 1 Char2 NO GLUTEN 1 Char3 ZERO PARABEN 1 Char4 HYPO ALLERGENIC 1 Char5 NO SODIUM; NO OIL; ORGANIC 1 Char8 NOT 2 Char6 CONTAINS JOJOBA OIL 3 Char2 NO GLUTEN 5 Char4 DOES NOT CONTAIN COMMON ALLERGENS 5 Char8 TO 7 Char1 FREE OF OIL 7 Char3 NO PARABEN 7 Char5 ZERO OIL; ZERO PARABEN; NO GLUTEN 8 Char8 BE 9 Char6 HAS NO OIL 10 Char2 GLUTEN FREE 10 Char3 FREE OF PARABEN 10 Char4 HYPOALLERGENIC 11 Char7 3% SODIUM 12 Char4 HYPO-ALLERGENIC 12 Char8 USED 13 Char1 CONTAINS NO OIL 14 Char4 HYPO ALLERGENIC 15 Char8 ANY 16 Char5 FREE OF GLUTEN 17 Char1 OIL FREE 18 Char1 OIL FREE 18 Char8 MORE 20 Char1 DOES NOT HAVE OIL 20 Char7 NO OIL

WordsList Table:

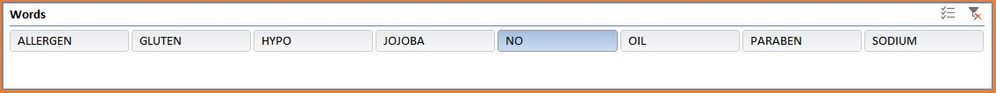

Words PARABEN OIL NO HYPO ALLERGEN SODIUM GLUTEN JOJOBA

I have to do a Partial Match of each Word in the WordsList table with the Value column in the InputTable using DAX and not Power Query. I have 2 Pivot Tables as shown below that i need to update with measures:

1st Pivot (based on WordList table, Words column) :

Words DistinctCountOfUPC %DistinctCountOfUPC ------------------------------------------------------------------------ OIL 8 53.33% NO 7 46.67% GLUTEN 5 33.33% ALLERGEN 5 33.33% HYPO 4 26.67% PARABEN 3 20.00% SODIUM 2 13.33% JOJOBA 1 6.67% ------------------------------------------------------------------------ Grand Total 20 100.00% ------------------------------------------------------------------------

2nd Pivot (based on InputTable - Attribute & Value columns):

This table gets filtered based on what Word gets selected in a Slicer shown below.

Attribute Value Count of Value ------------------------------------------------------------------------------- Char5 ZERO OIL; ZERO PARABEN; NO GLUTEN 1 Char5 NO SODIUM; NO OIL; ORGANIC 1 Char1 DOES NOT HAVE OIL 1 Char1 CONTAINS NO OIL 1 Char2 NO GLUTEN 2 Char6 HAS NO OIL 1 Char7 NO OIL 1 Char3 NO PARABEN 1 Char4 DOES NOT CONTAIN COMMON ALLERGENS 1 ------------------------------------------------------------------------ Grand Total 10 ------------------------------------------------------------------------

Slicer :

I can do a Partial Match in Power Query and then load to Data Model, but it will increase the number of Rows from 5M (50,954 Rows x 116 Columns) to 236 M (50,954 Rows x 116 Columns x 40 Words) i.e. for my original data and take an entire day to load to Data Model. So trying this way.

The problem i am facing is :

- How do i do a partial match between the 2 UNRELATED tables and find out which Words from WordList table partially match with Value column from InputTable?

- How do i join the 2 tables together so that the WordsList[Words] Slicer may filter the Value Column of the InputTable without getting a Circular Dependency error?

- How do i get a Distinct Count of the UPCs and their Percentage of GrandTotal, that contain those Words for Pivot 1?

- How do i get a Count of Value and %Count of Value for Value Column of Input Table?

- Is there a way to calculate these as Measures and not as Calculated Columns?

Any help would be most appreciated.

Thanks.

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

So you could use a pattern like the following for the Count of UPC and Value count measures. It seems to work on the small sample data set in this post, but I would be really concerned about the performance over larger datasets

Distinct UPC Count = COUNTROWS(

CALCULATETABLE(

DISTINCT(Input[UPC])

, FILTER(Input, IFERROR(SEARCH(SELECTEDVALUE(Wordslist[Words],""),Input[value]),-1)> 0)

)

)Value Count = COUNTROWS(

CALCULATETABLE(

Input

, FILTER(Input, IFERROR(SEARCH(SELECTEDVALUE(Wordslist[Words],""),Input[value]),-1)> 0)

)

)Even though it will take longer to load, you may get much better query performance from the 230M row data set. How often does the data change, can you unpivot it and store it in a database to minimize the manipulation you have to do as you load it? If you are streaming that many rows direct from a database you should be able to load in less than 1.5 hours (assuming you have a 64bit machine otherwise you are likely to run into memory issues)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

So you could use a pattern like the following for the Count of UPC and Value count measures. It seems to work on the small sample data set in this post, but I would be really concerned about the performance over larger datasets

Distinct UPC Count = COUNTROWS(

CALCULATETABLE(

DISTINCT(Input[UPC])

, FILTER(Input, IFERROR(SEARCH(SELECTEDVALUE(Wordslist[Words],""),Input[value]),-1)> 0)

)

)Value Count = COUNTROWS(

CALCULATETABLE(

Input

, FILTER(Input, IFERROR(SEARCH(SELECTEDVALUE(Wordslist[Words],""),Input[value]),-1)> 0)

)

)Even though it will take longer to load, you may get much better query performance from the 230M row data set. How often does the data change, can you unpivot it and store it in a database to minimize the manipulation you have to do as you load it? If you are streaming that many rows direct from a database you should be able to load in less than 1.5 hours (assuming you have a 64bit machine otherwise you are likely to run into memory issues)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi @d_gosbell,

Just to give you a background - It is a 50K data extract that i Unpivot in Power Query, which increases the rows to 5M. Earlier i was doing the Join of a 40 Words Wordlist table with these 5M rows internally in Power Query and then loading to the Excel PowerPivot Data Model, which was making the rows increase to 236M thereby increasing the load time to >24 hours. I think this was happening because i was importing 1 source table from an external file and 2 source tables from within the tool into Power Query and then merging the sources. This design created multiple caches. Now when i moved all the source tables into a single External Source workbook, the load time reduced from >24 hours to 2 hours as a single cache gets created now. To further reduce the load time, i am now not merging the 40 Words Wordlist table with the 5M rows Input table in Power Query, but want to achieve the merge and calculations using DAX. Doing this, i am only loading the 5M rows to PowerPivot Data Model and then do the calculations. Hope you are getting my point.

BTW, i am using Excel 2016 64 bit on Windows 10 64 bit with all the latest updates. SELECTEDVALUE function is not present for Excel so the measures give errors. Is there an Alternative function that can be used as i am currently using FIRSTNONBLANK function which i think is giving me the desired results.

The imported data extract takes 2 minutes to load and then the 2 DAX (UPC Count & Value Count) calculations take some 1-2 minutes to calculate each word (with a 50K Rows x 116 Columns x 40 comparison Words file), but it is a huge improvement! Thanks.

- Can these measures be optimized futher because when i compared with Excel Pivot's default Count, it is much faster than these Counts.

- One place of improvement i see is to not allow the SEARCH function to search any cells in InputTable[Value] that have "~". To get the correct total UPC count, I had replaced nulls in UPC rows in the crosstab InputTable before Unpivoting it. This is because, Unpivot removes all rows having nulls (see yellow highlighted rows in INPUT sheet in Dummy Data.xlsx file), thereby removing some of the UPC rows and reducing the UPC count.

- I can filter the 2nd Pivot on the Value column to remove the "~" sign from rows, but it usually freezes Excel.

- I have been able to modify your measures and add the Grand Totals to both the pivots.

UPC Count:

VAR GT = DISTINCTCOUNT(InputTable[UPC]) RETURN

IF( HASONEFILTER(WordList[Words]),

COUNTROWS(

CALCULATETABLE(

DISTINCT(InputTable[UPC])

, FILTER(InputTable, IFERROR(SEARCH(FIRSTNONBLANK(WordList[Words],""),InputTable[Value]),-1)> 0)

)

)

,

GT

)Value Count:

VAR GT = COUNTA(InputTable[Value]) RETURN

IF(HASONEFILTER(WordList[Words]),

COUNTROWS(

CALCULATETABLE(

InputTable

, FILTER( InputTable, IFERROR(SEARCH(FIRSTNONBLANK(WordList[Words],1), InputTable[Value] ), -1)> 0 )

)

)

,

GT

)Here are the 2 files for your perusal - Sample Tool, Dummy Data.

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 113 | |

| 99 | |

| 75 | |

| 73 | |

| 49 |

| User | Count |

|---|---|

| 146 | |

| 108 | |

| 106 | |

| 90 | |

| 62 |