
Cumulative Total Performance

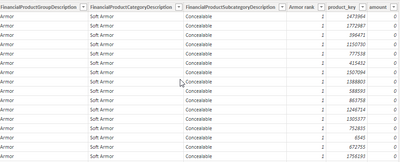

I have been trying to perform an 80/20 (Pareto) analysis on product sales over the past 3 years. The analysis ranks each product by its total sales, provided that total is greater than 0. I have two tables: Products (200k rows, 2 columns) and Sales (171k rows, 6 columns). I need a cumulative total so that I can compare each product's sales amount against it.
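As a reference point, the classification described above can be modelled in a few lines of Python (this is not DAX and does not involve Power BI). It is a minimal sketch under stated assumptions: sales are already totalled per product in a dict, only positive totals are kept, ties are broken by product name, and a product counts as part of the "top" group if the cumulative share *before* it is still under 80%. The function name `pareto_classes` and the sample data are hypothetical.

```python
# Minimal 80/20 (Pareto) sketch in Python, modelling the logic only.
# Assumptions (hypothetical, not from the original model): sales are already
# totalled per product, ties are broken by product name, and a product is in
# the "top" group if the cumulative share *before* it is still under the threshold.

def pareto_classes(sales_by_product, threshold=0.8):
    """Return (product, sales, cumulative_sales, cumulative_share, is_top) rows."""
    # Keep only products with positive total sales.
    positive = {p: s for p, s in sales_by_product.items() if s > 0}
    if not positive:
        return []
    grand_total = sum(positive.values())

    # Sort once: largest sales first, product name as a deterministic tie-breaker.
    ordered = sorted(positive.items(), key=lambda kv: (-kv[1], kv[0]))

    rows = []
    running = 0
    for product, sales in ordered:
        share_before = running / grand_total
        running += sales  # cumulative total including this product
        rows.append((product, sales, running, running / grand_total, share_before < threshold))
    return rows


if __name__ == "__main__":
    demo = {"A": 500, "B": 300, "C": 100, "D": 60, "E": 40, "F": 0, "G": -10}
    for row in pareto_classes(demo):
        print(row)
```

With the sample data, A and B (together 800 of 1,000) form the top group, and F and G are dropped because their totals are not positive. The key point is that the cumulative total comes from one sort plus one running sum.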

My smaller businesses (<1k products) return fine in about 8 minutes. However, my two larger categories (70k products each) run for about 30 minutes. I scaled this model down as far as I could because I thought the full Products and Sales tables were the problem, but I get the same performance with these greatly reduced tables. Plus, this isn't the endpoint: I eventually need to show this in a quad chart and give the user more information for analysis.
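One possible reason larger categories are disproportionately slow is that a cumulative total computed as a per-product scan grows with the square of the product count. The sketch below is only a back-of-the-envelope model and does not measure the Power BI engines: if each product's cumulative total scans every product, 70 times more products means 70 × 70 = 4,900 times more comparisons, while sorting once and keeping a running sum grows roughly as n log2(n). The function names are hypothetical.

```python
import math

# Back-of-the-envelope cost model (an assumption, not a measurement of Power BI):
# - naive: each product's cumulative total scans all n products -> n * n steps
# - sorted: one comparison sort (~n * log2(n)) plus one running-sum pass (~n)

def naive_steps(n: int) -> int:
    """Pairwise comparisons if every product is checked against every product."""
    return n * n


def sorted_steps(n: int) -> int:
    """Rough step count for sort-once-then-running-sum."""
    if n < 2:
        return n
    return round(n * math.log2(n)) + n


if __name__ == "__main__":
    for n in (1_000, 70_000):
        print(f"{n:>6,} products: naive ~{naive_steps(n):,}, sorted ~{sorted_steps(n):,}")
    print("naive growth:", naive_steps(70_000) // naive_steps(1_000))  # 4900
```

The absolute numbers mean little; the point is the growth rate, which is why a formulation that avoids scanning every product for every row tends to matter more than trimming columns.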

Is there any advice on how to make this perform faster? I can't move these calculations into the SQL queries because that would severely limit the flexibility of the analysis tool. Thank you in advance!

(Screenshots: Sales table, Products table)


I believe I've landed on a solution that returns accurate data. This version of the 80/20 analysis looks at total sales and rates each product that has positive (non-zero, non-negative) sales. I used Performance Analyzer to measure the time after each change, and found that using CALCULATETABLE and using SUMMARIZE in the bottom measure perform the same. It still takes more than 220,000 ms to respond, but it returns data both locally and in the Power BI service.


@Anonymous, please refer to this blog post from Matt; it may help:

https://exceleratorbi.com.au/pareto-analysis-in-power-bi/


That helped, and I made some progress. Instead of using RANKX, the measure now takes each product's sales and compares them against the total sales of the other products. This cut the processing time in Performance Analyzer from 22,378 ms to 126 ms. That's a very impressive improvement, but still not enough for the largest categories.
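The rank-free approach described above can be modelled as follows: for each product, add up the sales of every product whose sales are greater than or equal to its own. The hypothetical Python sketch below (not DAX; function names are illustrative) compares that pairwise formulation with an equivalent sort-once version. Note that with the `>=` comparison, tied products receive the same cumulative total, so the sorted version has to add tied products as one group to match.

```python
from itertools import groupby

# Hypothetical model of a rank-free cumulative total: each product's cumulative
# sales = total sales of all products whose sales are >= its own.

def cumulative_by_comparison(sales_by_product):
    """O(n^2): compare each product against every other product."""
    positive = {p: s for p, s in sales_by_product.items() if s > 0}
    return {
        p: sum(other for other in positive.values() if other >= s)
        for p, s in positive.items()
    }


def cumulative_by_sorting(sales_by_product):
    """O(n log n): sort once, then keep a running sum over groups of equal sales."""
    positive = [(p, s) for p, s in sales_by_product.items() if s > 0]
    positive.sort(key=lambda ps: ps[1], reverse=True)
    result = {}
    running = 0
    # Tied products are added as one group so they share a cumulative total,
    # matching the >= comparison above.
    for sales, group in groupby(positive, key=lambda ps: ps[1]):
        members = [p for p, _ in group]
        running += sales * len(members)
        for p in members:
            result[p] = running
    return result


if __name__ == "__main__":
    demo = {"A": 500, "B": 300, "C": 300, "D": 100, "E": 0}
    print(cumulative_by_comparison(demo))
    print(cumulative_by_sorting(demo))
```

Both functions give the same answer; the difference is only in how much work each does as the product count grows.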

I've changed the measure, but I'm still running out of memory. I've tried both the SUMX and the CALCULATE versions, and neither can handle the largest product category, which has only 20k products with sales (the other 50k have no sales against them). Below is the DAX query from Performance Analyzer for a category that does return. If there is any more advice, I'd appreciate the help. I'll experiment with SUMMARIZE and CALCULATETABLE to see if I can reduce the memory usage. Many thanks for considering my request.

```dax
// DAX Query
DEFINE
    VAR __DS0FilterTable =
        TREATAS({"Bianchi Duty Gear", "Bianchi"}, 'Products'[Product Subcategory])

    VAR __DS0Core =
        SUMMARIZECOLUMNS(
            ROLLUPADDISSUBTOTAL('Products'[Item Number], "IsGrandTotalRowTotal"),
            __DS0FilterTable,
            "Test_Rank", 'Sales'[Test Rank],
            "Cumulative_Sales_Amount", 'Sales'[Cumulative Sales Amount],
            "Test_Inv_Amount", 'Sales'[Test Inv Amount]
        )

    VAR __DS0PrimaryWindowed =
        TOPN(502, __DS0Core, [IsGrandTotalRowTotal], 0, [Test_Rank], 1, 'Products'[Item Number], 1)

EVALUATE
    __DS0PrimaryWindowed

ORDER BY
    [IsGrandTotalRowTotal] DESC, [Test_Rank], 'Products'[Item Number]
```
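To read the Performance Analyzer query: in DAX `TOPN`, an order argument of 0 means descending and 1 means ascending, so the visual asks for the grand-total row first, then items by ascending `Test_Rank`, then by item number, and keeps 502 rows. A simplified Python model of that windowing step is sketched below; the row shape and the `primary_window` name are assumptions. In this model, the window is chosen by rank, so a rank must already exist for every item that passes the subcategory filter, and the 502-row limit does not by itself shrink that part of the work.

```python
# Simplified model of the query's windowing step (hypothetical row shape):
#   TOPN(502, __DS0Core, [IsGrandTotalRowTotal], 0, [Test_Rank], 1, 'Products'[Item Number], 1)
# In DAX TOPN, order 0 = descending and 1 = ascending.

def primary_window(rows, n=502):
    """rows: dicts with 'is_grand_total' (0 or 1), 'rank' and 'item'.

    Assumes exactly one grand-total row; its rank/item are never compared
    because its first sort key already differs from every other row.
    """
    ordered = sorted(
        rows,
        # Negate the flag so the grand-total row (1) sorts first, i.e. descending.
        key=lambda r: (-r["is_grand_total"], r["rank"], r["item"]),
    )
    return ordered[:n]


if __name__ == "__main__":
    # Every item needs a rank before the window can be picked.
    items = [{"is_grand_total": 0, "rank": r, "item": f"I{r:05d}"} for r in range(1000, 0, -1)]
    grand = {"is_grand_total": 1, "rank": None, "item": None}
    window = primary_window(items + [grand])
    print(len(window), window[0]["is_grand_total"], window[1]["rank"], window[-1]["rank"])  # 502 1 1 501
```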
