- Power BI forums

- Updates

- News & Announcements

- Get Help with Power BI

- Desktop

- Service

- Report Server

- Power Query

- Mobile Apps

- Developer

- DAX Commands and Tips

- Custom Visuals Development Discussion

- Health and Life Sciences

- Power BI Spanish forums

- Translated Spanish Desktop

- Power Platform Integration - Better Together!

- Power Platform Integrations (Read-only)

- Power Platform and Dynamics 365 Integrations (Read-only)

- Training and Consulting

- Instructor Led Training

- Dashboard in a Day for Women, by Women

- Galleries

- Community Connections & How-To Videos

- COVID-19 Data Stories Gallery

- Themes Gallery

- Data Stories Gallery

- R Script Showcase

- Webinars and Video Gallery

- Quick Measures Gallery

- 2021 MSBizAppsSummit Gallery

- 2020 MSBizAppsSummit Gallery

- 2019 MSBizAppsSummit Gallery

- Events

- Ideas

- Custom Visuals Ideas

- Issues

- Issues

- Events

- Upcoming Events

- Community Blog

- Power BI Community Blog

- Custom Visuals Community Blog

- Community Support

- Community Accounts & Registration

- Using the Community

- Community Feedback

Register now to learn Fabric in free live sessions led by the best Microsoft experts. From Apr 16 to May 9, in English and Spanish.

- Power BI forums

- Forums

- Get Help with Power BI

- Desktop

- Calculate between many to many tables

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Calculate between many to many tables

I have 3 tables with a many to one and then one to many relationship.

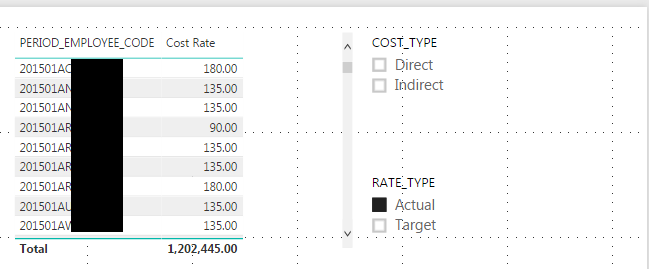

Cost Rates:

This table has different types and pieces of an hourly cost rate. The user would need to select what rate types and cost types are applicate. Then the calculations need to be performed using the sum of the cost_rates that match.

For this reason, I created a Cost Rate measure in the Workforce table:

Cost Rate = SUM('Cost Rates'[COST_RATE])

This value will dynamically change based on any filtering on the "Rate Type" and "Cost Type" fields.

This works. You can see below, that when the slicers are selected, the cost rate for the person, for the period is updated.

So that works well.

Now, what we really need to do, is multiple that cost rate by the number of hours in the hours table.

For this, I created a measure in the Hours table: Cost = SUM(Hours[CR_HRS]) * [Cost Rate]

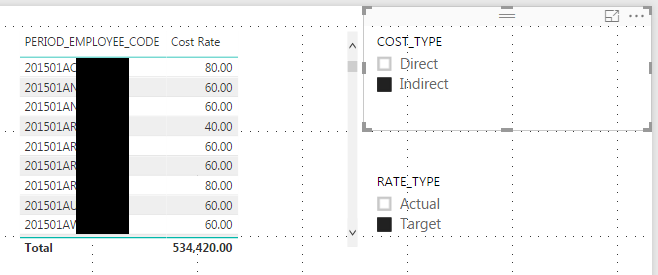

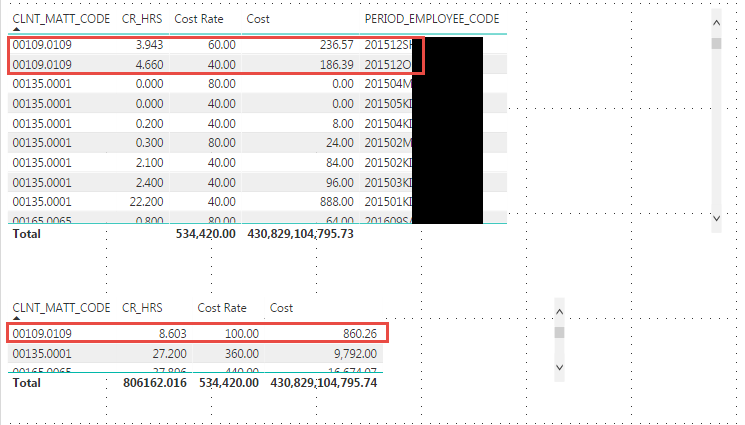

However, when I use the Cost measure I get an unfortunate behavior. Take a look at these two tables:

In the first table, the Cost is calculated correctly, because there is no summerization.

3.943 * 60 = 236.57 GOOD

4.660 * 40 = 186.39 GOOD

However, in the second table, I removed the period employee code field so that I could report on the Cost for the entire CLNT_MATT_CODE 00109.0109

In the second table the CR_HRS is summed correctly

3.943 + 4.660 = 8.603 GOOD

But the Cost Rate is summed... This is a rate, it should not be summed. The Cost value is wrong.

6.603 * 100 = 860.26 BAD

What I want here is:

236.57 + 186.39 = 422.96

How do I get Power BI to calculate this measure correctly?

Important: These tables are vastly simplified. There are several dementions in the Hours table not displayed, so I need to be able to show the cost for any summerization the report builder desires. Also, the Cost Rate has to be dynamically determined, because the user can use the Cost Type and Rate Type slicers to choose what cost rate parts they want to use.

This seems like it is logically not very hard

1) The Cost rate in the Workspace table is the sum of the Cost Rate table based on the slicer values chosen.

2) The Cost in the Hours table is the CR_HRS * Cost Rate for each row in the table, then sum that value as required for the visualizations.

How do I get this to work?

Solved! Go to Solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well, I have been posting on programming help forums like this one for over a decade now. And I know the rules... Always google your problem before posting it. Well, I have been googling and working on this problem for over a week, so I decided to post it.

But, after posting I decided to do just one more google search. Well, I found an article that explained how to use SUMX and I think it is doing exactly what I need it to do.

All I did from my above example was change the Cost measure to:

Cost = sumx(Hours,Hours[CR_HRS] * [Cost Rate])

It seems to work, I'll have to use it in my full, more complex dataset to be sure, but it seems right, unless someone has another idea?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well, I have been posting on programming help forums like this one for over a decade now. And I know the rules... Always google your problem before posting it. Well, I have been googling and working on this problem for over a week, so I decided to post it.

But, after posting I decided to do just one more google search. Well, I found an article that explained how to use SUMX and I think it is doing exactly what I need it to do.

All I did from my above example was change the Cost measure to:

Cost = sumx(Hours,Hours[CR_HRS] * [Cost Rate])

It seems to work, I'll have to use it in my full, more complex dataset to be sure, but it seems right, unless someone has another idea?

Helpful resources

Microsoft Fabric Learn Together

Covering the world! 9:00-10:30 AM Sydney, 4:00-5:30 PM CET (Paris/Berlin), 7:00-8:30 PM Mexico City

Power BI Monthly Update - April 2024

Check out the April 2024 Power BI update to learn about new features.

| User | Count |

|---|---|

| 109 | |

| 98 | |

| 77 | |

| 66 | |

| 54 |

| User | Count |

|---|---|

| 144 | |

| 104 | |

| 100 | |

| 86 | |

| 64 |