
COMPOSITE DATA MODEL WITH NUMEROUS APIS

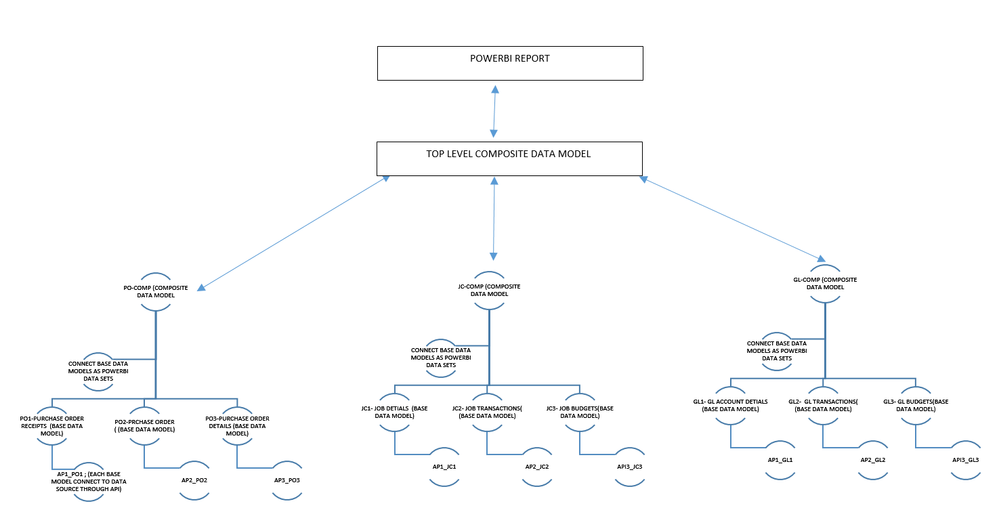

OK, I'm a newbie, and we're having trouble loading larger datasets into Power BI: the APIs either crash, reach an out-of-memory status, or fail when a scheduled refresh runs. So we came up with the following composite data model concept.

Steps:

1. Create base data models through the APIs (some base data models have just a single large data table, e.g. purchase order receipts).

2. Then build a higher-level composite data model on top of these base data models. The composite model gets its data from Power BI datasets, i.e. the base data models. All key measures required for reporting are defined only at this level.

3. Then schedule a refresh for each base data model, with a specific time gap between them. The idea is to reduce the load on the APIs and the server during refresh.

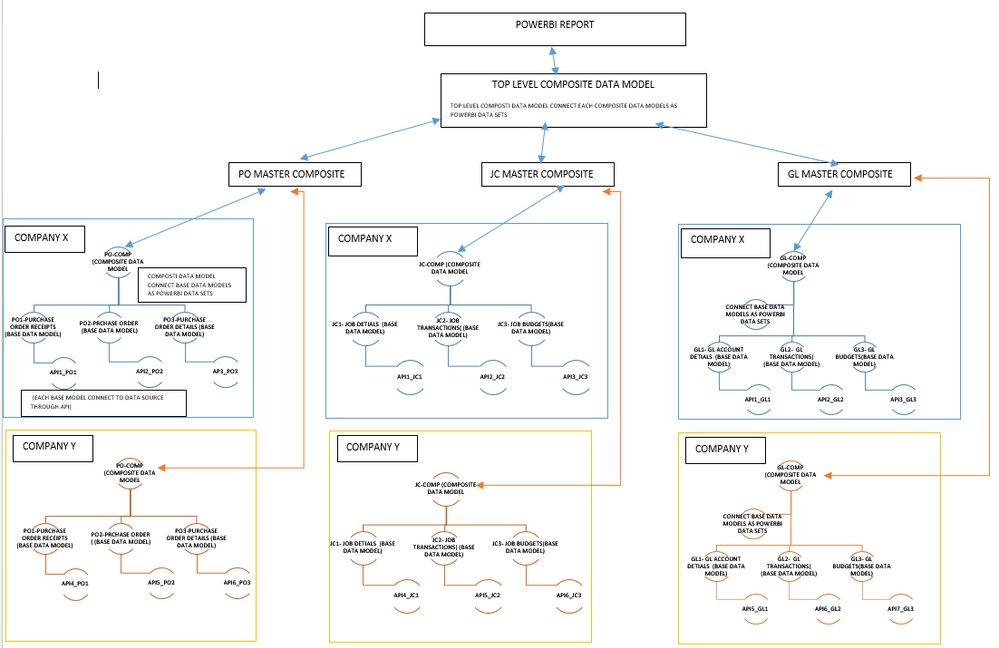

4. In the long run, expand the model by adding similar composite data models for multiple companies, and provide one consolidated Power BI report for the whole company.
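To make step 3 concrete, here is a rough sketch of how the staggered refresh slots could be worked out. It is only an illustration: the dataset names, the 01:00 start time, and the 30-minute gap are made-up assumptions, and the helper `staggered_schedule` is hypothetical. It only computes time slots and does not talk to Power BI.

```python
from datetime import datetime, timedelta


def staggered_schedule(base_models, start, gap_minutes):
    """Assign each base data model its own refresh slot, spaced gap_minutes apart."""
    gap = timedelta(minutes=gap_minutes)
    return [(name, start + i * gap) for i, name in enumerate(base_models)]


# Hypothetical base data model names, one per large API source.
base_models = ["purchase_order_receipts", "sales_orders", "inventory"]

# Assumed values: first refresh at 01:00, 30 minutes between refreshes.
schedule = staggered_schedule(base_models, datetime(2024, 1, 1, 1, 0), 30)

for name, when in schedule:
    print(f"{when:%H:%M}  {name}")
```

The gap would need to be at least as long as the slowest base refresh, otherwise the refreshes overlap and the load on the APIs is not actually spread out.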

Tell me how crazy this idea is and whether there is any other way to optimize it. All your expert ideas are highly appreciated.


Hi @ravjay ,

Are these datasets under the same tenant?

Best Regards,

Jay

If this post helps, please consider accepting it as the solution to help other members find it.


Yes,

Each dataset represents an individual company (X, Y) under one tenant.
