
out of memory with dax

Hi Guys,

I am running out of memory with the following expression. I should add that it works if I use ALL as a filter in the CALCULATE of the first variable.

    # Piezas 30 dias =
    VAR ultimafecha =
        CALCULATE(
            MAX( Ventas[Fecha] )
        )
    VAR resultado =
        CALCULATE(
            [# Piezas],
            DATESINPERIOD ( Calendario[Fecha], ultimafecha, -30, DAY )
        )
    RETURN
        resultado

This is the first time this error has happened to me. Any help would be greatly appreciated.

Thanks,

Reynaldo
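For reference, the variant that reportedly works might look like the sketch below. The post does not say which table or column was passed to ALL, so `ALL ( Ventas )` is an assumption. Note that this variant changes the meaning of the measure: the last date is taken over the whole sales table instead of the current filter context.

```dax
# Piezas 30 dias =
// Assumption: ALL ( Ventas ) stands in for whatever argument the poster actually used.
VAR ultimafecha =
    CALCULATE (
        MAX ( Ventas[Fecha] ),
        ALL ( Ventas )  // ignore filters on Ventas, so the last date is the same in every cell
    )
VAR resultado =
    CALCULATE (
        [# Piezas],
        DATESINPERIOD ( Calendario[Fecha], ultimafecha, -30, DAY )
    )
RETURN
    resultado
```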


I have tried it with and without the CALCULATE:

    # Piezas =
    // total pieces sold; blank if there are no sales
    CALCULATE( SUM( Ventas[Piezas] ) )

Can you comment out the second variable and just return the first?

    # Piezas 30 dias =
    VAR ultimafecha =
        CALCULATE(
            MAX( Ventas[Fecha] )
        )
    RETURN ultimafecha

Does that give the same error?


I did. It returns the maximum date of the sales table in the current filter context.


Is this running in Power BI Desktop?

Is the dataset imported or DirectQuery?

I can't see anything wrong with your DAX. Are you able to share a sanitised .pbix file?


This is running on Desktop. The data is imported. I can't share the data, but it is 75 million rows.


Have you tried deploying to the Power BI service and seeing how it runs there?

What data type is the column you're summing? It's worth trying to switch it to fixed decimal.

Beyond that, you would have to get into DAX Studio and see what is happening with your data model memory-wise. E.g. do you have lots of calculated columns?
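One way to start in DAX Studio is to run the measure on its own, outside any visual. The query below is a minimal sketch, not a confirmed fix: it redefines the logic as a query-scoped measure (the name `Piezas 30 dias prueba` is hypothetical) and evaluates it once at the grand total. If this runs without a memory error, the problem more likely depends on how the visual groups the data than on the measure itself.

```dax
DEFINE
    // Hypothetical test measure; it exists only for the duration of this query.
    MEASURE Ventas[Piezas 30 dias prueba] =
        VAR ultimafecha = MAX ( Ventas[Fecha] )
        RETURN
            CALCULATE (
                [# Piezas],
                DATESINPERIOD ( Calendario[Fecha], ultimafecha, -30, DAY )
            )

EVALUATE
// Single-row result: the measure evaluated with no filters applied.
ROW ( "Piezas 30 dias", [Piezas 30 dias prueba] )
```

Turning on Server Timings before running the query shows how much of the work is done by the storage engine versus the formula engine.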


@bcdobbs thanks for the help. The data type is whole number. Yes, I published it, but the measure is marked as an error. There are no calculated columns; the data comes straight out of the DB. I will take a look at it in DAX Studio or open a ticket with Microsoft support.

Once again thanks


Sorry I've not been more help; they're very difficult problems to solve remotely.

When you say the measure is marked with an error does it give any more details?

The only other thing to ask is how is your model structured? It should be able to cope easily with your measure even with 75M rows so I'm wondering if you have a proper star schema?

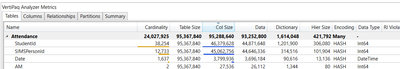

If you do look at it in dax studio go Advanced >> View Metrics and see if there are any columns that have high cardinality that you don't actually use:
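View Metrics reports cardinality for every column at once. As a rough manual check on just the columns involved in this measure, a query along these lines could be run in DAX Studio. It is only a sketch and assumes nothing beyond the table and column names already used in the thread.

```dax
EVALUATE
ROW (
    "Rows in Ventas", COUNTROWS ( Ventas ),
    "Distinct Ventas[Fecha]", DISTINCTCOUNT ( Ventas[Fecha] ),  // cardinality of the sales date column
    "Rows in Calendario", COUNTROWS ( Calendario )
)
```

If the distinct count of `Ventas[Fecha]` were far larger than the number of rows in `Calendario`, that could indicate the column carries a time component, which raises its cardinality. Whether that is the case here is not known from the thread.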
